kMaX-DeepLab: k-means Mask Transformer

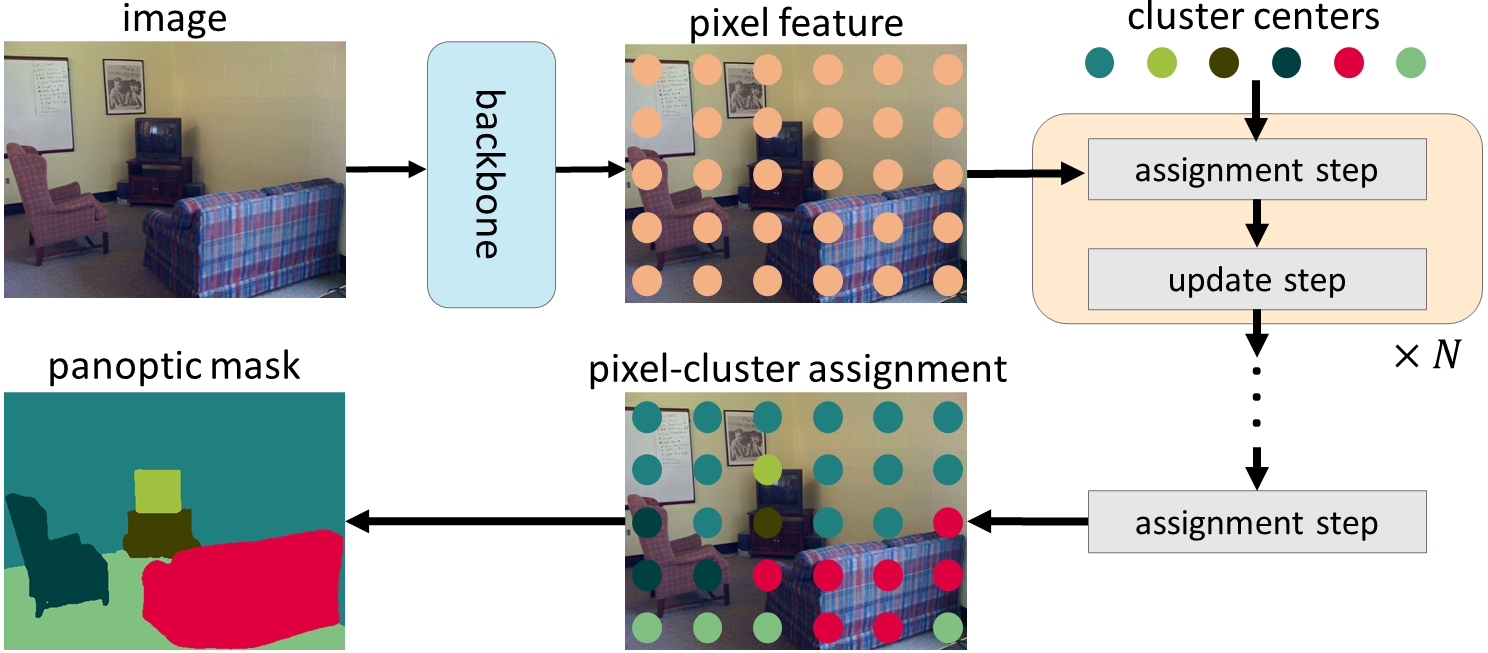

The rise of transformers in vision tasks not only advances network backbone designs, but also starts a brand-new page to achieve end-to-end image recognition (e.g., object detection and panoptic segmentation). Originated from Natural Language Processing (NLP), transformer architectures, consisting of self-attention and cross-attention, effectively learn long-range interactions between elements in a sequence. However, we observe that most existing transformer-based vision models simply borrow the idea from NLP, neglecting the crucial difference between languages and images, particularly the extremely large sequence length of spatially flattened pixel features. This subsequently impedes the learning in cross-attention between pixel features and object queries. In this paper, we rethink the relationship between pixels and object queries and propose to reformulate the cross-attention learning as a clustering process. Inspired by the traditional k-means clustering algorithm, we develop a k-means Mask Xformer (kMaX-DeepLab) for segmentation tasks, which not only improves the state-of-the-art, but also enjoys a simple and elegant design. As a result, our kMaX-DeepLab achieves a new state-of-the-art performance on COCO val set with 58.0% PQ, Cityscapes val set with 68.4% PQ, 44.0% AP, and 83.5% mIoU, and ADE20K val set with 50.9% PQ and 55.2% mIoU without test-time augmentation or external dataset. We hope our work can shed some light on designing transformers tailored for vision tasks. TensorFlow code and models are available at https://github.com/google-research/deeplab2 A PyTorch re-implementation is also available at https://github.com/bytedance/kmax-deeplab

PDF Abstract

MS COCO

MS COCO

Cityscapes

Cityscapes

ADE20K

ADE20K