Learning Class-Transductive Intent Representations for Zero-shot Intent Detection

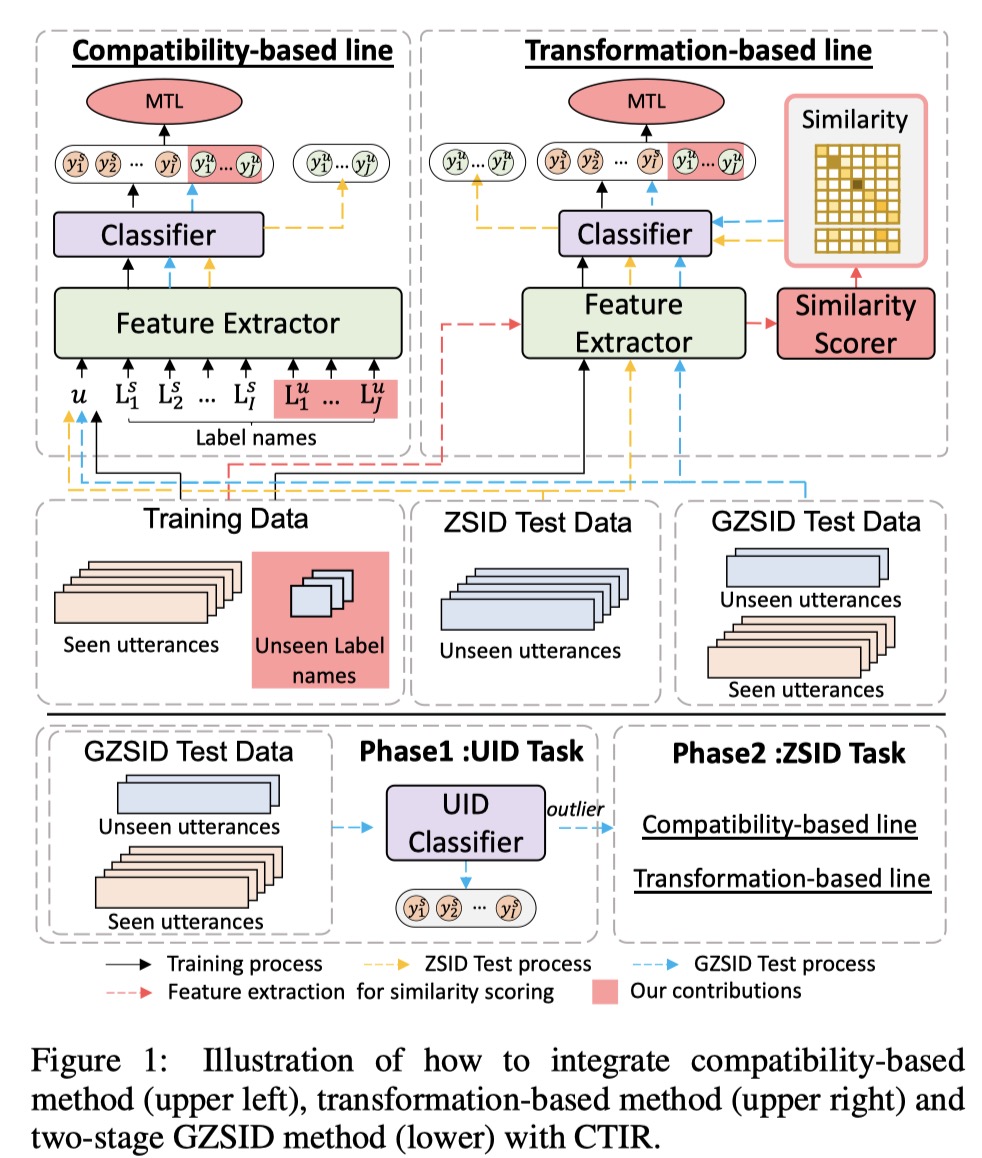

Zero-shot intent detection (ZSID) aims to handle continuously emerging intents for which no annotated training data is available. However, existing ZSID systems suffer from two limitations: 1) they model the relationship between seen and unseen intents poorly; 2) they cannot effectively recognize unseen intents under the generalized zero-shot intent detection (GZSID) setting. A critical problem behind both limitations is that the representations of unseen intents cannot be learned during the training stage. To address this problem, we propose a novel framework that utilizes unseen class labels to learn Class-Transductive Intent Representations (CTIR). Specifically, we allow the model to predict unseen intents during training, with the corresponding label names serving as input utterances. On this basis, we introduce a multi-task learning objective, which encourages the model to learn the distinctions among intents, and a similarity scorer, which estimates the connections among intents more accurately. CTIR is easy to implement and can be integrated with existing methods. Experiments on two real-world datasets show that CTIR brings considerable improvement to the baseline systems.
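The core idea of using label names as input utterances can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `label_to_utterance` and `augment_with_unseen_labels`, the label-normalization rule, and the example intents are assumptions made purely for illustration. The multi-task objective and the similarity scorer are not shown.

```python
import re
from typing import List, Tuple

Example = Tuple[str, str]  # (utterance text, intent label)


def label_to_utterance(label: str) -> str:
    """Turn a label name into a pseudo-utterance (hypothetical normalization).

    Splits CamelCase and underscores, then lowercases,
    e.g. "BookRestaurant" becomes "book restaurant".
    """
    spaced = re.sub(r"(?<=[a-z])(?=[A-Z])", " ", label)
    return spaced.replace("_", " ").lower()


def augment_with_unseen_labels(
    seen_examples: List[Example], unseen_labels: List[str]
) -> List[Example]:
    """Append one (label name as utterance, label) pair per unseen intent.

    The classifier trained on the result has an output class for every
    unseen intent, even though no annotated utterance exists for it.
    """
    pseudo_examples = [(label_to_utterance(lbl), lbl) for lbl in unseen_labels]
    return list(seen_examples) + pseudo_examples


# Illustrative data only; the intent names are assumed for the example.
seen = [("play some jazz", "PlayMusic")]
train_set = augment_with_unseen_labels(seen, ["BookRestaurant"])
print(train_set)
```

Under these assumptions, the only change to a standard training pipeline is the extra pseudo-examples and the enlarged label space, which is consistent with the claim that the approach can be integrated with existing methods.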


SNIPS
