Learning Domain-Invariant Subspace using Domain Features and Independence Maximization

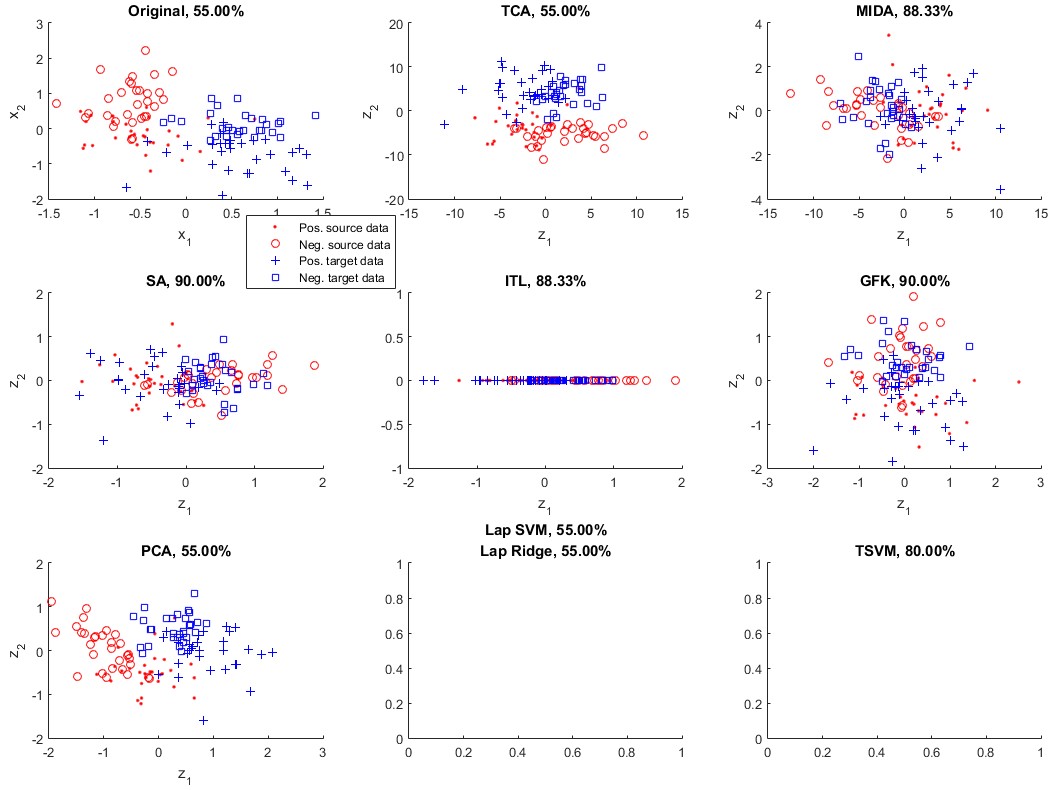

Domain adaptation algorithms are useful when the training and test data follow different distributions. In this paper, we focus on instrumental variation and time-varying drift in the field of sensors and measurement, which can be viewed as discrete and continuous distributional changes in the feature space, respectively. We propose maximum independence domain adaptation (MIDA) and semi-supervised MIDA (SMIDA) to address this problem. Domain features are first defined to describe the background information of a sample, such as the device label and acquisition time. MIDA then learns a subspace that is maximally independent of the domain features, thereby reducing the inter-domain discrepancy in distributions. A feature augmentation strategy is also designed to project samples according to their backgrounds, which further improves the adaptation. The proposed algorithms are flexible and fast. Their effectiveness is verified by experiments on synthetic datasets and on four real-world datasets from sensing, measurement, and computer vision. They can greatly enhance the practicality of sensor systems and extend the application scope of existing domain adaptation algorithms by handling different kinds of distributional change in a uniform way.
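
The idea of learning a subspace that is independent of the domain features can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes the Hilbert-Schmidt independence criterion (HSIC) with linear kernels as the dependence measure, which the abstract does not specify, and it assumes that feature augmentation means concatenating the domain features to each sample. The names `fit_projection`, `augment`, and the trade-off weight `mu` are hypothetical.

```python
import numpy as np


def centering_matrix(n):
    """Return H = I - (1/n) 11^T, which removes the mean over n samples."""
    return np.eye(n) - np.ones((n, n)) / n


def hsic(K, L):
    """Empirical HSIC between two n x n kernel matrices (assumed dependence measure)."""
    n = K.shape[0]
    H = centering_matrix(n)
    return np.trace(K @ H @ L @ H) / (n - 1) ** 2


def augment(X, D):
    """Assumed form of feature augmentation: append domain features to each sample."""
    return np.hstack([X, D])


def fit_projection(X, D, n_components, mu=1.0):
    """Find orthonormal W that keeps variance of X @ W (weighted by mu)
    while penalizing linear-kernel HSIC between X @ W and D."""
    n = X.shape[0]
    H = centering_matrix(n)
    K_d = D @ D.T                            # linear kernel on domain features
    M = X.T @ (mu * H - H @ K_d @ H) @ X     # variance term minus dependence term
    M = (M + M.T) / 2                        # symmetrize against round-off
    _, eigvecs = np.linalg.eigh(M)           # eigenvalues in ascending order
    return eigvecs[:, ::-1][:, :n_components]  # eigenvectors of the largest eigenvalues


# Toy data: two "devices" whose samples differ by a shift along the first axis.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(size=(100, 2)),
               rng.normal(size=(100, 2)) + np.array([5.0, 0.0])])
D = np.repeat(np.eye(2), 100, axis=0)        # one-hot device label as domain feature

W = fit_projection(X, D, n_components=1)
Z = X @ W                                    # samples in the learned subspace

dependence_before = hsic(X @ X.T, D @ D.T)
dependence_after = hsic(Z @ Z.T, D @ D.T)
```

In this toy setting the learned direction should largely ignore the axis along which the two devices differ, so `dependence_after` is expected to be much smaller than `dependence_before`. To try the assumed augmentation, pass `augment(X, D)` in place of `X` and project the augmented samples with the resulting `W`.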
