Learning Human-Object Interactions by Graph Parsing Neural Networks

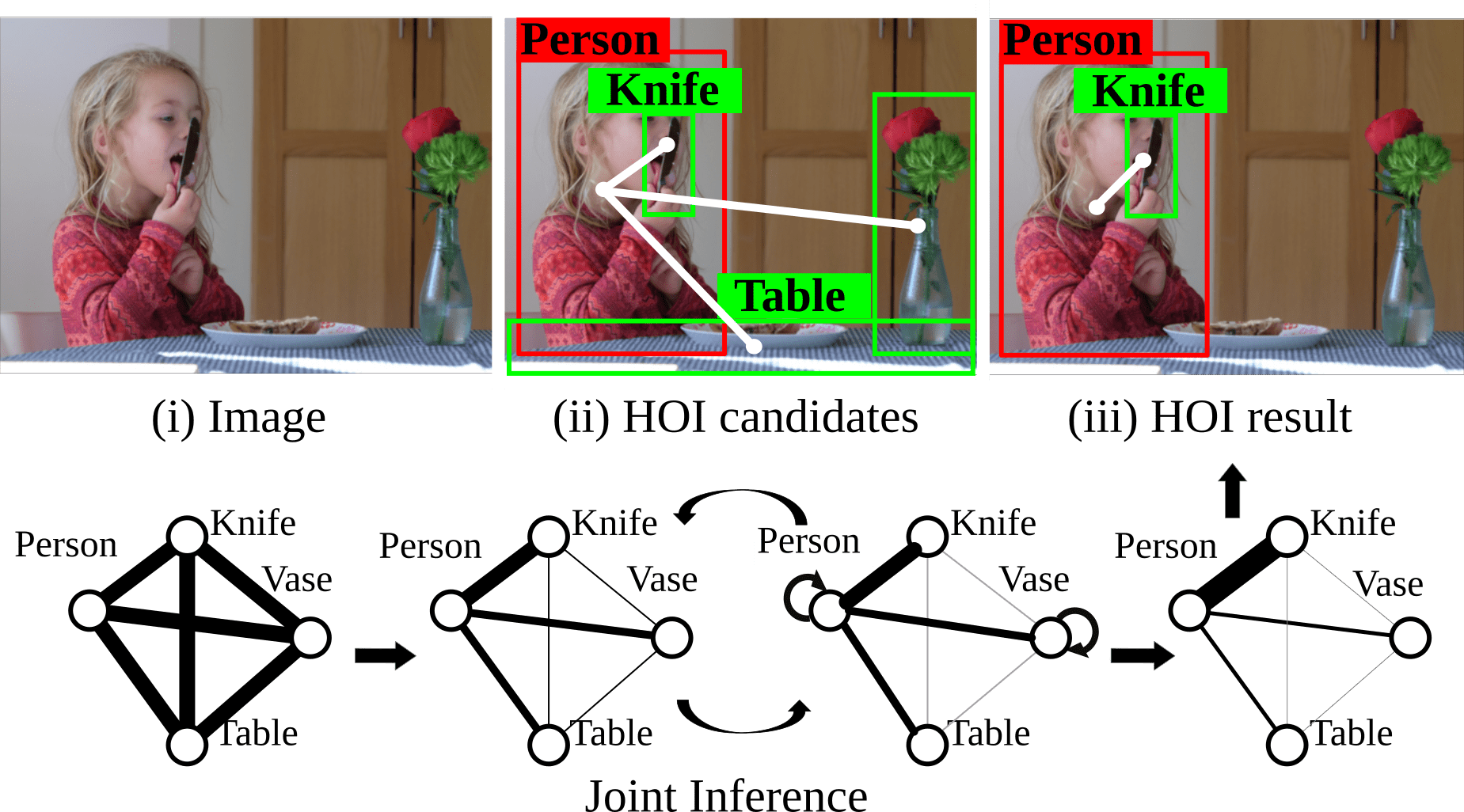

This paper addresses the task of detecting and recognizing human-object interactions (HOI) in images and videos. We introduce the Graph Parsing Neural Network (GPNN), a framework that incorporates structural knowledge while being differentiable end-to-end. For a given scene, GPNN infers a parse graph that includes i) the HOI graph structure represented by an adjacency matrix, and ii) the node labels. Within a message passing inference framework, GPNN iteratively computes the adjacency matrices and node labels. We extensively evaluate our model on three HOI detection benchmarks on images and videos: HICO-DET, V-COCO, and CAD-120 datasets. Our approach significantly outperforms state-of-art methods, verifying that GPNN is scalable to large datasets and applies to spatial-temporal settings. The code is available at https://github.com/SiyuanQi/gpnn.

PDF Abstract ECCV 2018 PDF ECCV 2018 Abstract

MS COCO

MS COCO

HICO-DET

HICO-DET

V-COCO

V-COCO

CAD-120

CAD-120