Learning image-to-image translation using paired and unpaired training samples

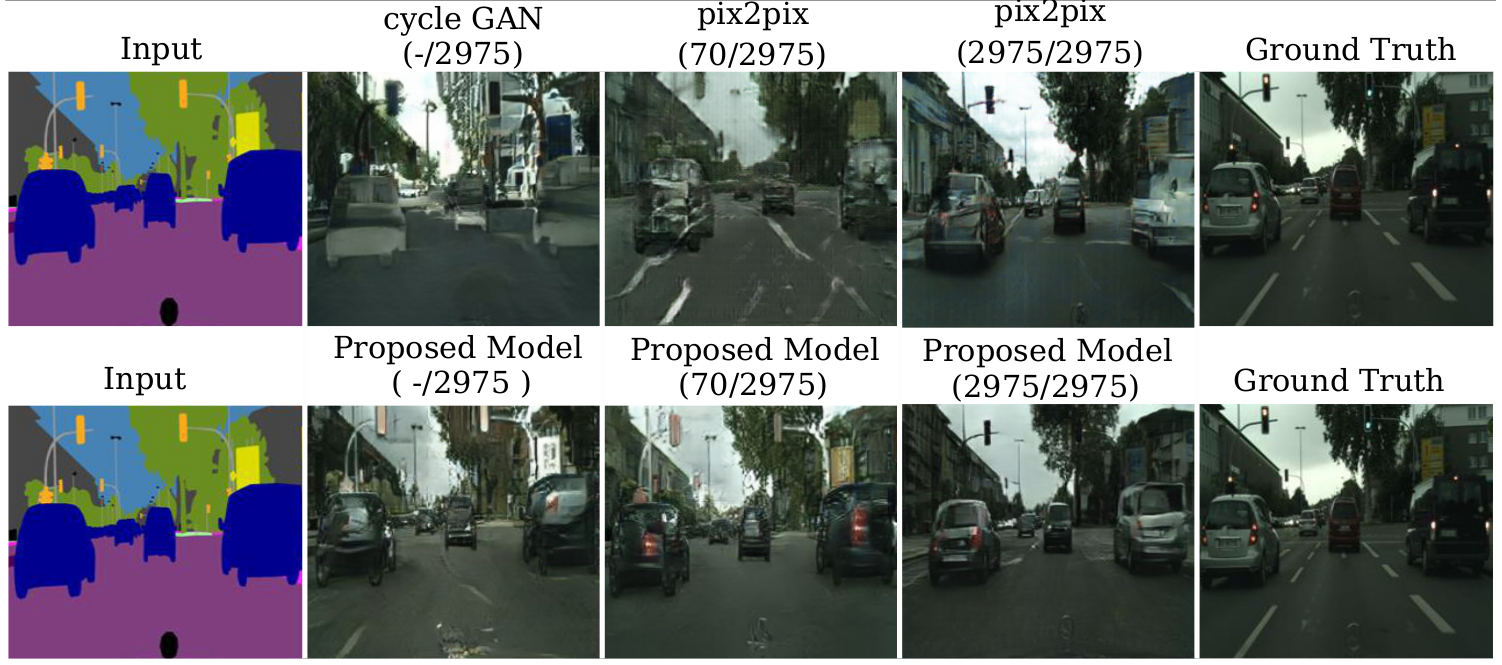

Image-to-image translation is a general name for a task where an image from one domain is converted to a corresponding image in another domain, given sufficient training data. Traditionally, different approaches have been proposed depending on whether aligned image pairs or two sets of (unaligned) examples from both domains are available for training. While paired training samples might be difficult to obtain, the unpaired setup leads to a highly under-constrained problem and inferior results. In this paper, we propose a new general-purpose image-to-image translation model that is able to utilize both paired and unpaired training data simultaneously. We compare our method with two strong baselines and obtain both qualitatively and quantitatively improved results. Our model also outperforms the baselines when trained on purely paired or purely unpaired data. To our knowledge, this is the first work to consider such a hybrid setup in image-to-image translation.
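To make the hybrid setup concrete, the following is a minimal sketch of one way a training objective could draw on both kinds of data at once: a supervised reconstruction term on aligned pairs plus a cycle-consistency term on unaligned samples. This is an illustrative assumption, not the objective used in the paper; the names `hybrid_loss`, `g_xy`, `g_yx`, and `cycle_weight` are hypothetical, images are represented as flat lists of floats, and adversarial terms are omitted entirely.

```python
from typing import Callable, List, Sequence, Tuple

Image = List[float]  # toy stand-in for an image: a flat list of pixel values
Translator = Callable[[Image], Image]


def l1(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute difference between two equally sized images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def hybrid_loss(
    g_xy: Translator,                      # hypothetical translator, domain X -> Y
    g_yx: Translator,                      # hypothetical translator, domain Y -> X
    paired: Sequence[Tuple[Image, Image]],  # aligned (x, y) pairs
    unpaired_x: Sequence[Image],           # samples from X with no partner
    unpaired_y: Sequence[Image],           # samples from Y with no partner
    cycle_weight: float = 1.0,             # assumed weighting, not from the paper
) -> float:
    # Supervised term: the translation of x should match its aligned partner y.
    sup = [l1(g_xy(x), y) for x, y in paired]

    # Cycle term: translating there and back should recover the input,
    # which constrains the mapping when no aligned target exists.
    cyc = [l1(g_yx(g_xy(x)), x) for x in unpaired_x]
    cyc += [l1(g_xy(g_yx(y)), y) for y in unpaired_y]

    # Either data source may be empty, covering the purely paired
    # and purely unpaired cases as well as the hybrid one.
    sup_term = sum(sup) / len(sup) if sup else 0.0
    cyc_term = sum(cyc) / len(cyc) if cyc else 0.0
    return sup_term + cycle_weight * cyc_term
```

Passing an empty `paired` list reduces the sketch to a purely unpaired objective, and passing empty unpaired lists reduces it to a purely supervised one, mirroring the three training regimes the abstract mentions.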


Cityscapes


Mapillary Vistas Dataset
