LM-Critic: Language Models for Unsupervised Grammatical Error Correction

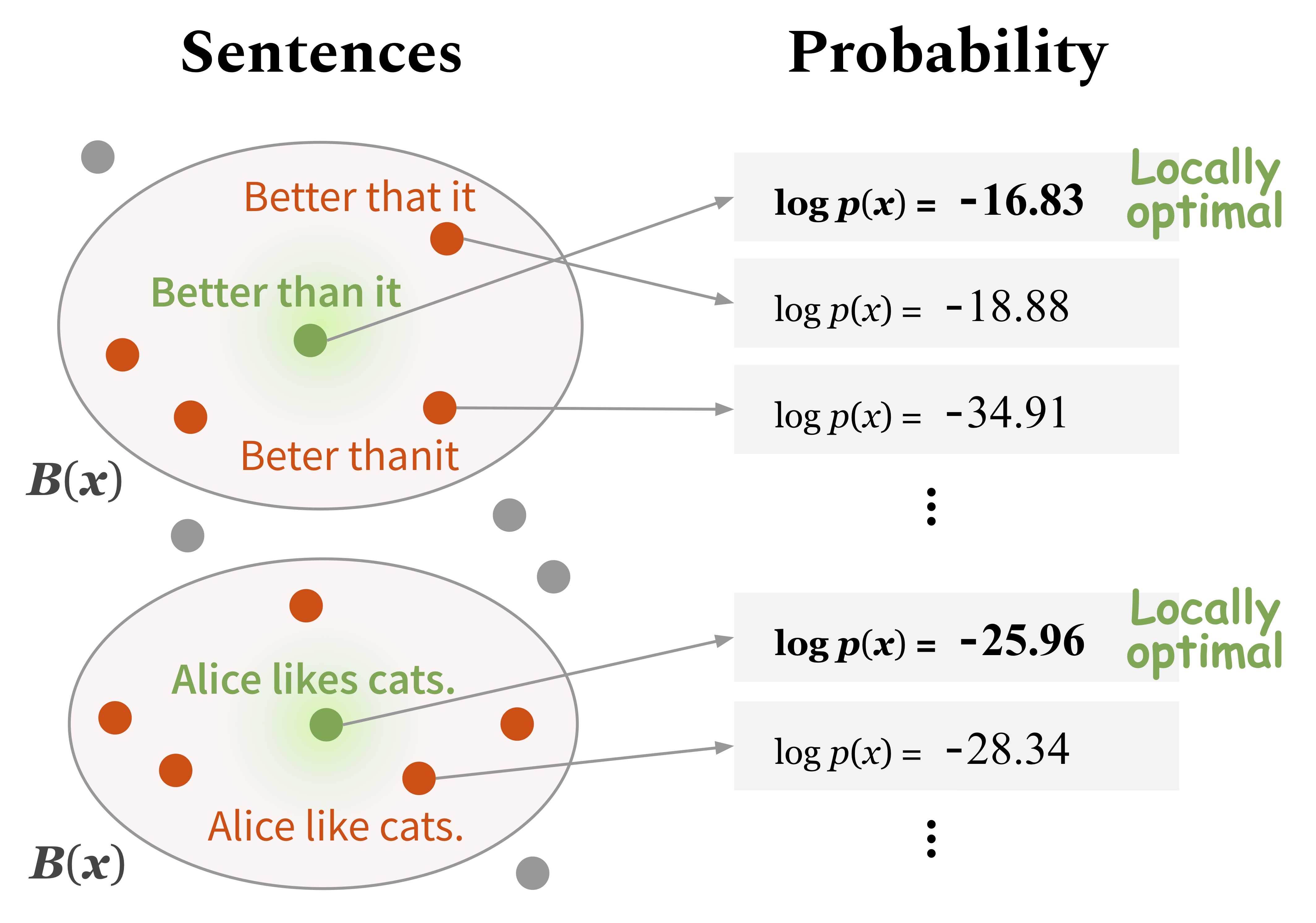

Training a model for grammatical error correction (GEC) requires a set of labeled ungrammatical / grammatical sentence pairs, but manually annotating such pairs can be expensive. Recently, the Break-It-Fix-It (BIFI) framework has demonstrated strong results on learning to repair a broken program without any labeled examples, but this relies on a perfect critic (e.g., a compiler) that returns whether an example is valid or not, which does not exist for the GEC task. In this work, we show how to leverage a pretrained language model (LM) in defining an LM-Critic, which judges a sentence to be grammatical if the LM assigns it a higher probability than its local perturbations. We apply this LM-Critic and BIFI along with a large set of unlabeled sentences to bootstrap realistic ungrammatical / grammatical pairs for training a corrector. We evaluate our approach on GEC datasets across multiple domains (CoNLL-2014, BEA-2019, GMEG-wiki and GMEG-yahoo) and show that it outperforms existing methods in both the unsupervised setting (+7.7 F0.5) and the supervised setting (+0.5 F0.5).

PDF Abstract EMNLP 2021 PDF EMNLP 2021 Abstract

JFLEG

JFLEG

CoNLL-2014 Shared Task: Grammatical Error Correction

CoNLL-2014 Shared Task: Grammatical Error Correction

WI-LOCNESS

WI-LOCNESS