Local Class-Specific and Global Image-Level Generative Adversarial Networks for Semantic-Guided Scene Generation

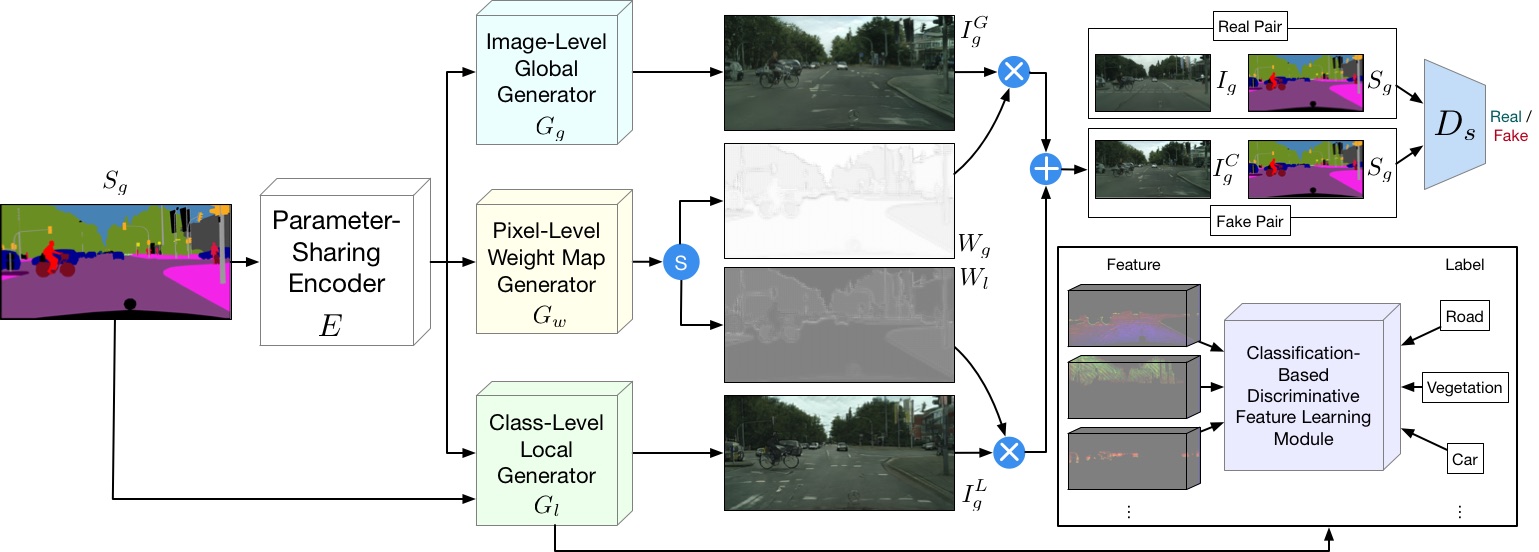

In this paper, we address the task of semantic-guided scene generation. One open challenge in scene generation is the difficulty of generating small objects and detailed local textures, a problem widely observed in global image-level generation methods. To tackle this issue, we consider learning scene generation in a local context and design a local class-specific generative network guided by semantic maps. The network constructs and learns separate sub-generators, each concentrating on the generation of a different class, and is thereby able to provide more scene details. To learn more discriminative class-specific feature representations for the local generation, we also propose a novel classification module. To combine the advantages of both global image-level and local class-specific generation, we design a joint generation network with an embedded attention fusion module and a dual-discriminator structure. Extensive experiments on two scene image generation tasks show superior generation performance of the proposed model, which establishes state-of-the-art results by large margins on both tasks and on challenging public benchmarks. The source code and trained models are available at https://github.com/Ha0Tang/LGGAN.
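The two ideas in the abstract, class-specific local generation guided by a semantic map and attention-based fusion with a global result, can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function names (`class_masks`, `local_generation`, `attention_fusion`), the array shapes, and the choice of a per-pixel softmax over two attention logits are all assumptions made for illustration, and the sub-generator outputs are stand-in arrays, not trained networks.

```python
import numpy as np

def class_masks(semantic_map, num_classes):
    """Hypothetical helper: turn an integer label map (H, W) into
    binary per-class masks of shape (num_classes, H, W)."""
    classes = np.arange(num_classes)[:, None, None]
    return (classes == semantic_map[None]).astype(np.float32)

def local_generation(class_outputs, masks):
    """Hypothetical helper: keep each sub-generator output only inside
    its own class region, then sum the regions into one image.
    class_outputs: (C, H, W, 3), masks: (C, H, W) -> (H, W, 3)."""
    return (class_outputs * masks[..., None]).sum(axis=0)

def attention_fusion(global_img, local_img, logits):
    """Hypothetical helper: per-pixel softmax over two attention logits
    (2, H, W), used to weight the global and local images (H, W, 3)."""
    shifted = logits - logits.max(axis=0, keepdims=True)  # numerical stability
    weights = np.exp(shifted)
    weights /= weights.sum(axis=0, keepdims=True)
    return weights[0][..., None] * global_img + weights[1][..., None] * local_img

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    num_classes, height, width = 3, 4, 4
    semantic_map = rng.integers(0, num_classes, size=(height, width))
    # Stand-ins for the outputs of the class-specific sub-generators
    # and the global image-level generator.
    class_outputs = rng.random((num_classes, height, width, 3)).astype(np.float32)
    global_img = rng.random((height, width, 3)).astype(np.float32)
    attention_logits = rng.normal(size=(2, height, width)).astype(np.float32)

    masks = class_masks(semantic_map, num_classes)
    local_img = local_generation(class_outputs, masks)
    fused = attention_fusion(global_img, local_img, attention_logits)
    print(fused.shape)
```

In this sketch each pixel of the local image comes from exactly one sub-generator, the one whose class labels that pixel, and the fusion weights sum to one at every pixel, so the fused value always lies between the global and local values.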

Published at CVPR 2020.

Cityscapes

ADE20K

Dayton
