ML4ML: Automated Invariance Testing for Machine Learning Models

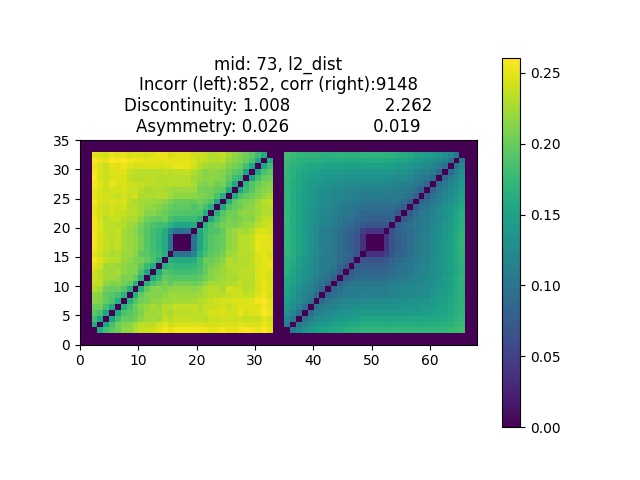

In machine learning (ML) workflows, determining the invariance qualities of an ML model is a common testing procedure. Traditionally, invariance qualities are evaluated using simple formula-based scores, e.g., accuracy. In this paper, we show that testing the invariance qualities of ML models can produce complex visual patterns that cannot be classified using simple formulas. To test ML models by analyzing such visual patterns automatically with other ML models, we propose a systematic framework that is applicable to a variety of invariance qualities. We demonstrate the effectiveness and feasibility of the framework by developing ML4ML models (assessors) for determining rotation-, brightness-, and size-variances of a collection of neural networks. Our testing results show that the trained ML4ML assessors can perform such analytical tasks with sufficient accuracy.
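
To make the idea of a testing-derived "visual pattern" concrete, the following is a minimal sketch, not the paper's implementation. It applies a transformation at several levels, records how much a model's outputs differ between every pair of levels, and stores the result as a matrix that could be viewed as a heatmap or passed to an assessor. The names `brightness`, `toy_model`, and `discrepancy_matrix`, as well as the mean absolute difference used as the discrepancy measure, are assumptions chosen for illustration only.

```python
import numpy as np


def brightness(images, level):
    """Hypothetical transformation: scale intensities by `level`, clipped to [0, 1]."""
    return np.clip(images * level, 0.0, 1.0)


def toy_model(images):
    """Hypothetical stand-in for a trained network: one score per image (mean intensity)."""
    return images.reshape(len(images), -1).mean(axis=1)


def discrepancy_matrix(model, images, transform, levels):
    """Return an (L, L) matrix whose entry (i, j) is the mean absolute difference
    between the model's outputs at transformation levels i and j.

    This measure is an illustrative assumption, not the one defined in the paper.
    """
    # outputs has shape (L, N): one row of model scores per transformation level.
    outputs = np.stack([model(transform(images, level)) for level in levels])
    # Broadcast to (L, L, N) pairwise differences, then average over the N images.
    pairwise = np.abs(outputs[:, None, :] - outputs[None, :, :])
    return pairwise.mean(axis=2)


rng = np.random.default_rng(0)
images = rng.random((32, 8, 8))      # synthetic data, for illustration only
levels = np.linspace(0.5, 1.5, 5)    # brightness factors to test

matrix = discrepancy_matrix(toy_model, images, brightness, levels)
np.set_printoptions(precision=3, suppress=True)
print(matrix)
```

A perfectly invariant model would yield an all-zero matrix. In practice the entries form patterns that a single score summarizes poorly, which is the motivation for training an assessor on such matrices rather than thresholding one number.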
