MMChat: Multi-Modal Chat Dataset on Social Media

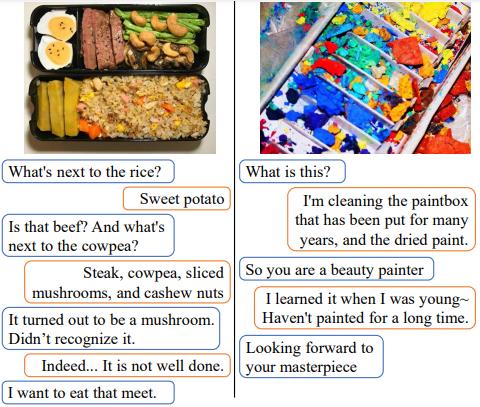

Incorporating multi-modal context into conversation is important for developing more engaging dialogue systems. In this work, we explore this direction by introducing MMChat, a large-scale Chinese multi-modal dialogue corpus (32.4M raw dialogues and 120.84K filtered dialogues). Unlike previous corpora that are crowd-sourced or collected from fictitious movies, MMChat contains image-grounded dialogues collected from real conversations on social media. In these conversations we observe a sparsity issue: a dialogue that starts from an image may drift to topics not grounded in the image as the conversation proceeds. To better investigate this issue, we manually annotate 100K dialogues from MMChat and filter the corpus accordingly, which yields MMChat-hf. We develop a benchmark model that addresses the sparsity issue in dialogue generation tasks by adapting the attention routing mechanism to image features. Experiments demonstrate the usefulness of incorporating image features and the effectiveness of handling their sparsity.

LREC 2022

MMChat

Visual Genome