MOROCCO: Model Resource Comparison Framework

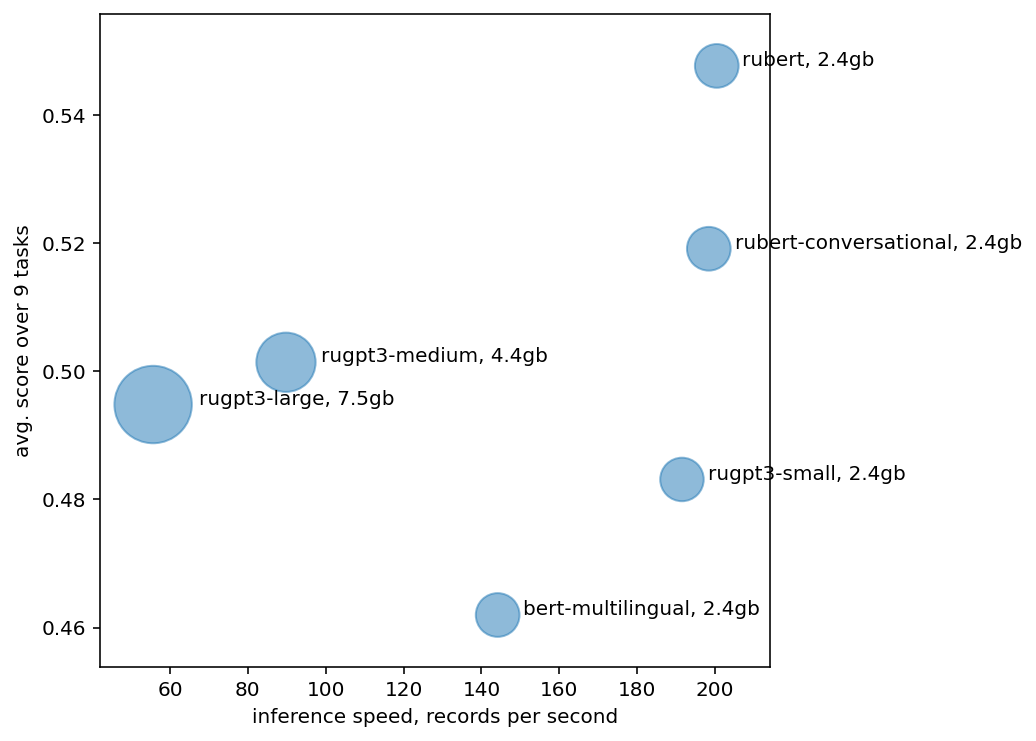

The new generation of pre-trained NLP models pushes the state of the art (SOTA) to new limits, but at the cost of computational resources, to the point that their use in real production environments is often prohibitively expensive. We tackle this problem by evaluating not only the standard quality metrics on downstream tasks but also the memory footprint and inference time. We present MOROCCO, a framework to compare language models compatible with the `jiant` environment, which supports over 50 NLU tasks, including the SuperGLUE benchmark and multiple probing suites. We demonstrate its applicability on two GLUE-like suites in different languages.
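To illustrate what measuring inference time and memory footprint alongside task quality can look like, here is a minimal sketch. It is not MOROCCO's implementation or API: `profile_model` and `toy_predict` are hypothetical names, the "model" is a stand-in function, and `tracemalloc` only tracks Python heap allocations, so it does not capture GPU memory or memory allocated by native libraries.

```python
import time
import tracemalloc
from statistics import median


def profile_model(predict, batches, warmup=1):
    """Time `predict` on each batch and record peak Python heap usage.

    `predict` is any callable taking one batch (hypothetical interface).
    """
    # Warm-up calls are excluded from the measurements.
    for batch in batches[:warmup]:
        predict(batch)

    tracemalloc.start()
    latencies = []
    for batch in batches:
        start = time.perf_counter()
        predict(batch)
        latencies.append(time.perf_counter() - start)
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    return {
        "median_latency_s": median(latencies),
        "peak_bytes": peak_bytes,
        "n_batches": len(latencies),
    }


def toy_predict(batch):
    # Stand-in for a real model: labels each text by word-count parity.
    return [len(text.split()) % 2 for text in batch]


result = profile_model(toy_predict, [["a b", "c"], ["d e f"], ["g"]])
print(result)
```

Reporting the median rather than the mean makes the latency figure less sensitive to occasional slow calls. A real comparison would also fix the hardware and batch size across models.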

GLUE

SuperGLUE