Neural Architecture Design for GPU-Efficient Networks

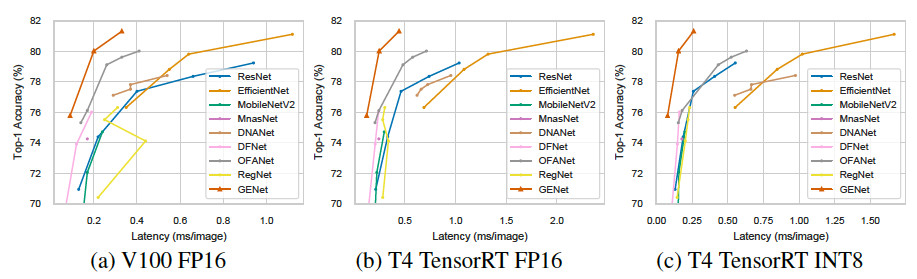

Many mission-critical systems are based on GPU for inference. It requires not only high recognition accuracy but also low latency in responding time. Although many studies are devoted to optimizing the structure of deep models for efficient inference, most of them do not leverage the architecture of \textbf{modern GPU} for fast inference, leading to suboptimal performance. To address this issue, we propose a general principle for designing GPU-efficient networks based on extensive empirical studies. This design principle enables us to search for GPU-efficient network structures effectively by a simple and lightweight method as opposed to most Neural Architecture Search (NAS) methods that are complicated and computationally expensive. Based on the proposed framework, we design a family of GPU-Efficient Networks, or GENets in short. We did extensive evaluations on multiple GPU platforms and inference engines. While achieving $\geq 81.3\%$ top-1 accuracy on ImageNet, GENet is up to $6.4$ times faster than EfficienNet on GPU. It also outperforms most state-of-the-art models that are more efficient than EfficientNet in high precision regimes. Our source code and pre-trained models are available from \url{https://github.com/idstcv/GPU-Efficient-Networks}.

PDF Abstract

ImageNet

ImageNet