Not Just a Black Box: Learning Important Features Through Propagating Activation Differences

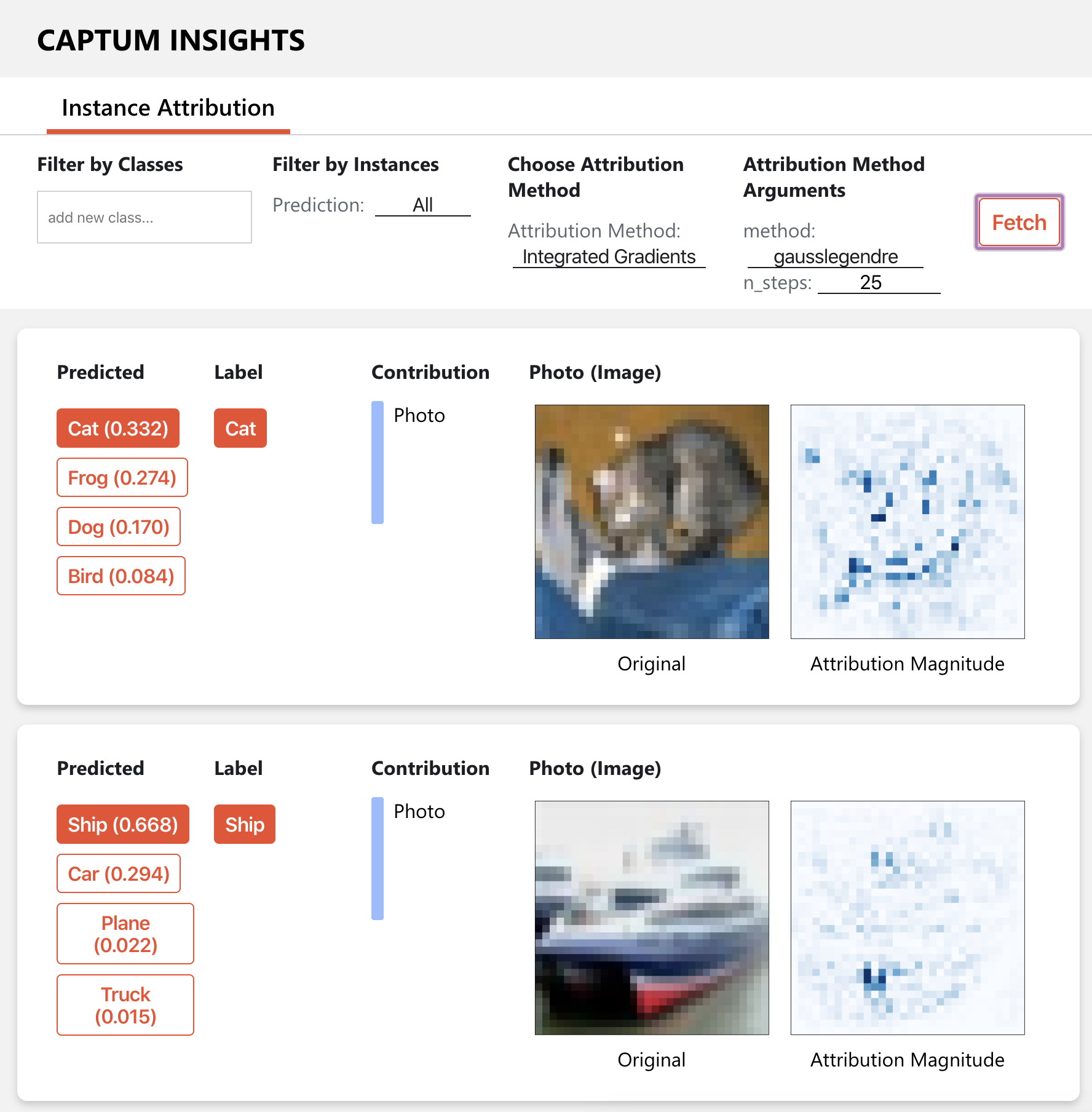

Note: This paper describes an older version of DeepLIFT. See https://arxiv.org/abs/1704.02685 for the newer version. Original abstract follows: The purported "black box" nature of neural networks is a barrier to adoption in applications where interpretability is essential. Here we present DeepLIFT (Learning Important FeaTures), an efficient and effective method for computing importance scores in a neural network. DeepLIFT compares the activation of each neuron to its 'reference activation' and assigns contribution scores according to the difference. We apply DeepLIFT to models trained on natural images and genomic data, and show significant advantages over gradient-based methods.

PDF Abstract