Object Captioning and Retrieval with Natural Language

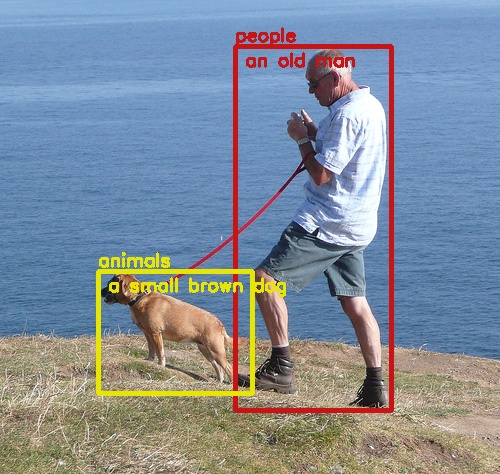

We address the problem of jointly learning vision and language to understand objects in a fine-grained manner. The key idea of our approach is to use object descriptions to provide a detailed understanding of each object. Based on this idea, we propose two new architectures for two related problems: object captioning and natural language-based object retrieval. In object captioning, the goal is to simultaneously detect an object and generate its associated description; in object retrieval, the goal is to localize an object given a natural language query. We demonstrate that both problems can be solved effectively using hybrid end-to-end CNN-LSTM networks. Experimental results on our new, challenging dataset show that our methods outperform recent methods by a fair margin while providing a detailed understanding of the object and fast inference. The source code will be made available.
