OGB-LSC: A Large-Scale Challenge for Machine Learning on Graphs

Enabling effective and efficient machine learning (ML) over large-scale graph data (e.g., graphs with billions of edges) can have a great impact on both industrial and scientific applications. However, existing efforts to advance large-scale graph ML have been largely limited by the lack of a suitable public benchmark. Here we present OGB Large-Scale Challenge (OGB-LSC), a collection of three real-world datasets for facilitating the advancements in large-scale graph ML. The OGB-LSC datasets are orders of magnitude larger than existing ones, covering three core graph learning tasks -- link prediction, graph regression, and node classification. Furthermore, we provide dedicated baseline experiments, scaling up expressive graph ML models to the massive datasets. We show that expressive models significantly outperform simple scalable baselines, indicating an opportunity for dedicated efforts to further improve graph ML at scale. Moreover, OGB-LSC datasets were deployed at ACM KDD Cup 2021 and attracted more than 500 team registrations globally, during which significant performance improvements were made by a variety of innovative techniques. We summarize the common techniques used by the winning solutions and highlight the current best practices in large-scale graph ML. Finally, we describe how we have updated the datasets after the KDD Cup to further facilitate research advances. The OGB-LSC datasets, baseline code, and all the information about the KDD Cup are available at https://ogb.stanford.edu/docs/lsc/ .

PDF Abstract

OGB-LSC

OGB-LSC

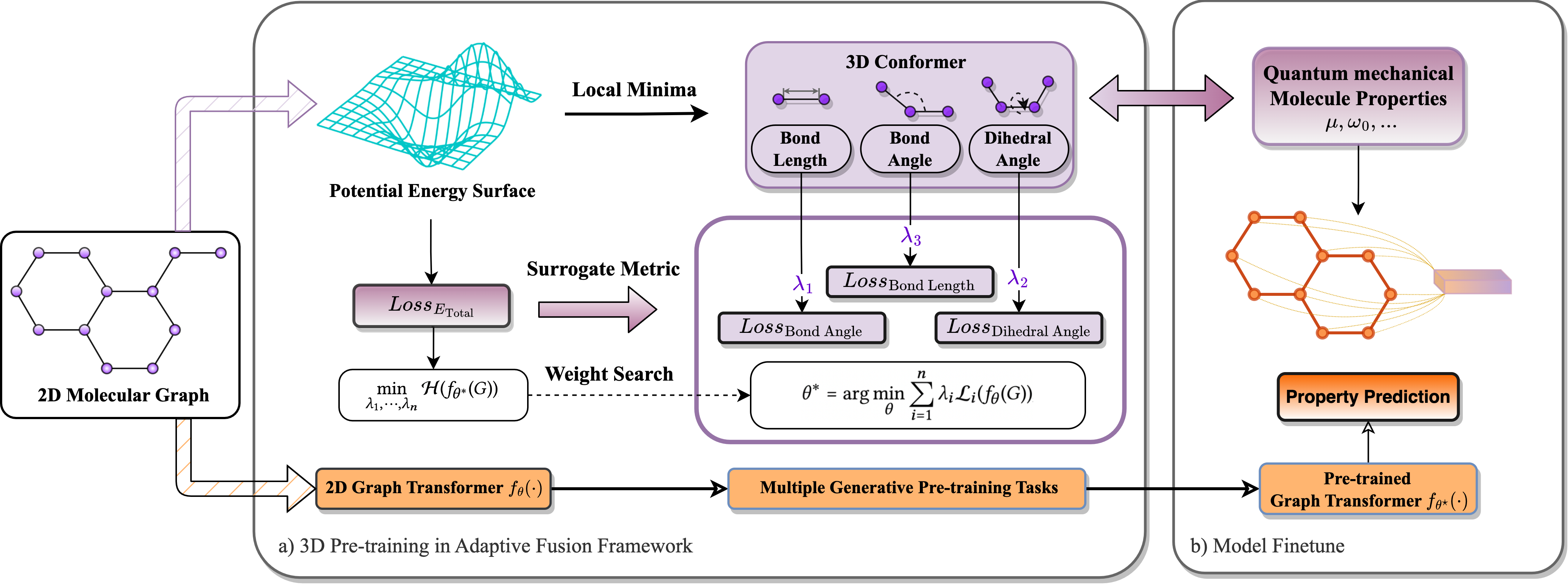

PCQM4Mv2-LSC

PCQM4Mv2-LSC

OGB

OGB