On the Art and Science of Machine Learning Explanations

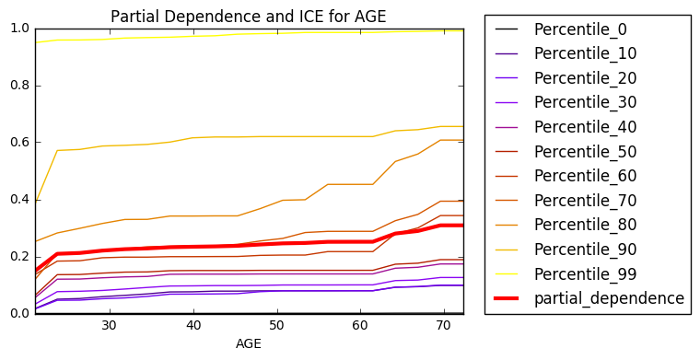

This text discusses several popular explanatory methods that go beyond the error measurements and plots traditionally used to assess machine learning models. Some of the explanatory methods are accepted tools of the trade while others are rigorously derived and backed by long-standing theory. The methods covered are decision tree surrogate models, individual conditional expectation (ICE) plots, local interpretable model-agnostic explanations (LIME), partial dependence plots, and Shapley explanations; they vary in scope, fidelity, and suitable application domain. Along with descriptions of these methods, this text presents real-world usage recommendations supported by a use case and public, in-depth software examples for reproducibility.
