Physical invariance in neural networks for subgrid-scale scalar flux modeling

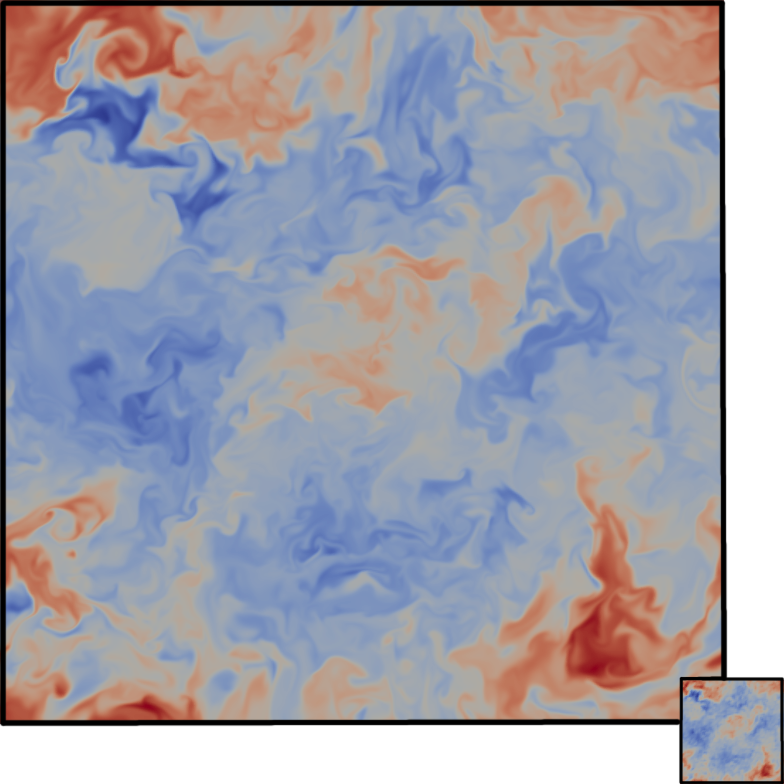

In this paper we present a new strategy to model the subgrid-scale scalar flux in a three-dimensional turbulent incompressible flow using physics-informed neural networks (NNs). When trained on direct numerical simulation (DNS) data, state-of-the-art neural networks, such as convolutional neural networks, may not preserve well-known physical priors, which in turn calls into question their applicability to real case studies. To address this issue, we investigate incorporating hard and soft constraints into the model, based on classical transformation invariances and symmetries derived from physical laws. Using simulation-based experiments, we show that the proposed transformation-invariant NN model outperforms both purely data-driven NN models and parametric state-of-the-art subgrid-scale models. The considered invariances act as regularizers on physical metrics during the a priori evaluation and constrain the distribution tails of the predicted subgrid-scale term to be closer to those of the DNS. They also increase the stability and performance of the model when it is used as a surrogate during a large-eddy simulation. Moreover, the transformation-invariant NN is shown to generalize to regimes that were not seen during the training phase.
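To make the distinction between soft and hard constraints concrete, the following is a minimal NumPy sketch and not the authors' implementation. It assumes one example invariance, namely that the subgrid-scale term scales linearly with the transported scalar, which follows from the scalar transport equation being linear in the scalar. The names `toy_model`, `scaling_penalty`, and `with_hard_scaling` are hypothetical, and `toy_model` is a deliberately nonlinear stand-in for a trained network.

```python
import numpy as np

def toy_model(u, phi):
    """Hypothetical stand-in for a trained NN; nonlinear in phi on purpose."""
    return np.tanh(phi) * u[0] + 0.1 * phi**2

def scaling_penalty(model, u, phi, lam):
    """Soft constraint: mean squared violation of
    model(u, lam * phi) == lam * model(u, phi).
    In training, a term like this could be added to the loss."""
    residual = model(u, lam * phi) - lam * model(u, phi)
    return float(np.mean(residual**2))

def with_hard_scaling(model):
    """Hard constraint: normalize phi before the model and rescale the output,
    so positive rescalings of phi are respected by construction.
    Assumes phi is not identically zero."""
    def wrapped(u, phi):
        norm = np.sqrt(np.mean(phi**2))
        return norm * model(u, phi / norm)
    return wrapped

rng = np.random.default_rng(0)
u = rng.standard_normal((3, 8, 8, 8))   # three velocity components on a small grid
phi = rng.standard_normal((8, 8, 8))    # transported scalar on the same grid

soft_violation = scaling_penalty(toy_model, u, phi, lam=2.0)
hard_violation = scaling_penalty(with_hard_scaling(toy_model), u, phi, lam=2.0)
print(soft_violation, hard_violation)
```

In this sketch the unconstrained model violates the scaling property, so its penalty is nonzero and could only be reduced through training, whereas the wrapped model satisfies the property by construction regardless of its weights.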
