PoP-Net: Pose over Parts Network for Multi-Person 3D Pose Estimation from a Depth Image

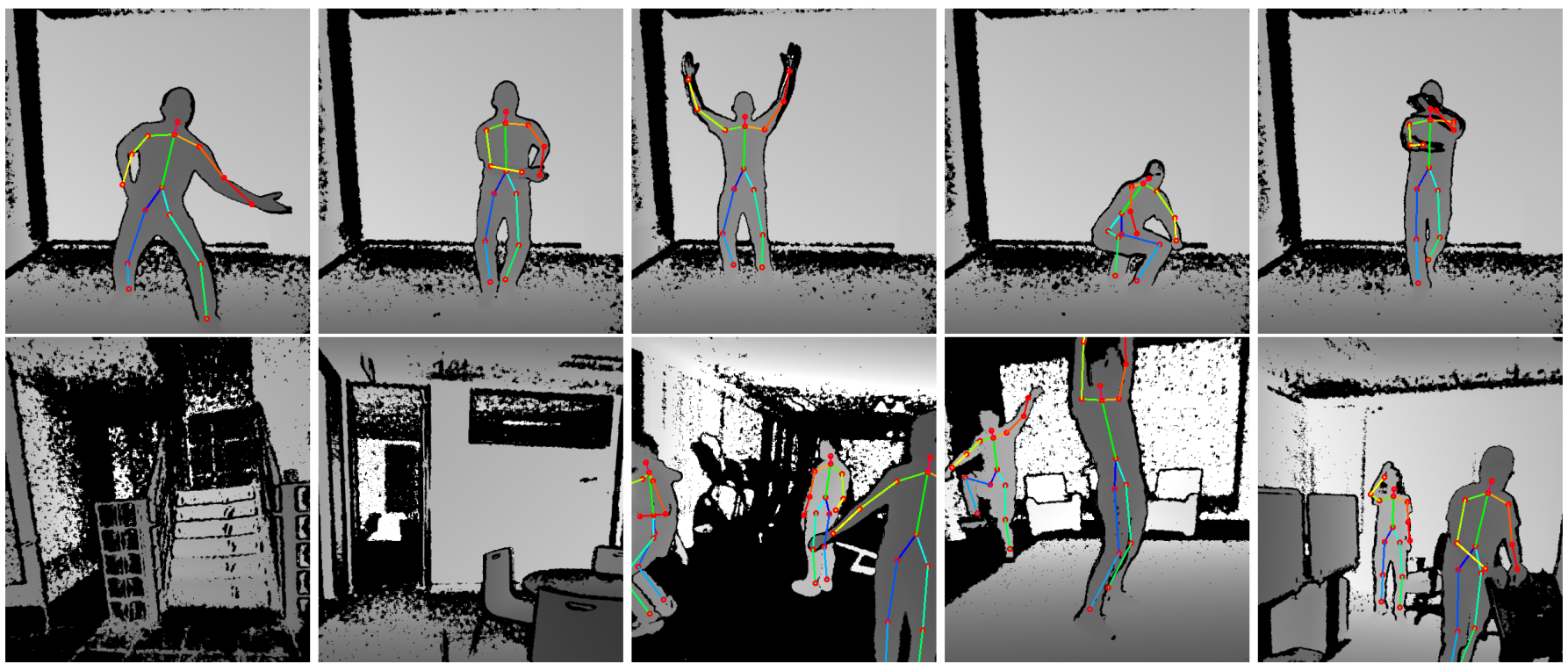

This paper proposes PoP-Net, a real-time method that predicts multi-person 3D poses from a depth image. PoP-Net learns to predict bottom-up part representations and top-down global poses in a single shot. Specifically, a new part-level representation, called the Truncated Part Displacement Field (TPDF), is introduced, which enables an explicit fusion process that unifies the advantages of bottom-up part detection and global pose detection. In addition, an effective mode selection scheme automatically resolves conflicts between global pose and part detections. Finally, because high-quality depth datasets for developing multi-person 3D pose estimation are lacking, we introduce the Multi-Person 3D Human Pose Dataset (MP-3DHP) as a new benchmark. MP-3DHP is designed to enable effective multi-person and background data augmentation in model training, and to evaluate 3D human pose estimators under uncontrolled multi-person scenarios. We show that PoP-Net achieves state-of-the-art results both on MP-3DHP and on the widely used ITOP dataset, and has significant advantages in efficiency for multi-person processing. To demonstrate one application of our algorithm pipeline, we also show results of virtual avatars driven by the computed 3D joint positions. The MP-3DHP dataset and the evaluation code are available at: https://github.com/oppo-us-research/PoP-Net.
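The abstract names the Truncated Part Displacement Field but does not define it. As a rough illustration only, the sketch below assumes one plausible reading: for a single part type, each pixel within a fixed radius of a part stores the 2D displacement to its nearest part, and pixels outside that radius are left empty. The function name `truncated_displacement_field`, the `radius` parameter, and the separate validity mask are hypothetical and are not taken from the paper or its released code.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def truncated_displacement_field(
    height: int, width: int, parts: List[Point], radius: float
) -> Tuple[List[List[Point]], List[List[bool]]]:
    """Build a toy displacement field for one part type (assumed definition).

    field[y][x] is (dx, dy) from pixel (x, y) to its nearest part, but only
    when that part lies within `radius`; otherwise it stays (0.0, 0.0).
    mask[y][x] records whether the pixel is inside the truncation range, so a
    pixel lying exactly on a part is not confused with an empty pixel.
    """
    field = [[(0.0, 0.0) for _ in range(width)] for _ in range(height)]
    mask = [[False for _ in range(width)] for _ in range(height)]
    for y in range(height):
        for x in range(width):
            best_dist = radius  # truncation: ignore parts farther than this
            for px, py in parts:
                dist = math.hypot(px - x, py - y)
                if dist <= best_dist:
                    best_dist = dist
                    field[y][x] = (px - x, py - y)
                    mask[y][x] = True
    return field, mask


if __name__ == "__main__":
    field, mask = truncated_displacement_field(5, 5, [(2.0, 2.0)], radius=1.5)
    print(field[2][1], mask[2][1])  # pixel one step left of the part
    print(field[0][0], mask[0][0])  # corner pixel, outside the radius
```

Under this assumed definition, every pixel inside the truncation range points at a candidate part location, which is the kind of local evidence that could be fused with a coarser global pose estimate.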

MP-3DHP: Multi-Person 3D Human Pose Dataset

ITOP
