Re-evaluating Continual Learning Scenarios: A Categorization and Case for Strong Baselines

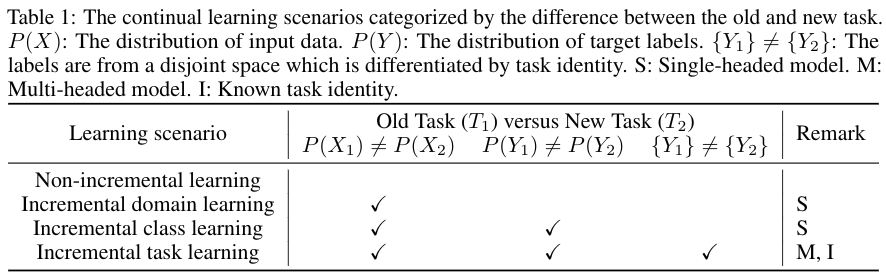

Continual learning has received a great deal of attention recently with several approaches being proposed. However, evaluations involve a diverse set of scenarios making meaningful comparison difficult. This work provides a systematic categorization of the scenarios and evaluates them within a consistent framework including strong baselines and state-of-the-art methods. The results provide an understanding of the relative difficulty of the scenarios and that simple baselines (Adagrad, L2 regularization, and naive rehearsal strategies) can surprisingly achieve similar performance to current mainstream methods. We conclude with several suggestions for creating harder evaluation scenarios and future research directions. The code is available at https://github.com/GT-RIPL/Continual-Learning-Benchmark

PDF Abstract

MNIST

MNIST

Permuted MNIST

Permuted MNIST