Robust Learning with Jacobian Regularization

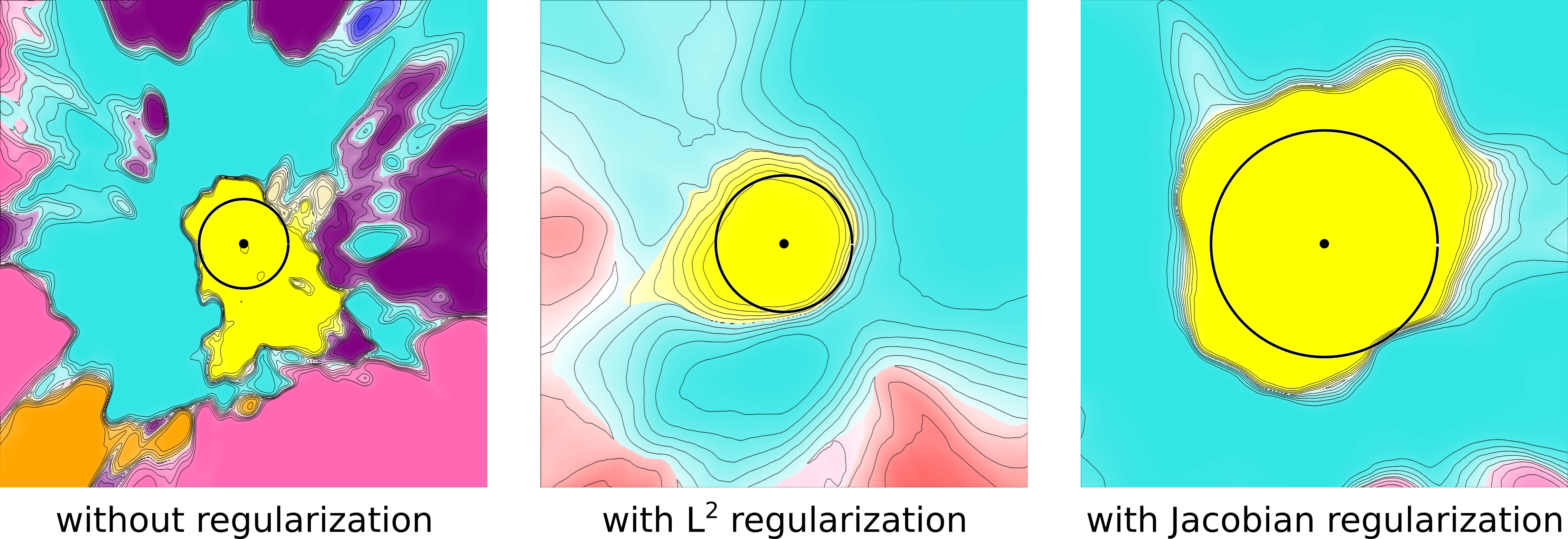

The design of reliable systems must guarantee stability against input perturbations. In machine learning, such a guarantee entails preventing overfitting and ensuring the robustness of models against corruption of input data. To maximize stability, we analyze and develop a computationally efficient implementation of Jacobian regularization that increases the classification margins of neural networks. The stabilizing effect of the Jacobian regularizer leads to significant improvements in robustness, as measured against both random and adversarial input perturbations, without severely degrading generalization on clean data.
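The abstract does not say how the regularizer is made cheap to compute. The sketch below is a minimal illustration under stated assumptions, not the authors' code. It assumes the regularizer is the squared Frobenius norm of the input-output Jacobian J = ∂z/∂x (logits z, input x), estimated with random projections via the identity ||J||_F^2 = C · E_v ||vᵀJ||², where v is uniform on the unit sphere in R^C. Each projection then costs one backward pass (a vector-Jacobian product) instead of C passes for the full Jacobian. The two-layer tanh network, all function names, and the λ/2 weighting are hypothetical choices for illustration.

```python
import numpy as np


def logits_and_jacobian(x, W1, b1, W2, b2):
    """Toy (hypothetical) network z = W2 @ tanh(W1 @ x + b1) + b2.

    Returns the logits z, shape (C,), and the exact input-output
    Jacobian J = dz/dx, shape (C, I), for comparison with the estimator.
    """
    h = np.tanh(W1 @ x + b1)
    z = W2 @ h + b2
    # Chain rule: dz/dx = W2 @ diag(1 - h**2) @ W1
    J = W2 @ ((1.0 - h ** 2)[:, None] * W1)
    return z, J


def vjp(x, W1, b1, W2, v):
    """Vector-Jacobian product v^T J, computed the way backprop would:
    pull v back through W2, the tanh derivative, then W1.
    Costs one backward pass and never forms J explicitly."""
    h = np.tanh(W1 @ x + b1)
    g_h = W2.T @ v                # gradient of v.z w.r.t. hidden activations
    g_pre = g_h * (1.0 - h ** 2)  # through the tanh nonlinearity
    return W1.T @ g_pre           # gradient of v.z w.r.t. the input x


def jacobian_frob_sq_estimate(x, W1, b1, W2, n_proj, rng):
    """Unbiased estimate of ||J||_F^2 using ||J||_F^2 = C * E_v ||v^T J||^2,
    with v uniform on the unit sphere in R^C (so E[v v^T] = I / C)."""
    C = W2.shape[0]
    total = 0.0
    for _ in range(n_proj):
        v = rng.standard_normal(C)
        v /= np.linalg.norm(v)  # normalized Gaussian = uniform direction
        total += C * np.sum(vjp(x, W1, b1, W2, v) ** 2)
    return total / n_proj


def regularized_loss(x, y, W1, b1, W2, b2, lam, n_proj, rng):
    """Cross-entropy on label y plus (lam / 2) * estimated ||J||_F^2.
    The lam / 2 weighting is an assumed convention."""
    z, _ = logits_and_jacobian(x, W1, b1, W2, b2)
    m = z.max()
    ce = m + np.log(np.sum(np.exp(z - m))) - z[y]  # numerically stable log-softmax
    return ce + 0.5 * lam * jacobian_frob_sq_estimate(x, W1, b1, W2, n_proj, rng)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    I, H, C = 8, 16, 10
    W1 = rng.standard_normal((H, I)) / np.sqrt(I)
    b1 = np.zeros(H)
    W2 = rng.standard_normal((C, H)) / np.sqrt(H)
    b2 = np.zeros(C)
    x = rng.standard_normal(I)

    _, J = logits_and_jacobian(x, W1, b1, W2, b2)
    exact = np.sum(J ** 2)
    approx = jacobian_frob_sq_estimate(x, W1, b1, W2, n_proj=2000, rng=rng)
    print(f"exact ||J||_F^2 = {exact:.4f}, estimate = {approx:.4f}")
    print("loss:", regularized_loss(x, 3, W1, b1, W2, b2, lam=0.01, n_proj=1, rng=rng))
```

The number of projections `n_proj` trades estimator variance against compute. Actual training would also differentiate this loss with respect to the weights (double backpropagation), which an autodiff framework handles. NumPy is used here only to keep the estimator itself visible.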

ICLR 2020

Datasets

CIFAR-10

ImageNet

MNIST

USPS