SC2 Benchmark: Supervised Compression for Split Computing

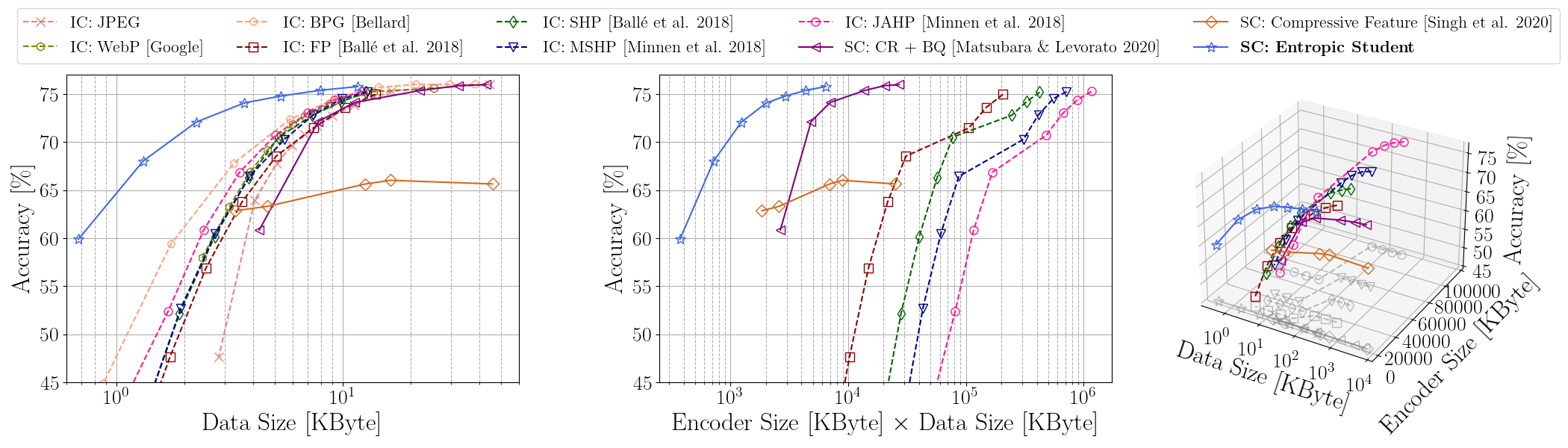

With the increasing demand for deep learning models on mobile devices, splitting neural network computation between the device and a more powerful edge server has become an attractive solution. However, existing split computing approaches often underperform compared to a naive baseline of remote computation on compressed data. Recent studies propose learning compressed representations that contain more relevant information for supervised downstream tasks, showing improved tradeoffs between compressed data size and supervised performance. However, existing evaluation metrics only provide an incomplete picture of split computing. This study introduces supervised compression for split computing (SC2) and proposes new evaluation criteria: minimizing computation on the mobile device, minimizing transmitted data size, and maximizing model accuracy. We conduct a comprehensive benchmark study using 10 baseline methods, three computer vision tasks, and over 180 trained models, and discuss various aspects of SC2. We also release sc2bench, a Python package for future research on SC2. Our proposed metrics and package will help researchers better understand the tradeoffs of supervised compression in split computing.

PDF Abstract

ImageNet

ImageNet

MS COCO

MS COCO

PASCAL VOC

PASCAL VOC