Self-Paced Deep Regression Forests with Consideration of Ranking Fairness

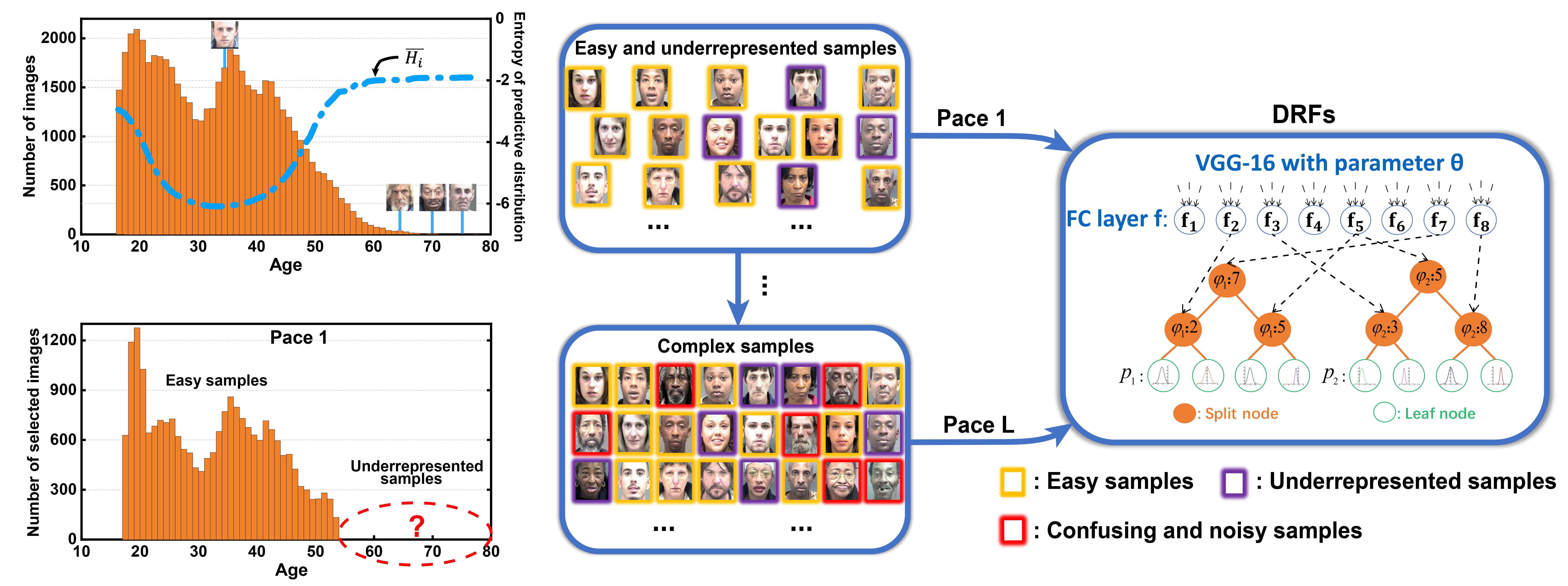

Deep discriminative models (DDMs), e.g., deep regression forests and deep decision forests, have been extensively studied in recent years for problems such as facial age estimation and head pose estimation. Because well-labeled data that is free of noise and distribution imbalance is scarce, learning DDMs remains challenging. Existing methods usually tackle these challenges by learning more discriminative features or by re-weighting samples. We argue that learning DDMs gradually, from easy to hard, is more reasonable, for two reasons. First, it is more consistent with the cognitive process of human beings. Second, noisy and underrepresented examples can be distinguished by virtue of previously learned knowledge. We therefore resort to a gradual learning strategy: self-paced learning (SPL). A natural question then arises: can SPL lead DDMs to more robust and less biased solutions? To answer this question, this paper proposes a new SPL method for learning DDMs that puts easy and underrepresented examples first. This tackles the fundamental ranking and selection problem in SPL from a new perspective: fairness. Our idea is general and can be easily combined with a variety of DDMs. Extensive experimental results on three computer vision tasks, i.e., facial age estimation, head pose estimation, and gaze estimation, show that our method yields considerable improvements in both accuracy and fairness. Source code is available at https://github.com/learninginvision/SPU.
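To make the ranking-and-selection idea concrete, the following is a minimal sketch and not the paper's formulation. `self_paced_select` is the classic hard-threshold SPL rule (keep samples whose loss falls below a pace parameter). `fairness_aware_rank` is a hypothetical illustration of "easy and underrepresented examples first": it orders samples by loss plus a penalty proportional to how crowded their label bin is. The function names, the histogram-based frequency estimate, and the parameters `n_bins` and `gamma` are all assumptions introduced here; the authors' actual criterion is in the linked repository.

```python
import numpy as np


def self_paced_select(losses, lam):
    """Classic hard-threshold SPL: select samples whose loss is below lam."""
    losses = np.asarray(losses, dtype=float)
    return losses < lam


def fairness_aware_rank(losses, labels, n_bins=10, gamma=1.0):
    """Hypothetical ranking (an assumption, not the paper's rule).

    Samples with low loss come first, and samples whose label falls in a
    sparsely populated bin are moved earlier in the order.
    """
    losses = np.asarray(losses, dtype=float)
    labels = np.asarray(labels, dtype=float)

    # Estimate how common each label region is with a simple histogram.
    counts, edges = np.histogram(labels, bins=n_bins)

    # Map every label to its bin using the interior edges only, so the
    # maximum label lands in the last bin, as in np.histogram.
    bin_idx = np.clip(np.digitize(labels, edges[1:-1]), 0, n_bins - 1)
    freq = counts[bin_idx] / len(labels)

    # Lower score means selected earlier: easy (low loss) and rare (low freq).
    score = losses + gamma * freq
    return np.argsort(score, kind="stable")


if __name__ == "__main__":
    losses = [0.1, 0.1, 0.1, 0.1]
    ages = [20, 20, 20, 60]  # the label 60 is underrepresented
    print(fairness_aware_rank(losses, ages, n_bins=2))
    print(self_paced_select([0.2, 0.9, 0.5], lam=0.6))
```

In a training loop, the pace parameter `lam` (or the number of top-ranked samples kept) would typically grow over iterations so that harder examples are admitted gradually.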

MORPH

MPIIGaze
