Self-Supervised Classification Network

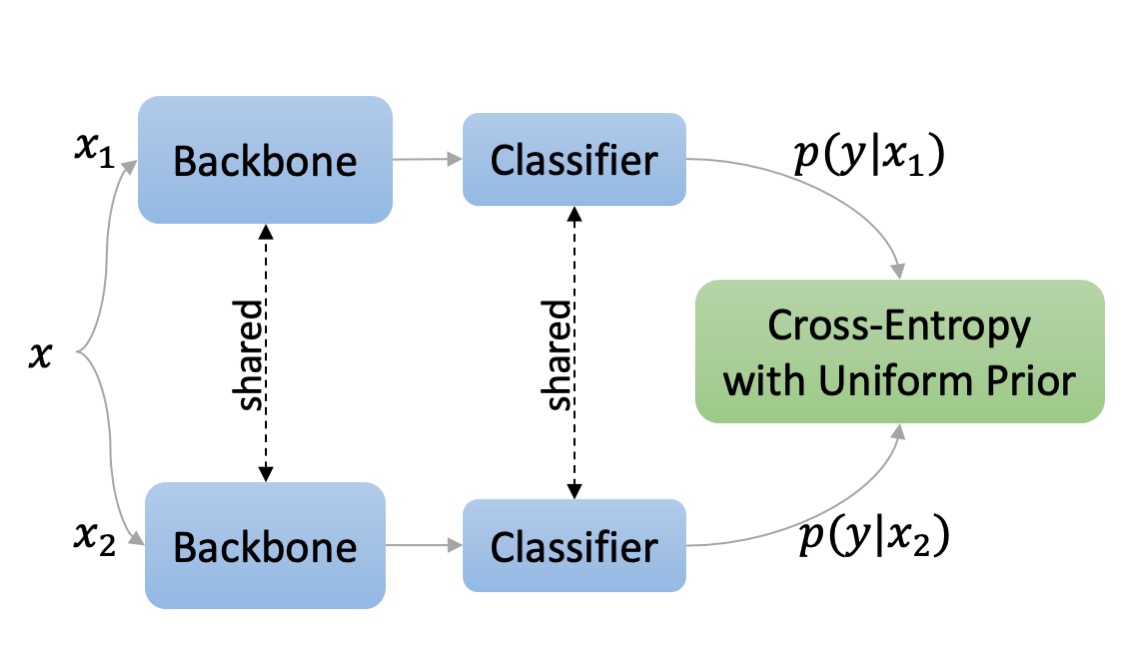

We present Self-Classifier -- a novel self-supervised end-to-end classification learning approach. Self-Classifier learns labels and representations simultaneously in a single-stage end-to-end manner by optimizing for same-class prediction of two augmented views of the same sample. To guarantee non-degenerate solutions (i.e., to rule out solutions where all samples are assigned to the same class), we propose a mathematically motivated variant of the cross-entropy loss that asserts a uniform prior on the predicted labels. In our theoretical analysis, we prove that degenerate solutions are not in the set of optimal solutions of our approach. Self-Classifier is simple to implement and scalable. Unlike other popular unsupervised classification and contrastive representation learning approaches, it does not require any form of pre-training, expectation-maximization, pseudo-labeling, external clustering, a second network, stop-gradient operation, or negative pairs. Despite its simplicity, our approach sets a new state of the art for unsupervised classification of ImageNet and achieves results comparable to the state of the art for unsupervised representation learning. Code is available at https://github.com/elad-amrani/self-classifier.
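The abstract does not spell out the loss, so the following is only a minimal NumPy sketch of one way a uniform prior could be asserted on the predicted labels via Bayes' rule. It is an illustration under stated assumptions, not the authors' implementation: the function names (`softmax`, `uniform_prior_ce`, `symmetric_loss`), the batch-level normalization, the `eps` constant, and the omission of any temperature parameters are all choices made here for clarity.

```python
import numpy as np

def softmax(z, axis):
    # Numerically stable softmax along the given axis.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def uniform_prior_ce(logits1, logits2, eps=1e-12):
    """Cross-entropy between two views with a uniform class prior (assumed form).

    logits1, logits2: arrays of shape (N, C) holding class logits for two
    augmented views of the same N samples.
    """
    n, c = logits1.shape
    p1 = softmax(logits1, axis=1)  # p(y | x1), each row sums to 1
    p2 = softmax(logits2, axis=1)  # p(y | x2), each row sums to 1

    # Target: p(x2 | y), obtained by normalizing each class column over the batch.
    target = p2 / (p2.sum(axis=0, keepdims=True) + eps)

    # Prediction: p(y | x1) reweighted by Bayes' rule, assuming p(y) = 1/C
    # and p(x) = 1/N, which gives the factor N/C.
    pred = (n / c) * p1 / (p1.sum(axis=0, keepdims=True) + eps)

    # Average over classes; each target column sums to (approximately) 1.
    return -(target * np.log(pred + eps)).sum() / c

def symmetric_loss(logits1, logits2):
    # Symmetrize so that neither view is privileged.
    return 0.5 * (uniform_prior_ce(logits1, logits2)
                  + uniform_prior_ce(logits2, logits1))

# Toy comparison with N = 8 samples and C = 4 classes.
degenerate = np.tile(np.array([5.0, 0.0, 0.0, 0.0]), (8, 1))  # every sample -> class 0
labels = np.array([0, 0, 1, 1, 2, 2, 3, 3])
balanced = 20.0 * np.eye(4)[labels]                           # confident, evenly spread

loss_degenerate = symmetric_loss(degenerate, degenerate)
loss_balanced = symmetric_loss(balanced, balanced)
```

In this sketch, identical predictions for every sample make the reweighted prediction uniform, so the collapsed batch scores a loss of log C, while the confident and balanced assignment scores close to zero. That behavior is consistent with the abstract's claim that degenerate solutions are not optimal, although it is a property of this toy formulation rather than a reproduction of the paper's proof.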

ImageNet

MS COCO
