Self-Supervised GANs via Auxiliary Rotation Loss

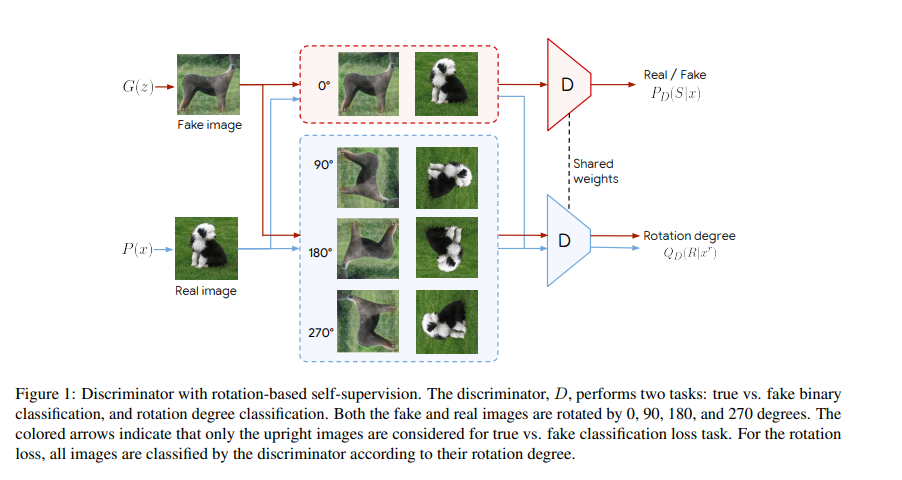

Conditional GANs are at the forefront of natural image synthesis. The main drawback of such models is the necessity for labeled data. In this work we exploit two popular unsupervised learning techniques, adversarial training and self-supervision, and take a step towards bridging the gap between conditional and unconditional GANs. In particular, we allow the networks to collaborate on the task of representation learning, while being adversarial with respect to the classic GAN game. The role of self-supervision is to encourage the discriminator to learn meaningful feature representations which are not forgotten during training. We test empirically both the quality of the learned image representations, and the quality of the synthesized images. Under the same conditions, the self-supervised GAN attains a similar performance to state-of-the-art conditional counterparts. Finally, we show that this approach to fully unsupervised learning can be scaled to attain an FID of 23.4 on unconditional ImageNet generation.

PDF Abstract CVPR 2019 PDF CVPR 2019 Abstract

CIFAR-10

CIFAR-10

CelebA-HQ

CelebA-HQ

LSUN

LSUN