Self-supervised Representation Learning Framework for Remote Physiological Measurement Using Spatiotemporal Augmentation Loss

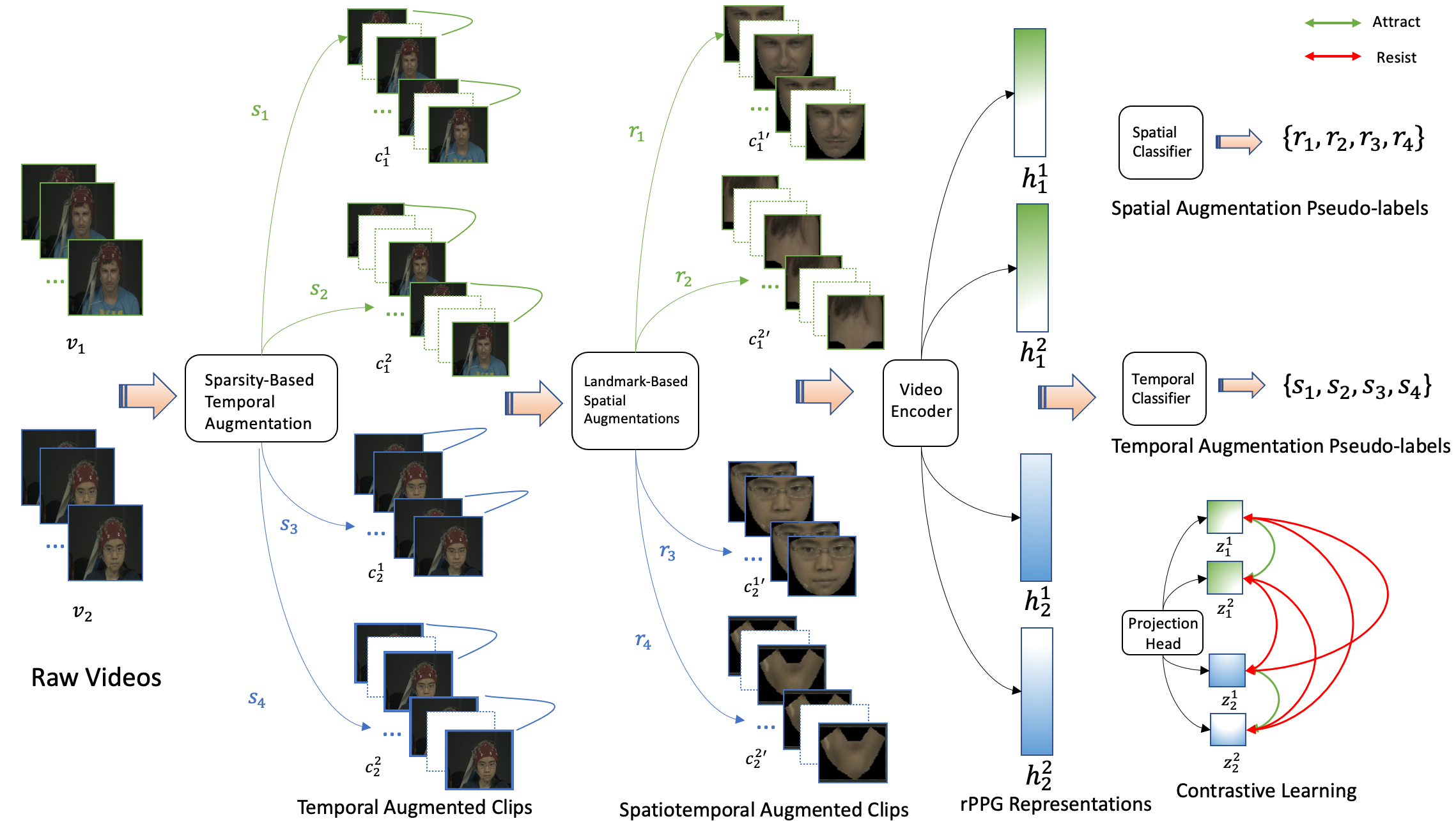

Recent advances in supervised deep learning methods are enabling remote measurement of photoplethysmography-based physiological signals from facial videos. The performance of these supervised methods, however, depends on the availability of large labelled datasets. Contrastive learning, a self-supervised approach, has recently achieved state-of-the-art performance in learning representative data features by maximising the mutual information between different augmented views. However, existing data augmentation techniques for contrastive learning are not designed to learn physiological signals from videos, and they often fail in the presence of complicated noise and of subtle, periodic colour or shape variations between video frames. To address these problems, we present a novel self-supervised spatiotemporal framework for remote physiological signal representation learning when labelled training data are scarce. First, we propose a landmark-based spatial augmentation that splits the face into several informative parts based on the Shafer dichromatic reflection model to characterise subtle skin colour fluctuations. We also formulate a sparsity-based temporal augmentation that exploits the Nyquist-Shannon sampling theorem to effectively capture periodic temporal changes by modelling physiological signal features. Furthermore, we introduce a constrained spatiotemporal loss that generates pseudo-labels for augmented video clips; it regulates the training process and handles complicated noise. We evaluated our framework on three public datasets, where it outperformed other self-supervised methods and achieved accuracy competitive with state-of-the-art supervised methods.
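The abstract does not specify the exact form of the sparsity-based temporal augmentation. The sketch below shows one plausible reading of how the Nyquist-Shannon sampling theorem can bound it. The frames of a clip are subsampled with a random stride, and the stride is capped so that the effective frame rate stays above twice the highest pulse frequency of interest. The 4 Hz upper bound (240 bpm) is an assumption, not a value from the paper. The function names `max_nyquist_stride`, `sparse_temporal_augment` and `dominant_frequency` are hypothetical helpers, not the authors' code.

```python
import numpy as np


def max_nyquist_stride(fps: float, f_max: float) -> int:
    """Largest integer frame stride whose effective rate fps/stride stays above 2 * f_max."""
    # Nyquist-Shannon: the sampling rate must exceed twice the highest frequency of interest.
    stride = int(np.floor(fps / (2.0 * f_max)))
    # If fps / (2 * f_max) is an exact integer, fps / stride equals the limit rather than exceeding it.
    if stride > 1 and fps / stride <= 2.0 * f_max:
        stride -= 1
    return max(stride, 1)


def sparse_temporal_augment(frames, fps: float, f_max: float = 4.0, rng=None):
    """Hypothetical augmentation: keep every `stride`-th frame, starting at a random offset.

    `frames` is any array indexed by time on its first axis (e.g. a T x H x W x C video).
    f_max = 4.0 Hz (240 bpm) is an assumed upper bound on heart rate.
    Returns the subsampled clip and its effective frame rate.
    """
    rng = np.random.default_rng() if rng is None else rng
    s_max = max_nyquist_stride(fps, f_max)
    stride = int(rng.integers(1, s_max + 1))  # upper bound is exclusive
    offset = int(rng.integers(0, stride))
    return frames[offset::stride], fps / stride


def dominant_frequency(signal, fps: float) -> float:
    """Frequency (Hz) of the largest non-DC peak in the magnitude spectrum."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()  # remove the DC component
    spectrum = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fps)
    return float(freqs[np.argmax(spectrum)])


if __name__ == "__main__":
    fps = 30.0
    t = np.arange(300) / fps               # 10 s of a 30 fps video
    pulse = np.sin(2 * np.pi * 1.2 * t)    # synthetic 72 bpm pulse trace
    clip, eff_fps = sparse_temporal_augment(pulse, fps, rng=np.random.default_rng(0))
    print(len(clip), eff_fps, dominant_frequency(clip, eff_fps))
```

For a 30 fps video and a 4 Hz bound, the largest admissible stride is 3, which gives an effective rate of 10 Hz. A stride of 4 would give 7.5 Hz, below the 8 Hz Nyquist rate. Under this assumption, the subsampled clip still shows the original pulse frequency in its spectrum, while its temporal appearance differs from the full-rate clip.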


UBFC-rPPG