SemanticStyleGAN: Learning Compositional Generative Priors for Controllable Image Synthesis and Editing

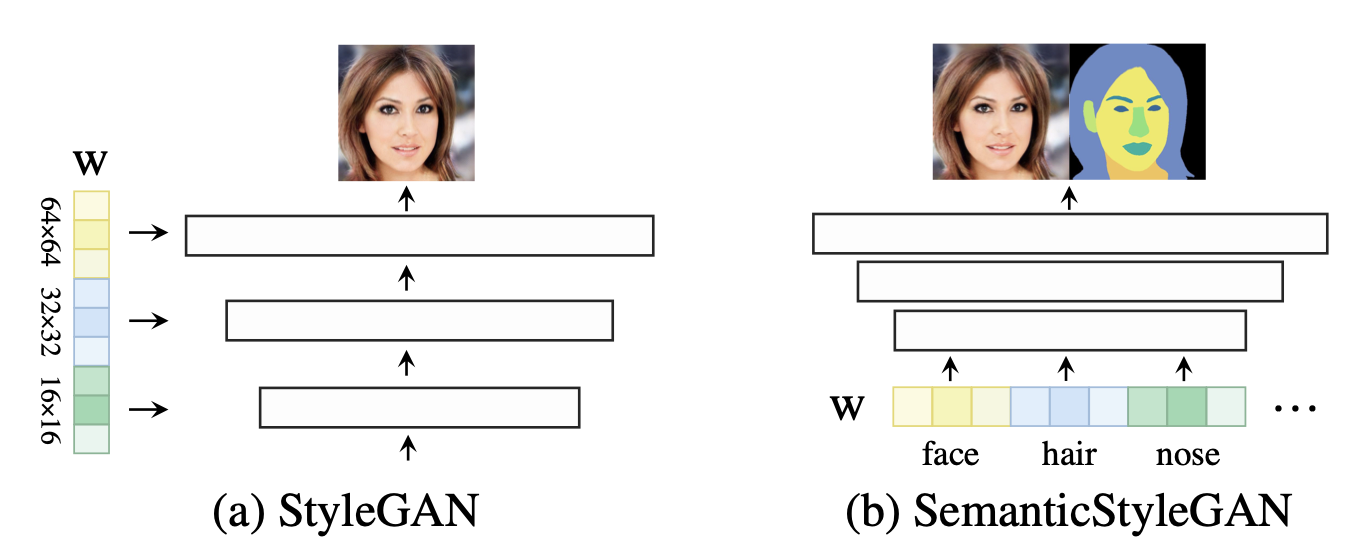

Recent studies have shown that StyleGANs provide promising prior models for downstream tasks in image synthesis and editing. However, since the latent codes of StyleGANs are designed to control global styles, it is hard to achieve fine-grained control over synthesized images. We present SemanticStyleGAN, in which a generator is trained to model local semantic parts separately and synthesizes images in a compositional way. The structure and texture of different local parts are controlled by corresponding latent codes. Experimental results demonstrate that our model provides strong disentanglement between different spatial areas. When combined with editing methods designed for StyleGANs, it achieves more fine-grained control when editing synthesized or real images. The model can also be extended to other domains via transfer learning. Thus, as a generic prior model with built-in disentanglement, it could facilitate the development of GAN-based applications and enable more downstream tasks.
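To make the idea of compositional synthesis concrete, the following is a minimal toy sketch and not the architecture described in the paper. It assumes that each semantic part has its own latent code, that a per-part generator (here a hypothetical random linear map) turns that code into a feature map and a logit map, and that the parts are blended with softmax masks. All names (`compose`, `feat_weights`, `logit_weights`) and sizes are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes (assumptions, not values from the paper).
NUM_PARTS, LATENT_DIM, H, W, C = 3, 8, 4, 4, 5

# Hypothetical per-part linear "generators" standing in for real networks.
feat_weights = rng.normal(size=(NUM_PARTS, LATENT_DIM, H * W * C))
logit_weights = rng.normal(size=(NUM_PARTS, LATENT_DIM, H * W))


def compose(codes):
    """Blend per-part feature maps into one fused feature map.

    codes: array of shape (NUM_PARTS, LATENT_DIM), one latent code per part.
    Returns (fused, masks) with shapes (H, W, C) and (NUM_PARTS, H, W).
    """
    # Each part maps its own code to a feature map and a logit map.
    feats = np.einsum("kd,kdn->kn", codes, feat_weights)
    feats = feats.reshape(NUM_PARTS, H, W, C)
    logits = np.einsum("kd,kdn->kn", codes, logit_weights)
    logits = logits.reshape(NUM_PARTS, H, W)

    # Softmax over the part axis gives one soft mask per part
    # (assumed blending rule for this sketch).
    logits = logits - logits.max(axis=0, keepdims=True)
    masks = np.exp(logits)
    masks /= masks.sum(axis=0, keepdims=True)

    # The fused map is the mask-weighted sum of the part feature maps.
    fused = (masks[..., None] * feats).sum(axis=0)
    return fused, masks


codes = rng.normal(size=(NUM_PARTS, LATENT_DIM))
fused, masks = compose(codes)

# Editing one part: resample only the code of part 1, keep the others.
edited_codes = codes.copy()
edited_codes[1] = rng.normal(size=LATENT_DIM)
edited_fused, edited_masks = compose(edited_codes)
```

In this toy version the masks are coupled through the softmax, so changing one part's code can still shift the other masks; the sketch only illustrates how separate codes feed a shared composition step, not the degree of disentanglement reported in the abstract.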

CVPR 2022

FFHQ

DeepFashion

CelebAMask-HQ

MetFaces
