Simple Recurrent Units for Highly Parallelizable Recurrence

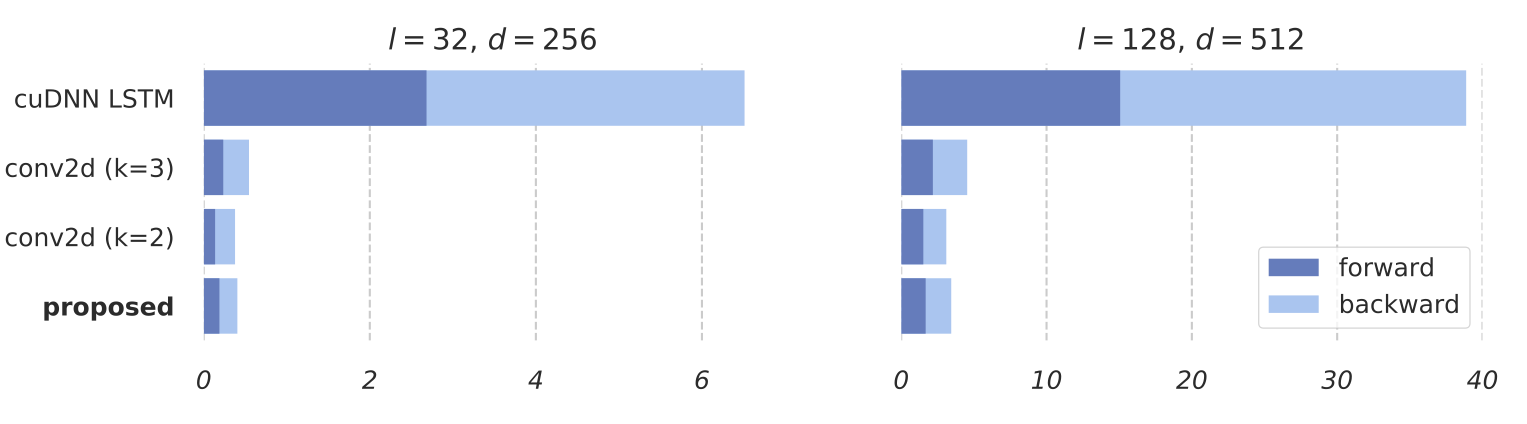

Common recurrent neural architectures scale poorly due to the intrinsic difficulty in parallelizing their state computations. In this work, we propose the Simple Recurrent Unit (SRU), a light recurrent unit that balances model capacity and scalability. SRU is designed to provide expressive recurrence, enable highly parallelized implementation, and comes with careful initialization to facilitate training of deep models. We demonstrate the effectiveness of SRU on multiple NLP tasks. SRU achieves 5--9x speed-up over cuDNN-optimized LSTM on classification and question answering datasets, and delivers stronger results than LSTM and convolutional models. We also obtain an average of 0.7 BLEU improvement over the Transformer model on translation by incorporating SRU into the architecture.

PDF Abstract EMNLP 2018 PDF EMNLP 2018 Abstract

SST

SST

SQuAD

SQuAD

MPQA Opinion Corpus

MPQA Opinion Corpus

WMT 2014

WMT 2014