Single Path One-Shot Neural Architecture Search with Uniform Sampling

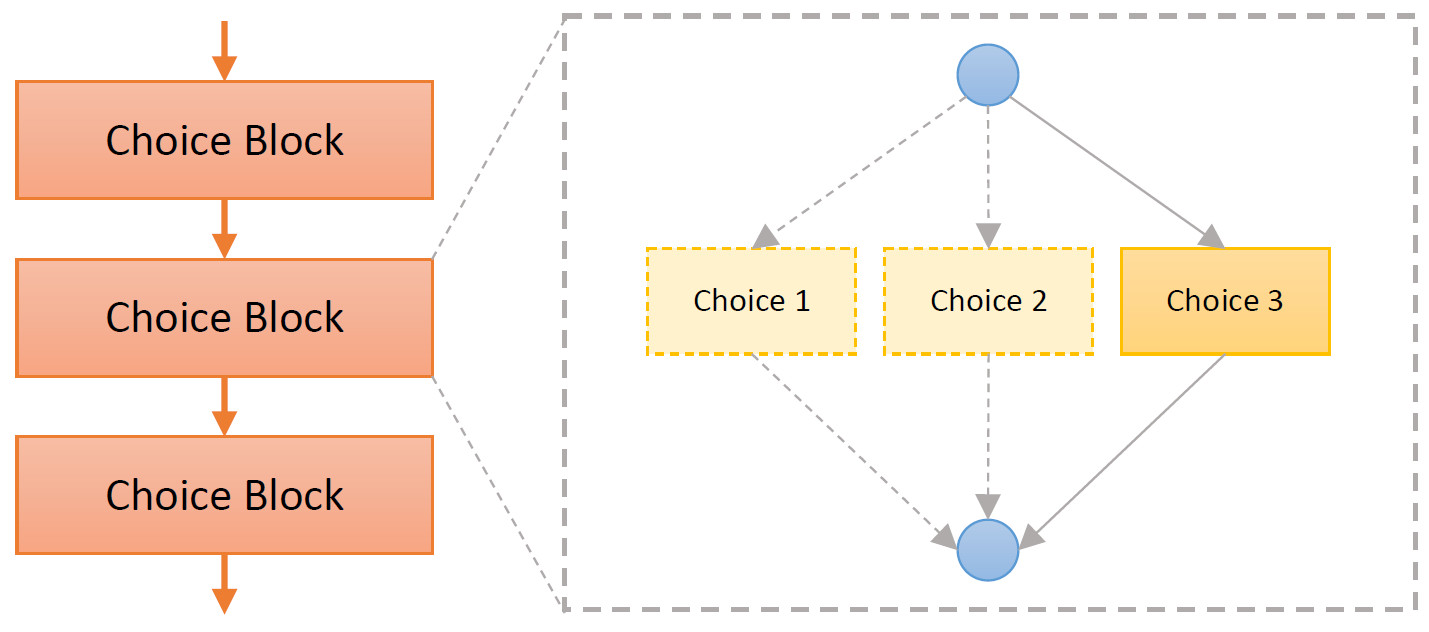

We revisit the one-shot Neural Architecture Search (NAS) paradigm and analyze its advantages over existing NAS approaches. Existing one-shot methods, however, are hard to train and not yet effective on large-scale datasets like ImageNet. This work proposes a Single Path One-Shot model to address the challenge in training. Our central idea is to construct a simplified supernet in which all architectures are single paths, so that the weight co-adaptation problem is alleviated. Training is performed by uniform path sampling, so all architectures (and their weights) are trained fully and equally. Comprehensive experiments verify that our approach is flexible and effective. It is easy to train and fast to search. It readily supports complex search spaces (e.g., building blocks, channels, mixed-precision quantization) and different search constraints (e.g., FLOPs, latency), which makes it convenient to use for various needs. It achieves state-of-the-art performance on the large-scale ImageNet dataset.
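The uniform path sampling idea can be illustrated with a small simulation. This is a minimal sketch and not the authors' implementation: the search space size (4 layers, 3 candidate blocks per layer) and the names `sample_single_path` and `simulate_training` are assumptions made for illustration, and no real network weights are trained. It only counts how often each candidate block would receive an update when one block per layer is drawn uniformly at every step.

```python
import random
from collections import Counter

# Hypothetical toy search space (not from the paper):
# 4 layers, each offering 3 candidate blocks.
NUM_LAYERS = 4
CHOICES_PER_LAYER = 3
NUM_STEPS = 30000


def sample_single_path(rng, num_layers=NUM_LAYERS, num_choices=CHOICES_PER_LAYER):
    """Pick one candidate block per layer, independently and uniformly."""
    return tuple(rng.randrange(num_choices) for _ in range(num_layers))


def simulate_training(num_steps, seed=0):
    """Count how often each (layer, choice) pair would receive a weight update."""
    rng = random.Random(seed)
    updates = Counter()
    for _ in range(num_steps):
        path = sample_single_path(rng)
        # In a real supernet, only the weights on `path` would take part
        # in the forward and backward pass for this step.
        for layer, choice in enumerate(path):
            updates[(layer, choice)] += 1
    return updates


updates = simulate_training(NUM_STEPS)
expected = NUM_STEPS / CHOICES_PER_LAYER
max_rel_dev = max(abs(count - expected) / expected for count in updates.values())
print(f"max relative deviation from equal updates: {max_rel_dev:.3f}")
```

Under these assumptions, every candidate block ends up with close to the same number of updates, which is the sense in which uniform sampling trains all single-path architectures "equally". A search constraint such as a FLOPs budget could be layered on top by rejecting sampled paths that exceed it, though that step is not shown here.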

ECCV 2020

Datasets

Results from the Paper

Ranked #88 on Neural Architecture Search on ImageNet (Accuracy metric)


ImageNet
