SmoothNets: Optimizing CNN architecture design for differentially private deep learning

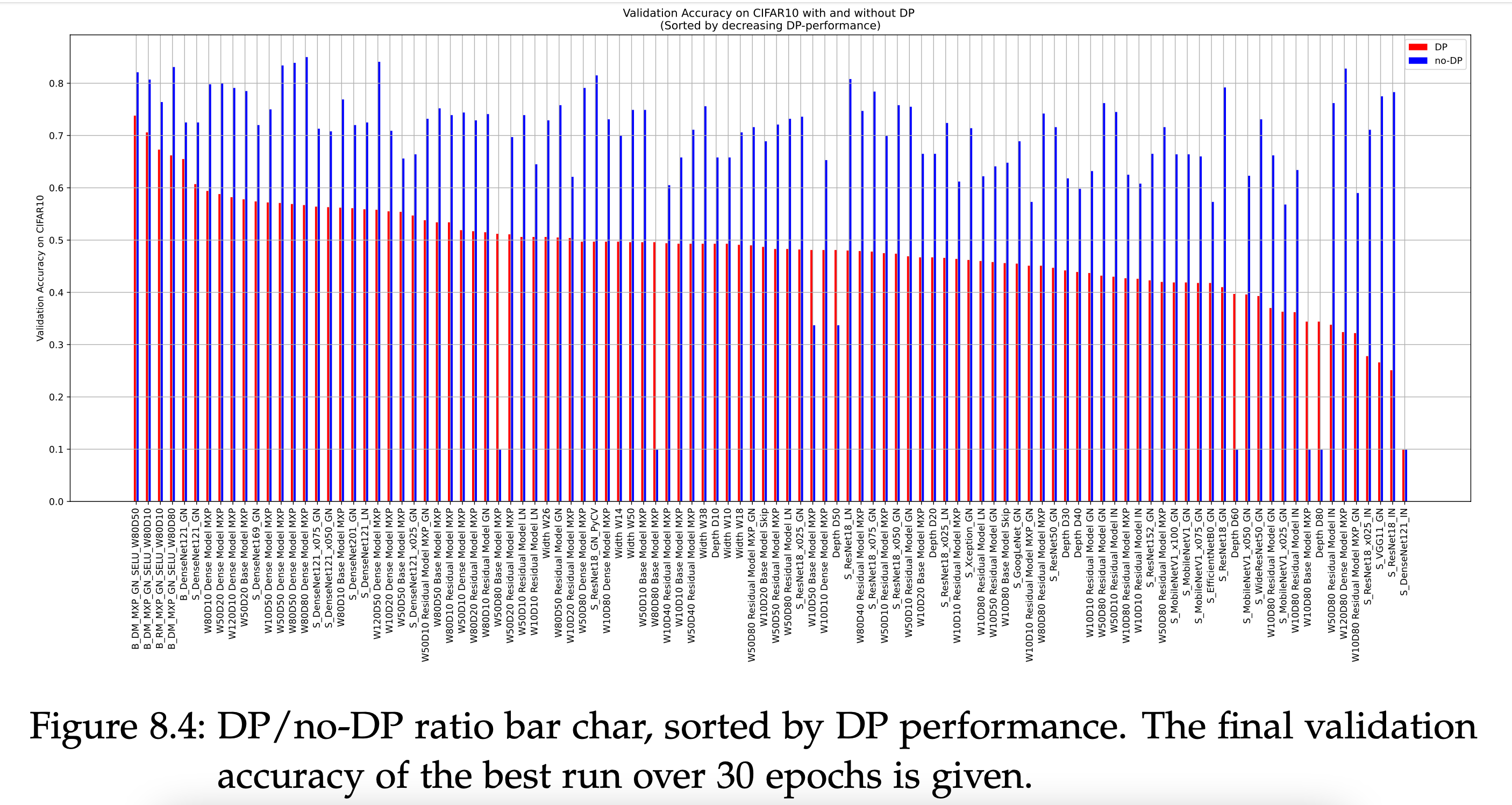

The arguably most widely employed algorithm to train deep neural networks with Differential Privacy is DPSGD, which requires clipping and noising of per-sample gradients. This introduces a reduction in model utility compared to non-private training. Empirically, it can be observed that this accuracy degradation is strongly dependent on the model architecture. We investigated this phenomenon and, by combining components which exhibit good individual performance, distilled a new model architecture termed SmoothNet, which is characterised by increased robustness to the challenges of DP-SGD training. Experimentally, we benchmark SmoothNet against standard architectures on two benchmark datasets and observe that our architecture outperforms others, reaching an accuracy of 73.5\% on CIFAR-10 at $\varepsilon=7.0$ and 69.2\% at $\varepsilon=7.0$ on ImageNette, a state-of-the-art result compared to prior architectural modifications for DP.

PDF Abstract

CIFAR-10

CIFAR-10

ImageNet

ImageNet

Imagenette

Imagenette