Taking a Deeper Look at Co-Salient Object Detection

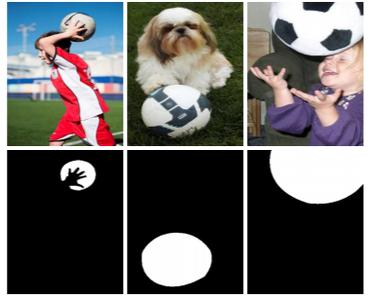

Co-salient object detection (CoSOD) is a newly emerging and rapidly growing branch of salient object detection (SOD) that aims to detect the co-occurring salient objects in multiple images. However, existing CoSOD datasets often suffer from a serious data bias: they assume that each group of images contains salient objects of similar visual appearance. This bias leads to idealized settings, and the effectiveness of models trained on existing datasets may be impaired in real-life situations, where the similarity is usually semantic or conceptual. To tackle this issue, we first collect a new high-quality dataset, named CoSOD3k, which contains 3,316 images divided into 160 groups with multi-level annotations, i.e., category, bounding box, object, and instance levels. CoSOD3k makes a significant leap in terms of diversity, difficulty, and scalability, benefiting related vision tasks. In addition, we comprehensively summarize 34 cutting-edge algorithms, benchmark 19 of them over four existing CoSOD datasets (MSRC, iCoSeg, Image Pair, and CoSal2015) and our CoSOD3k with a total of 61K images (largest scale), and report group-level performance analysis. Finally, we discuss the challenges and future work of CoSOD. Our study should give a strong boost to growth in the CoSOD community. The benchmark toolbox and results are available on our project page.

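| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Co-Salient Object Detection | CoSal2015 | CoEG-Net | MAE | 0.077 | #8 |
| Co-Salient Object Detection | CoSal2015 | CoEG-Net | S-measure | 0.836 | #8 |
| Co-Salient Object Detection | CoSal2015 | CoEG-Net | max F-measure | 0.832 | #8 |
| Co-Salient Object Detection | CoSal2015 | CoEG-Net | max E-measure | 0.882 | #8 |
| Co-Salient Object Detection | CoSal2015 | CoEG-Net | mean E-measure | 0.867 | #8 |
| Co-Salient Object Detection | CoSal2015 | CoEG-Net | mean F-measure | 0.827 | #7 |
| Co-Salient Object Detection | CoSOD3k | CoEG-Net | S-measure | 0.778 | #8 |
| Co-Salient Object Detection | CoSOD3k | CoEG-Net | max E-measure | 0.837 | #8 |
| Co-Salient Object Detection | CoSOD3k | CoEG-Net | max F-measure | 0.758 | #8 |
| Co-Salient Object Detection | CoSOD3k | CoEG-Net | MAE | 0.084 | #7 |
| Co-Salient Object Detection | CoSOD3k | CoEG-Net | mean E-measure | 0.817 | #8 |
| Co-Salient Object Detection | CoSOD3k | CoEG-Net | mean F-measure | 0.748 | #7 |
| Co-Salient Object Detection | iCoSeg | CoEG-Net | S-measure | 0.875 | #2 |
| Co-Salient Object Detection | iCoSeg | CoEG-Net | MAE | 0.060 | #2 |
| Co-Salient Object Detection | iCoSeg | CoEG-Net | max E-measure | 0.912 | #2 |
| Co-Salient Object Detection | iCoSeg | CoEG-Net | max F-measure | 0.876 | #2 |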
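For readers unfamiliar with the metrics reported above, the following is a minimal sketch of how MAE and max F-measure are commonly computed for a single saliency map, plus a group-level average in the spirit of the group-level analysis mentioned in the abstract. It is not the benchmark toolbox's implementation: the function names are hypothetical, maps are assumed to be flattened lists with predictions in [0, 1] and binary ground truth, and the weight β² = 0.3 is the value conventionally used in the SOD literature rather than something stated on this page.

```python
from statistics import mean

BETA_SQ = 0.3  # assumed F-measure weight (conventional in SOD, not stated in the source)


def mae(pred, gt):
    """Mean absolute error between a predicted map and a binary mask (lower is better)."""
    return sum(abs(p - g) for p, g in zip(pred, gt)) / len(pred)


def f_measure(pred, gt, threshold):
    """Weighted F-measure after binarizing the prediction at one threshold."""
    binary = [1 if p >= threshold else 0 for p in pred]
    true_pos = sum(b * g for b, g in zip(binary, gt))
    if true_pos == 0:
        return 0.0  # also covers empty predictions and empty masks
    precision = true_pos / sum(binary)
    recall = true_pos / sum(gt)
    return (1 + BETA_SQ) * precision * recall / (BETA_SQ * precision + recall)


def max_f_measure(pred, gt, steps=256):
    """Best F-measure over evenly spaced thresholds in [0, 1]."""
    return max(f_measure(pred, gt, t / (steps - 1)) for t in range(steps))


def group_means(scores_by_group):
    """Average per-image scores within each image group (hypothetical helper)."""
    return {group: mean(scores) for group, scores in scores_by_group.items()}


# Toy example: a 4-pixel map whose two brightest pixels match the mask.
pred = [0.9, 0.8, 0.2, 0.1]
gt = [1, 1, 0, 0]
print(mae(pred, gt), max_f_measure(pred, gt))
```

Real evaluation code would operate on image arrays and average these scores over every image in a dataset; S-measure and E-measure involve structural and alignment terms that are beyond this sketch.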

ImageNet

MS COCO

CoSal2015