Time Domain Audio Visual Speech Separation

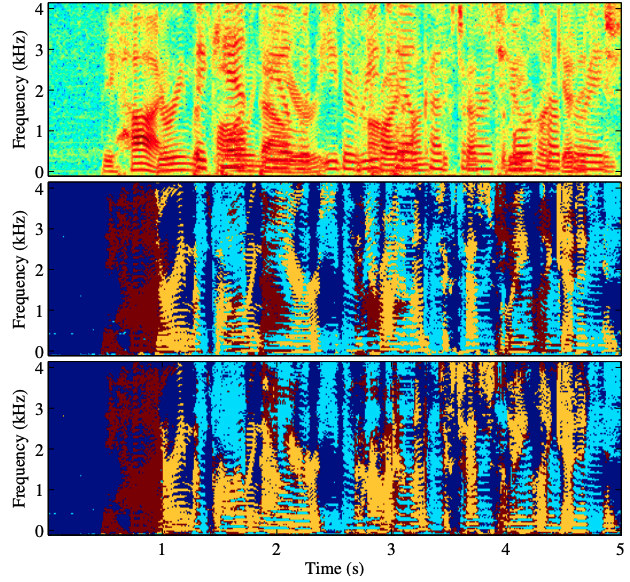

Audio-visual multi-modal modeling has been shown to be effective in many speech-related tasks, such as speech recognition and speech enhancement. This paper introduces a new time-domain audio-visual architecture for target speaker extraction from monaural mixtures. The architecture generalizes the previous TasNet (time-domain speech separation network) to enable multi-modal learning and, at the same time, extends classical audio-visual speech separation from the frequency domain to the time domain. The main components of the proposed architecture are an audio encoder, a video encoder that extracts lip embeddings from video streams, a multi-modal separation network, and an audio decoder. Experiments on simulated mixtures based on the recently released LRS2 dataset show that our method brings Si-SNR improvements of more than 3 dB and 4 dB in the two- and three-speaker cases, respectively, compared to audio-only TasNet and frequency-domain audio-visual networks.
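The abstract reports results as Si-SNR (scale-invariant signal-to-noise ratio) improvements. As a minimal sketch of how such a figure is commonly computed, the following uses the standard Si-SNR definition: both signals are made zero-mean, the estimate is projected onto the reference, and the energy of the projection is compared with the energy of the residual. The function names `si_snr` and `si_snr_improvement`, the `eps` constant, and the pure standard-library implementation are assumptions made for illustration; they are not taken from the paper's code.

```python
import math


def si_snr(estimate, target, eps=1e-8):
    """Scale-invariant SNR in dB between an estimated and a reference waveform.

    Both inputs are equal-length sequences of float samples.
    `eps` is an illustrative stabilizer against division by zero.
    """
    n = len(target)
    # Remove the mean so a constant offset does not affect the score.
    est_mean = sum(estimate) / n
    tgt_mean = sum(target) / n
    est = [x - est_mean for x in estimate]
    tgt = [x - tgt_mean for x in target]

    # Project the estimate onto the reference signal.
    dot = sum(e * t for e, t in zip(est, tgt))
    tgt_energy = sum(t * t for t in tgt)
    scale = dot / (tgt_energy + eps)
    s_target = [scale * t for t in tgt]

    # Everything not explained by the projection counts as noise.
    e_noise = [e - s for e, s in zip(est, s_target)]

    target_energy = sum(s * s for s in s_target)
    noise_energy = sum(x * x for x in e_noise)
    return 10.0 * math.log10((target_energy + eps) / (noise_energy + eps))


def si_snr_improvement(estimate, mixture, target):
    """Si-SNR gain of the separated estimate over the unprocessed mixture."""
    return si_snr(estimate, target) - si_snr(mixture, target)


# Toy example with synthetic tones standing in for two speakers.
target = [math.sin(0.1 * k) for k in range(1000)]
interferer = [math.sin(0.37 * k + 1.0) for k in range(1000)]
mixture = [t + i for t, i in zip(target, interferer)]
# A hypothetical separator output that suppresses the interferer by 20 dB.
estimate = [t + 0.1 * i for t, i in zip(target, interferer)]

improvement = si_snr_improvement(estimate, mixture, target)
print(f"Si-SNR improvement: {improvement:.2f} dB")
```

Because the estimate is projected onto the reference before the ratio is taken, rescaling the estimate leaves the score unchanged, which is why this metric suits time-domain models whose output gain is not constrained.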


WSJ0-2mix


LRS2
