TVQA: Localized, Compositional Video Question Answering

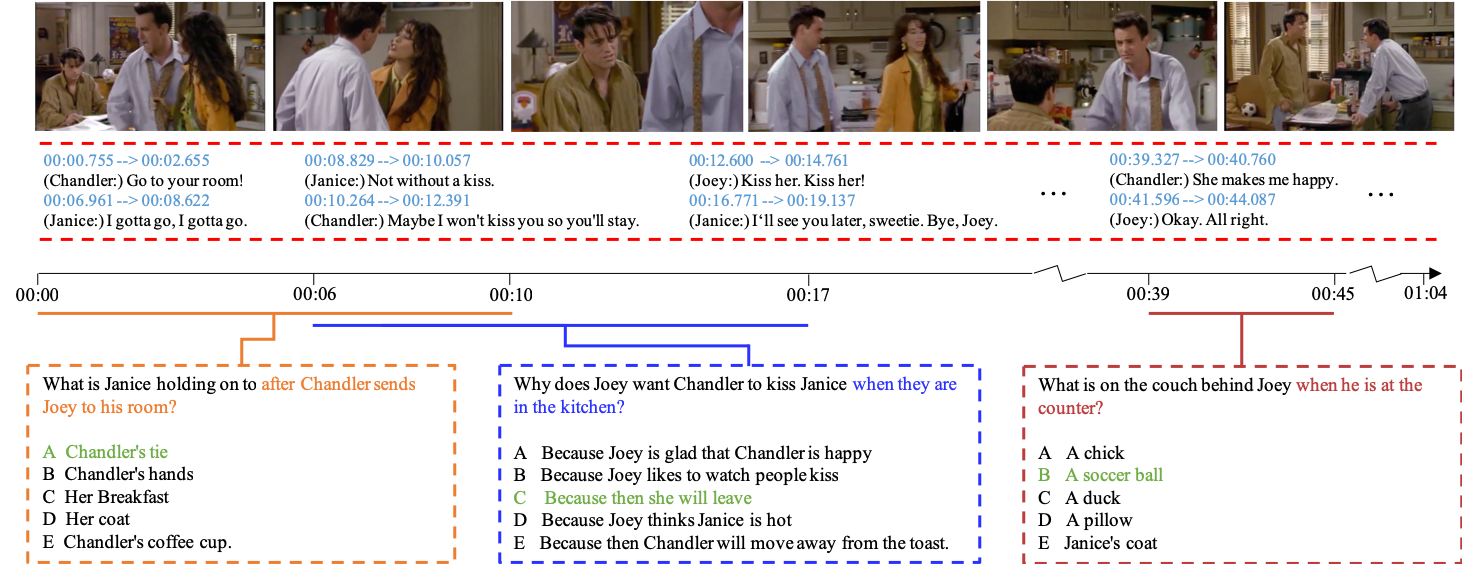

Recent years have witnessed an increasing interest in image-based question-answering (QA) tasks. However, due to data limitations, there has been much less work on video-based QA. In this paper, we present TVQA, a large-scale video QA dataset based on 6 popular TV shows. TVQA consists of 152,545 QA pairs from 21,793 clips, spanning over 460 hours of video. Questions are designed to be compositional in nature, requiring systems to jointly localize relevant moments within a clip, comprehend subtitle-based dialogue, and recognize relevant visual concepts. We provide analyses of this new dataset as well as several baselines and a multi-stream end-to-end trainable neural network framework for the TVQA task. The dataset is publicly available at http://tvqa.cs.unc.edu.

PDF Abstract EMNLP 2018 PDF EMNLP 2018 AbstractCode

Tasks

Datasets

Introduced in the Paper:

TVQA

TVQA

Used in the Paper:

ImageNet

ImageNet

Visual Question Answering

Visual Question Answering

CLEVR

CLEVR

MCTest

MCTest

LSMDC

LSMDC

Visual7W

Visual7W

MovieQA

MovieQA

COCO-QA

COCO-QA

SUTD-TrafficQA

SUTD-TrafficQA

Visual Madlibs

MovieFIB

Visual Madlibs

MovieFIB