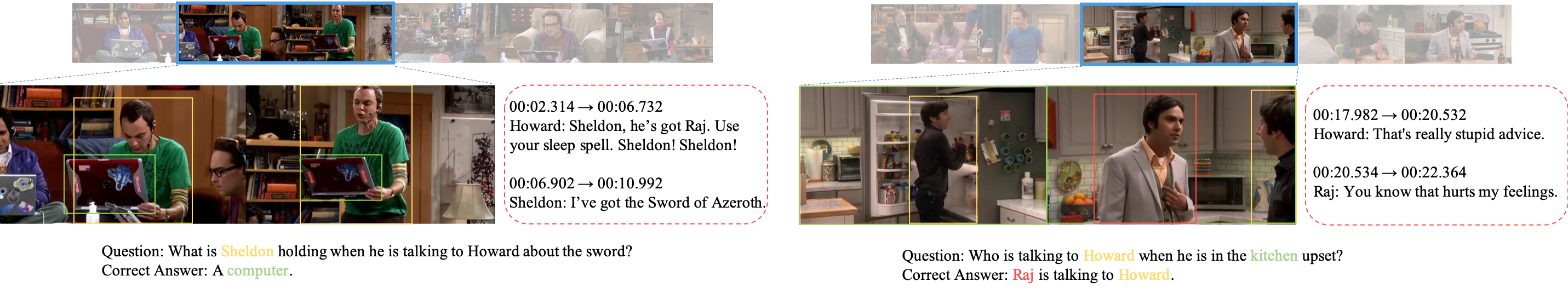

TVQA+: Spatio-Temporal Grounding for Video Question Answering

We present the task of Spatio-Temporal Video Question Answering, which requires intelligent systems to simultaneously retrieve relevant moments and detect referenced visual concepts (people and objects) to answer natural language questions about videos. We first augment the TVQA dataset with 310.8K bounding boxes, linking depicted objects to visual concepts in questions and answers. We name this augmented version TVQA+. We then propose Spatio-Temporal Answerer with Grounded Evidence (STAGE), a unified framework that grounds evidence in both spatial and temporal domains to answer questions about videos. Comprehensive experiments and analyses demonstrate the effectiveness of our framework and how the rich annotations in our TVQA+ dataset can contribute to the question answering task. Moreover, by performing this joint task, our model is able to produce insightful and interpretable spatio-temporal attention visualizations. Dataset and code are publicly available at: http://tvqa.cs.unc.edu, https://github.com/jayleicn/TVQAplus

ACL 2020

TVQA+

Visual Question Answering

TVQA

MovieQA
