Unsupervised Object Localization: Observing the Background to Discover Objects

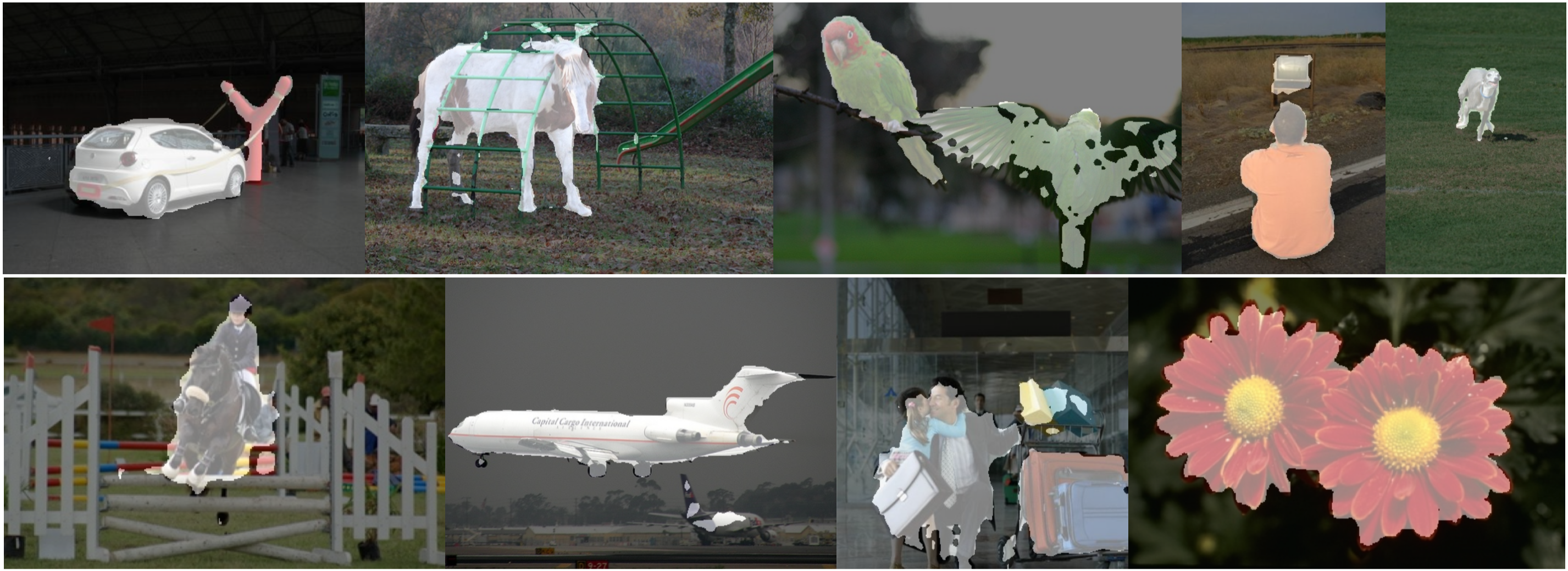

Recent advances in self-supervised visual representation learning have paved the way for unsupervised methods tackling tasks such as object discovery and instance segmentation. However, discovering objects in an image with no supervision is a very hard task: what are the desired objects, when should they be separated into parts, how many are there, and of what classes? The answers to these questions depend on the tasks and datasets of evaluation. In this work, we take a different approach and propose to look for the background instead. This way, the salient objects emerge as a by-product without any strong assumption on what an object should be. We propose FOUND, a simple model made of a single $1\times1$ convolution layer initialized with coarse background masks extracted from self-supervised patch-based representations. After fast training and refinement of these seed masks, the model reaches state-of-the-art results on unsupervised saliency detection and object discovery benchmarks. Moreover, we show that our approach yields good results in the unsupervised semantic segmentation retrieval task. The code to reproduce our results is available at https://github.com/valeoai/FOUND.
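To make the idea concrete, here is a minimal pure-Python sketch, not the authors' implementation (see the repository above for that). It assumes a coarse background mask can be obtained by thresholding cosine similarity to a chosen background seed patch, and that a single-output $1\times1$ convolution (the same linear map applied to every patch) is trained with a logistic loss to predict the complement of that mask. The toy features, the seed index, the threshold `tau`, and all function names are hypothetical choices made for illustration.

```python
import math

def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv + 1e-8)

def coarse_background_mask(feats, seed_idx, tau=0.3):
    """Assumed heuristic: a patch is background if it resembles the seed patch."""
    seed = feats[seed_idx]
    return [1.0 if cosine(f, seed) > tau else 0.0 for f in feats]

def conv1x1(feats, weight, bias):
    """1x1 convolution with one output channel: one dot product per patch."""
    return [sum(w * x for w, x in zip(weight, f)) + bias for f in feats]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(feats, targets, steps=200, lr=0.5):
    """Fit the 1x1 convolution with plain gradient descent on a logistic loss."""
    dim, n = len(feats[0]), len(feats)
    weight, bias = [0.0] * dim, 0.0
    for _ in range(steps):
        probs = [sigmoid(z) for z in conv1x1(feats, weight, bias)]
        errs = [p - t for p, t in zip(probs, targets)]
        for d in range(dim):
            weight[d] -= lr * sum(e * f[d] for e, f in zip(errs, feats)) / n
        bias -= lr * sum(errs) / n
    return weight, bias

# Toy "image" of six patches with 3-dimensional features (hypothetical values):
# patches 0, 1, 4, 5 look alike (background), patches 2, 3 differ (object).
feats = [
    [1.0, 0.1, 0.0], [0.9, 0.0, 0.1], [0.1, 1.0, 0.0],
    [0.0, 0.9, 0.1], [1.0, 0.0, 0.0], [0.95, 0.05, 0.0],
]

# Patch 0 is assumed to be a background seed.
background = coarse_background_mask(feats, seed_idx=0)

# Objects are taken as the complement of the coarse background mask.
object_targets = [1.0 - b for b in background]

weight, bias = train(feats, object_targets)
predicted_object = [
    1.0 if sigmoid(z) > 0.5 else 0.0 for z in conv1x1(feats, weight, bias)
]
```

In this sketch the object mask is never specified directly: it falls out as whatever is not background, which mirrors the motivation given in the abstract. A real pipeline would operate on features from a self-supervised vision backbone rather than hand-written vectors.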

Published at CVPR 2023.

MS COCO

DUTS

DUT-OMRON

PASCAL VOC 2007

VOC 2012

ECSSD
