VideoComposer: Compositional Video Synthesis with Motion Controllability

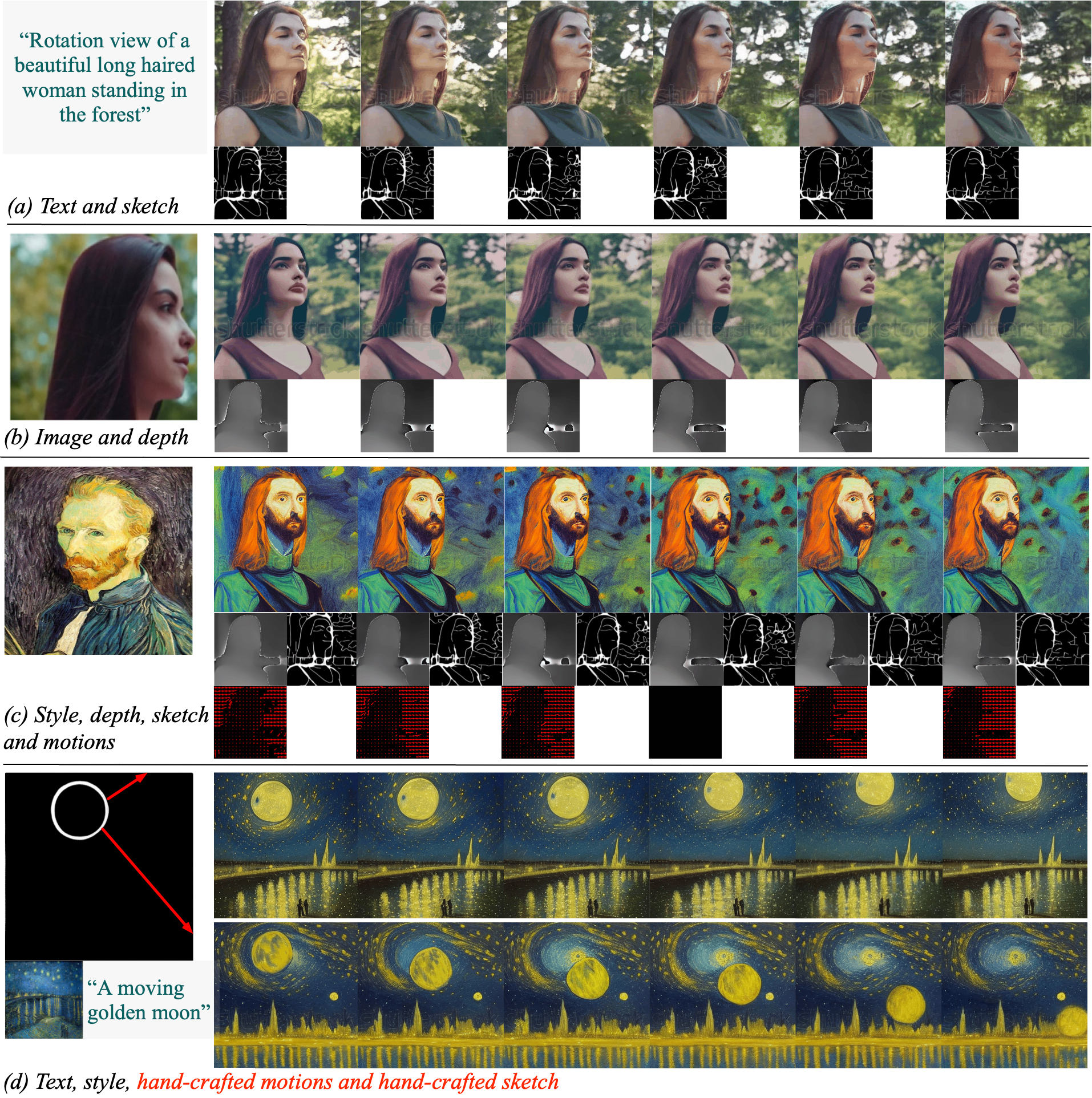

The pursuit of controllability as a higher standard of visual content creation has yielded remarkable progress in customizable image synthesis. However, achieving controllable video synthesis remains challenging due to the large variation in temporal dynamics and the requirement of cross-frame temporal consistency. Based on the paradigm of compositional generation, this work presents VideoComposer, which allows users to flexibly compose a video with textual conditions, spatial conditions, and, more importantly, temporal conditions. Specifically, considering the characteristics of video data, we introduce the motion vector from compressed videos as an explicit control signal that provides guidance on temporal dynamics. In addition, we develop a Spatio-Temporal Condition encoder (STC-encoder) that serves as a unified interface to effectively incorporate the spatial and temporal relations of sequential inputs, with which the model can make better use of temporal conditions and hence achieve higher inter-frame consistency. Extensive experimental results suggest that VideoComposer is able to control the spatial and temporal patterns of a synthesized video simultaneously, using conditions in various forms such as text descriptions, sketch sequences, reference videos, or even simply hand-crafted motions. The code and models will be publicly available at https://videocomposer.github.io.
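The abstract says only that motion vectors are taken "from compressed videos". Codecs such as H.264 store a per-block displacement that points from each block of the current frame to its best match in a reference frame. The sketch below is a minimal illustration of what such a temporal control signal looks like. It recomputes comparable block vectors with exhaustive block matching (a swapped-in technique, not the paper's extraction pipeline). The function names `block_motion_vectors` and `motion_condition`, the block size of 8, the search radius of 4 and the `(T-1, 2, H/block, W/block)` layout are all hypothetical choices.

```python
import numpy as np

def block_motion_vectors(prev, curr, block=8, search=4):
    """Estimate one (dy, dx) motion vector per block of `curr` (hypothetical helper).

    For each block of `curr`, every offset in a (2*search+1)^2 window of `prev`
    is tried, and the offset with the lowest sum of absolute differences (SAD)
    is kept. The vector points from the block in `curr` to its match in `prev`,
    as codec motion vectors point into the reference frame.
    Assumption: grayscale frames whose height and width are multiples of `block`.
    """
    h, w = curr.shape
    mv = np.zeros((2, h // block, w // block), dtype=np.int64)
    for by in range(h // block):
        for bx in range(w // block):
            y0, x0 = by * block, bx * block
            target = curr[y0:y0 + block, x0:x0 + block].astype(np.float64)
            best_sad, best = np.inf, (0, 0)
            for dy in range(-search, search + 1):
                for dx in range(-search, search + 1):
                    y, x = y0 + dy, x0 + dx
                    if y < 0 or x < 0 or y + block > h or x + block > w:
                        continue  # candidate block would fall outside the frame
                    cand = prev[y:y + block, x:x + block].astype(np.float64)
                    sad = np.abs(target - cand).sum()
                    if sad < best_sad:
                        best_sad, best = sad, (dy, dx)
            mv[:, by, bx] = best
    return mv

def motion_condition(frames, block=8, search=4):
    """Stack per-frame-pair motion fields into a (T-1, 2, H/block, W/block) array."""
    return np.stack([
        block_motion_vectors(frames[t - 1], frames[t], block, search)
        for t in range(1, len(frames))
    ])

# Usage: content shifts 2 px to the right between two random 32x32 frames.
rng = np.random.default_rng(0)
f0 = rng.random((32, 32))
f1 = np.roll(f0, shift=2, axis=1)
mv = motion_condition([f0, f1])
print(mv.shape)  # (1, 2, 4, 4)
# Away from the left border, where np.roll wraps pixels around, each block
# matches exactly at dy = 0, dx = -2 (2 px to the left in the previous frame).
print(mv[0, :, 0, 1:])
```

Because the vectors are stored per block, the resulting field is a coarse, low-resolution description of motion rather than a dense optical flow. In this sketch, a rightward motion appears as a negative `dx` because each vector points back into the previous frame.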

NeurIPS 2023

Results from the Paper

Ranked #5 on Text-to-Video Generation on the EvalCrafter Text-to-Video (ECTV) Dataset (using extra training data)

MSR-VTT

LAION-400M