What do Large Language Models Learn beyond Language?

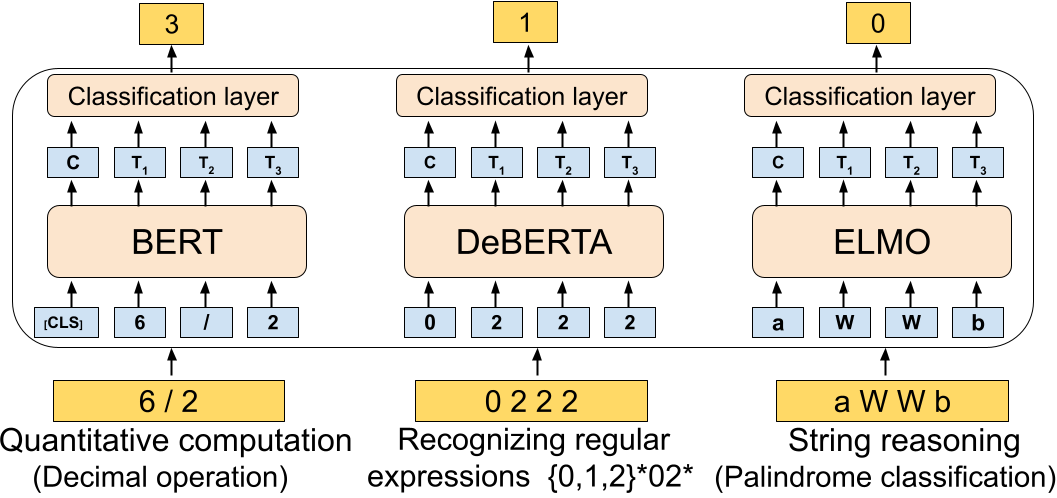

Large language models (LMs) have rapidly become a mainstay in Natural Language Processing. These models are known to acquire rich linguistic knowledge from training on large amounts of text. In this paper, we investigate whether pretraining on text also gives these models helpful "inductive biases" for non-linguistic reasoning. On a set of 19 diverse non-linguistic tasks involving quantitative computations, recognizing regular expressions, and reasoning over strings, we find that pretrained models significantly outperform comparable non-pretrained neural models. This remains true even when the non-pretrained models are trained with fewer parameters to account for model regularization effects. We further explore the effect of text domain on LMs by pretraining models on text from different domains and provenances. Surprisingly, our experiments reveal that the positive effects of pretraining persist even when pretraining on multilingual text or computer code, and even on text generated from synthetic languages. Our findings suggest a hitherto unexplored deep connection between pretraining and the inductive learning abilities of language models.

SNLI