Search Results

Found 242 papers, 155 papers with code

NTIRE 2024 Challenge on Short-form UGC Video Quality Assessment: Methods and Results

This paper reviews the NTIRE 2024 Challenge on Short-form UGC Video Quality Assessment (S-UGC VQA), where various excellent solutions were submitted and evaluated on the collected dataset KVQ from a popular short-form video platform, i.e., the Kuaishou/Kwai Platform.

NIR-Assisted Image Denoising: A Selective Fusion Approach and A Real-World Benchmark Dataset

Despite the significant progress in image denoising, it is still challenging to restore fine-scale details while removing noise, especially in extremely low-light environments.

TBSN: Transformer-Based Blind-Spot Network for Self-Supervised Image Denoising

For channel self-attention, we observe that it may leak the blind-spot information when the channel number is greater than spatial size in the deep layers of multi-scale architectures.

SmartControl: Enhancing ControlNet for Handling Rough Visual Conditions

The key idea of our SmartControl is to relax the visual condition on the areas that conflict with the text prompts.

Responsible Visual Editing

To mitigate the negative implications of harmful images on research, we create a transparent and public dataset, AltBear, which expresses harmful information using teddy bears instead of humans.

MC$^2$: Multi-concept Guidance for Customized Multi-concept Generation

Customized text-to-image generation aims to synthesize instantiations of user-specified concepts and has achieved unprecedented progress in handling individual concepts.

ShoeModel: Learning to Wear on the User-specified Shoes via Diffusion Model

Specifically, we propose a shoe-wearing system, called Shoe-Model, to generate plausible images of human legs interacting with the given shoes.

Dual-Camera Smooth Zoom on Mobile Phones

In this work, we introduce a new task, i.e., dual-camera smooth zoom (DCSZ), to achieve a smooth zoom preview.

Self-Supervised Video Desmoking for Laparoscopic Surgery

On the other hand, in order to enhance the desmoking performance, we further feed the valuable information from PS frame into models, where a masking strategy and a regularization term are presented to avoid trivial solutions.

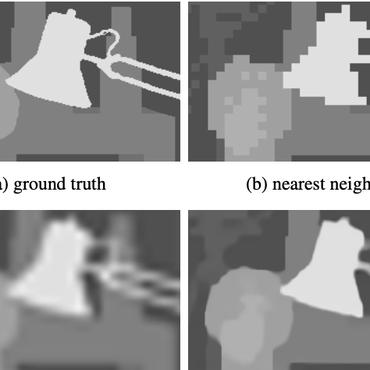

Learning Hierarchical Color Guidance for Depth Map Super-Resolution

On the one hand, the low-level detail embedding module is designed to supplement high-frequency color information of depth features in a residual mask manner at the low-level stages.