Search Results for author: Chang D. Yoo

Found 63 papers, 24 papers with code

C-TPT: Calibrated Test-Time Prompt Tuning for Vision-Language Models via Text Feature Dispersion

no code implementations • 21 Mar 2024 • Hee Suk Yoon, Eunseop Yoon, Joshua Tian Jin Tee, Mark Hasegawa-Johnson, Yingzhen Li, Chang D. Yoo

Through a series of observations, we find that the prompt choice significantly affects the calibration in CLIP, where the prompts leading to higher text feature dispersion result in better-calibrated predictions.

AdaMER-CTC: Connectionist Temporal Classification with Adaptive Maximum Entropy Regularization for Automatic Speech Recognition

no code implementations • 18 Mar 2024 • SooHwan Eom, Eunseop Yoon, Hee Suk Yoon, Chanwoo Kim, Mark Hasegawa-Johnson, Chang D. Yoo

In Automatic Speech Recognition (ASR) systems, a recurring obstacle is the generation of narrowly focused output distributions.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

+1

Cross-view Masked Diffusion Transformers for Person Image Synthesis

no code implementations • 2 Feb 2024 • Trung X. Pham, Zhang Kang, Chang D. Yoo

Our best model surpasses the pixel-based diffusion with $\frac{2}{3}$ of the parameters and achieves $5.43 \times$ faster inference.

Wavelet-Guided Acceleration of Text Inversion in Diffusion-Based Image Editing

no code implementations • 18 Jan 2024 • Gwanhyeong Koo, Sunjae Yoon, Chang D. Yoo

To address this, we introduce an innovative method that maintains the principles of the NTI while accelerating the image editing process.

Querying Easily Flip-flopped Samples for Deep Active Learning

no code implementations • 18 Jan 2024 • Seong Jin Cho, Gwangsu Kim, Junghyun Lee, Jinwoo Shin, Chang D. Yoo

Active learning is a machine learning paradigm that aims to improve the performance of a model by strategically selecting and querying unlabeled data.

Continual Learning: Forget-free Winning Subnetworks for Video Representations

2 code implementations • 19 Dec 2023 • Haeyong Kang, Jaehong Yoon, Sung Ju Hwang, Chang D. Yoo

Inspired by the Lottery Ticket Hypothesis (LTH), which highlights the existence of efficient subnetworks within larger, dense networks, a high-performing Winning Subnetwork (WSN) in terms of task performance under appropriate sparsity conditions is considered for various continual learning tasks.

Neutral Editing Framework for Diffusion-based Video Editing

no code implementations • 10 Dec 2023 • Sunjae Yoon, Gwanhyeong Koo, Ji Woo Hong, Chang D. Yoo

To this end, this paper proposes the Neutral Editing (NeuEdit) framework to enable complex non-rigid editing by changing the motion of a person/object in a video, which has never been attempted before.

SimPSI: A Simple Strategy to Preserve Spectral Information in Time Series Data Augmentation

1 code implementation • 10 Dec 2023 • Hyun Ryu, Sunjae Yoon, Hee Suk Yoon, Eunseop Yoon, Chang D. Yoo

Our experimental results support that SimPSI considerably enhances the performance of time series data augmentations by preserving core spectral information.

Flexible Cross-Modal Steganography via Implicit Representations

no code implementations • 9 Dec 2023 • Seoyun Yang, Sojeong Song, Chang D. Yoo, Junmo Kim

We present INRSteg, an innovative lossless steganography framework based on a novel data form Implicit Neural Representations (INR) that is modal-agnostic.

Learning Pseudo-Labeler beyond Noun Concepts for Open-Vocabulary Object Detection

no code implementations • 4 Dec 2023 • Sunghun Kang, Junbum Cha, Jonghwan Mun, Byungseok Roh, Chang D. Yoo

Specifically, the proposed method aims to learn arbitrary image-to-text mapping for pseudo-labeling of arbitrary concepts, named Pseudo-Labeling for Arbitrary Concepts (PLAC).

DifAugGAN: A Practical Diffusion-style Data Augmentation for GAN-based Single Image Super-resolution

no code implementations • 30 Nov 2023 • Axi Niu, Kang Zhang, Joshua Tian Jin Tee, Trung X. Pham, Jinqiu Sun, Chang D. Yoo, In So Kweon, Yanning Zhang

It is well known that the adversarial optimization of GAN-based image super-resolution (SR) methods makes the preceding SR model generate unpleasant and undesirable artifacts, leading to large distortion.

SCANet: Scene Complexity Aware Network for Weakly-Supervised Video Moment Retrieval

no code implementations • ICCV 2023 • Sunjae Yoon, Gwanhyeong Koo, Dahyun Kim, Chang D. Yoo

These proposals are assumed to contain many distinguishable scenes in a video as candidates.

Unsupervised Speech Recognition with N-Skipgram and Positional Unigram Matching

1 code implementation • 3 Oct 2023 • Liming Wang, Mark Hasegawa-Johnson, Chang D. Yoo

Training unsupervised speech recognition systems presents challenges due to GAN-associated instability, misalignment between speech and text, and significant memory demands.

DimCL: Dimensional Contrastive Learning For Improving Self-Supervised Learning

no code implementations • 21 Sep 2023 • Thanh Nguyen, Trung Pham, Chaoning Zhang, Tung Luu, Thang Vu, Chang D. Yoo

Self-supervised learning (SSL) has gained remarkable success, for which contrastive learning (CL) plays a key role.

Mitigating the Exposure Bias in Sentence-Level Grapheme-to-Phoneme (G2P) Transduction

no code implementations • 16 Aug 2023 • Eunseop Yoon, Hee Suk Yoon, Dhananjaya Gowda, SooHwan Eom, Daehyeok Kim, John Harvill, Heting Gao, Mark Hasegawa-Johnson, Chanwoo Kim, Chang D. Yoo

Text-to-Text Transfer Transformer (T5) has recently been considered for the Grapheme-to-Phoneme (G2P) transduction.

A Theory of Unsupervised Speech Recognition

1 code implementation • 9 Jun 2023 • Liming Wang, Mark Hasegawa-Johnson, Chang D. Yoo

Unsupervised speech recognition (ASR-U) is the problem of learning automatic speech recognition (ASR) systems from unpaired speech-only and text-only corpora.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

+2

INTapt: Information-Theoretic Adversarial Prompt Tuning for Enhanced Non-Native Speech Recognition

no code implementations • 25 May 2023 • Eunseop Yoon, Hee Suk Yoon, John Harvill, Mark Hasegawa-Johnson, Chang D. Yoo

INTapt is trained simultaneously in the following two manners: (1) adversarial training to reduce accent feature dependence between the original input and the prompt-concatenated input and (2) training to minimize CTC loss for improving ASR performance to a prompt-concatenated input.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

+2

Forget-free Continual Learning with Soft-Winning SubNetworks

1 code implementation • 27 Mar 2023 • Haeyong Kang, Jaehong Yoon, Sultan Rizky Madjid, Sung Ju Hwang, Chang D. Yoo

Inspired by Regularized Lottery Ticket Hypothesis (RLTH), which states that competitive smooth (non-binary) subnetworks exist within a dense network in continual learning tasks, we investigate two proposed architecture-based continual learning methods which sequentially learn and select adaptive binary- (WSN) and non-binary Soft-Subnetworks (SoftNet) for each task.

Maximum margin learning of t-SPNs for cell classification with filtered input

no code implementations • 16 Mar 2023 • Haeyong Kang, Chang D. Yoo, Yongcheon Na

The t-SPN is constructed such that the unnormalized probability is represented as conditional probabilities of a subset of most similar cell classes.

ESD: Expected Squared Difference as a Tuning-Free Trainable Calibration Measure

1 code implementation • 4 Mar 2023 • Hee Suk Yoon, Joshua Tian Jin Tee, Eunseop Yoon, Sunjae Yoon, Gwangsu Kim, Yingzhen Li, Chang D. Yoo

Studies have shown that modern neural networks tend to be poorly calibrated due to over-confident predictions.

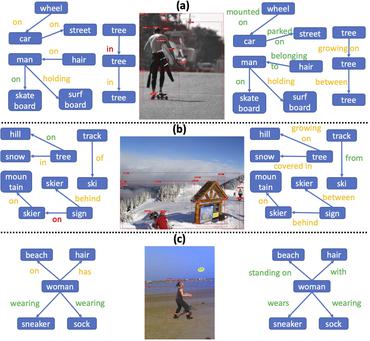

Skew Class-balanced Re-weighting for Unbiased Scene Graph Generation

1 code implementation • 1 Jan 2023 • Haeyong Kang, Chang D. Yoo

An unbiased scene graph generation (SGG) algorithm referred to as Skew Class-balanced Re-weighting (SCR) is proposed for considering the unbiased predicate prediction caused by the long-tailed distribution.

SMSMix: Sense-Maintained Sentence Mixup for Word Sense Disambiguation

no code implementations • 14 Dec 2022 • Hee Suk Yoon, Eunseop Yoon, John Harvill, Sunjae Yoon, Mark Hasegawa-Johnson, Chang D. Yoo

To the best of our knowledge, this is the first attempt to apply mixup in NLP while preserving the meaning of a specific word.

Information-Theoretic Text Hallucination Reduction for Video-grounded Dialogue

no code implementations • 12 Dec 2022 • Sunjae Yoon, Eunseop Yoon, Hee Suk Yoon, Junyeong Kim, Chang D. Yoo

Despite the recent success of multi-modal reasoning to generate answer sentences, existing dialogue systems still suffer from a text hallucination problem, which denotes indiscriminate text-copying from input texts without an understanding of the question.

Self-Supervised Visual Representation Learning via Residual Momentum

no code implementations • 17 Nov 2022 • Trung X. Pham, Axi Niu, Zhang Kang, Sultan Rizky Madjid, Ji Woo Hong, Daehyeok Kim, Joshua Tian Jin Tee, Chang D. Yoo

To solve this problem, we propose "residual momentum" to directly reduce this gap to encourage the student to learn the representation as close to that of the teacher as possible, narrow the performance gap with the teacher, and significantly improve the existing SSL.

Selective Query-guided Debiasing for Video Corpus Moment Retrieval

1 code implementation • 17 Oct 2022 • Sunjae Yoon, Ji Woo Hong, Eunseop Yoon, Dahyun Kim, Junyeong Kim, Hee Suk Yoon, Chang D. Yoo

Video moment retrieval (VMR) aims to localize target moments in untrimmed videos pertinent to a given textual query.

Scalable SoftGroup for 3D Instance Segmentation on Point Clouds

1 code implementation • 17 Sep 2022 • Thang Vu, Kookhoi Kim, Tung M. Luu, Thanh Nguyen, Junyeong Kim, Chang D. Yoo

Furthermore, SoftGroup can be extended to perform object detection and panoptic segmentation with nontrivial improvements over existing methods.

On the Soft-Subnetwork for Few-shot Class Incremental Learning

2 code implementations • 15 Sep 2022 • Haeyong Kang, Jaehong Yoon, Sultan Rizky Hikmawan Madjid, Sung Ju Hwang, Chang D. Yoo

Inspired by Regularized Lottery Ticket Hypothesis (RLTH), which hypothesizes that there exist smooth (non-binary) subnetworks within a dense network that achieve the competitive performance of the dense network, we propose a few-shot class incremental learning (FSCIL) method referred to as Soft-SubNetworks (SoftNet).

On the Pros and Cons of Momentum Encoder in Self-Supervised Visual Representation Learning

no code implementations • 11 Aug 2022 • Trung Pham, Chaoning Zhang, Axi Niu, Kang Zhang, Chang D. Yoo

Exponential Moving Average (EMA or momentum) is widely used in modern self-supervised learning (SSL) approaches, such as MoCo, for enhancing performance.

Decoupled Adversarial Contrastive Learning for Self-supervised Adversarial Robustness

2 code implementations • 22 Jul 2022 • Chaoning Zhang, Kang Zhang, Chenshuang Zhang, Axi Niu, Jiu Feng, Chang D. Yoo, In So Kweon

Adversarial training (AT) for robust representation learning and self-supervised learning (SSL) for unsupervised representation learning are two active research fields.

Forget-free Continual Learning with Winning Subnetworks

1 code implementation • International Conference on Machine Learning 2022 • Haeyong Kang, Rusty John Lloyd Mina, Sultan Rizky Hikmawan Madjid, Jaehong Yoon, Mark Hasegawa-Johnson, Sung Ju Hwang, Chang D. Yoo

Inspired by Lottery Ticket Hypothesis that competitive subnetworks exist within a dense network, we propose a continual learning method referred to as Winning SubNetworks (WSN), which sequentially learns and selects an optimal subnetwork for each task.

Learning Imbalanced Datasets with Maximum Margin Loss

1 code implementation • 11 Jun 2022 • Haeyong Kang, Thang Vu, Chang D. Yoo

A learning algorithm referred to as Maximum Margin (MM) is proposed for considering the class-imbalance data learning issue: the trained model tends to predict the majority of classes rather than the minority ones.

How Does SimSiam Avoid Collapse Without Negative Samples? A Unified Understanding with Self-supervised Contrastive Learning

no code implementations • 30 Mar 2022 • Chaoning Zhang, Kang Zhang, Chenshuang Zhang, Trung X. Pham, Chang D. Yoo, In So Kweon

This yields a unified perspective on how negative samples and SimSiam alleviate collapse.

Dual Temperature Helps Contrastive Learning Without Many Negative Samples: Towards Understanding and Simplifying MoCo

2 code implementations • CVPR 2022 • Chaoning Zhang, Kang Zhang, Trung X. Pham, Axi Niu, Zhinan Qiao, Chang D. Yoo, In So Kweon

Contrastive learning (CL) is widely known to require many negative samples, 65536 in MoCo for instance, for which the performance of a dictionary-free framework is often inferior because the negative sample size (NSS) is limited by its mini-batch size (MBS).

SoftGroup for 3D Instance Segmentation on Point Clouds

1 code implementation • CVPR 2022 • Thang Vu, Kookhoi Kim, Tung M. Luu, Xuan Thanh Nguyen, Chang D. Yoo

The hard predictions are made when performing semantic segmentation such that each point is associated with a single class.

Ranked #3 on 3D Instance Segmentation on STPLS3D

Fast Adversarial Training with Noise Augmentation: A Unified Perspective on RandStart and GradAlign

no code implementations • 11 Feb 2022 • Axi Niu, Kang Zhang, Chaoning Zhang, Chenshuang Zhang, In So Kweon, Chang D. Yoo, Yanning Zhang

The former works only for a relatively small perturbation of 8/255 under the $\ell_\infty$ constraint, and GradAlign improves on it by extending the perturbation size to 16/255 (also under the $\ell_\infty$ constraint), but at the cost of being 3 to 4 times slower.

GDCA: GAN-based single image super resolution with Dual discriminators and Channel Attention

no code implementations • 9 Nov 2021 • Thanh Nguyen, Hieu Hoang, Chang D. Yoo

Single Image Super-Resolution (SISR) is a very active research field.

Hindsight Goal Ranking on Replay Buffer for Sparse Reward Environment

1 code implementation • 28 Oct 2021 • Tung M. Luu, Chang D. Yoo

The actual sampling for large TD error is performed in two steps: first, an episode is sampled from the replay buffer according to the average TD error of its experiences, and then, for the sampled episode, the hindsight goal leading to larger TD error is sampled with higher probability from future visited states.
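
The two-step sampling described in this abstract can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation: the function names, the data layout (a list of per-experience TD errors for each episode, and a list of TD errors indexed by candidate goal step), the use of absolute TD error as the priority, and the `EPS` smoothing term are all assumptions made for the example.

```python
import random

EPS = 1e-6  # assumed smoothing so zero-error items can still be sampled


def sample_episode(episode_td_errors, rng):
    """Step 1: pick an episode index with probability proportional to the
    mean absolute TD error of its experiences (hypothetical layout)."""
    weights = [
        sum(abs(e) for e in errs) / len(errs) + EPS
        for errs in episode_td_errors
    ]
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


def sample_hindsight_goal(goal_td_errors, t, rng):
    """Step 2: among states visited after step t, pick one as the hindsight
    goal, favouring goals with larger absolute TD error.
    Assumes t is not the final step, so at least one future state exists."""
    future = range(t + 1, len(goal_td_errors))
    weights = [abs(goal_td_errors[g]) + EPS for g in future]
    return rng.choices(future, weights=weights, k=1)[0]
```

Both steps use proportional (roulette-wheel) sampling for simplicity; a rank-based priority could be substituted in either step without changing the overall structure.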

How Does SimSiam Avoid Collapse Without Negative Samples? Towards a Unified Understanding of Progress in SSL

no code implementations • ICLR 2022 • Chaoning Zhang, Kang Zhang, Chenshuang Zhang, Trung X. Pham, Chang D. Yoo, In So Kweon

Towards avoiding collapse in self-supervised learning (SSL), contrastive loss is widely used but often requires a large number of negative samples.

Active Learning: Sampling in the Least Probable Disagreement Region

no code implementations • 29 Sep 2021 • Seong Jin Cho, Gwangsu Kim, Chang D. Yoo

This strategy is valid only when the sample's "closeness" to the decision boundary can be estimated.

LEARNING PHONEME-LEVEL DISCRETE SPEECH REPRESENTATION WITH WORD-LEVEL SUPERVISION

no code implementations • 29 Sep 2021 • Liming Wang, Siyuan Feng, Mark A. Hasegawa-Johnson, Chang D. Yoo

Phonemes are defined by their relationship to words: changing a phoneme changes the word.

Fast and Efficient MMD-based Fair PCA via Optimization over Stiefel Manifold

2 code implementations • 23 Sep 2021 • Junghyun Lee, Gwangsu Kim, Matt Olfat, Mark Hasegawa-Johnson, Chang D. Yoo

This paper defines fair principal component analysis (PCA) as minimizing the maximum mean discrepancy (MMD) between dimensionality-reduced conditional distributions of different protected classes.

Self-supervised Learning with Local Attention-Aware Feature

no code implementations • 1 Aug 2021 • Trung X. Pham, Rusty John Lloyd Mina, Dias Issa, Chang D. Yoo

In this work, we propose a novel methodology for self-supervised learning for generating global and local attention-aware visual features.

Structured Co-reference Graph Attention for Video-grounded Dialogue

no code implementations • 24 Mar 2021 • Junyeong Kim, Sunjae Yoon, Dahyun Kim, Chang D. Yoo

A video-grounded dialogue system referred to as the Structured Co-reference Graph Attention (SCGA) is presented for decoding the answer sequence to a question regarding a given video while keeping track of the dialogue context.

Robust MAML: Prioritization task buffer with adaptive learning process for model-agnostic meta-learning

no code implementations • 15 Mar 2021 • Thanh Nguyen, Tung Luu, Trung Pham, Sanzhar Rakhimkul, Chang D. Yoo

Model agnostic meta-learning (MAML) is a popular state-of-the-art meta-learning algorithm that provides good weight initialization of a model given a variety of learning tasks.

Sample-efficient Reinforcement Learning Representation Learning with Curiosity Contrastive Forward Dynamics Model

1 code implementation • 15 Mar 2021 • Thanh Nguyen, Tung M. Luu, Thang Vu, Chang D. Yoo

Developing an agent in reinforcement learning (RL) that is capable of performing complex control tasks directly from high-dimensional observation such as raw pixels is yet a challenge as efforts are made towards improving sample efficiency and generalization.

Semantic Grouping Network for Video Captioning

1 code implementation • 1 Feb 2021 • Hobin Ryu, Sunghun Kang, Haeyong Kang, Chang D. Yoo

This paper considers a video caption generating network referred to as Semantic Grouping Network (SGN) that attempts (1) to group video frames with discriminating word phrases of partially decoded caption and then (2) to decode those semantically aligned groups in predicting the next word.

Least Probable Disagreement Region for Active Learning

no code implementations • 1 Jan 2021 • Seong Jin Cho, Gwangsu Kim, Chang D. Yoo

The active learning strategy of querying unlabeled samples nearer the estimated decision boundary at each step is known to be effective when the distance from the sample data to the decision boundary can be explicitly evaluated; however, in numerous cases in machine learning, especially those involving deep learning, a conventional distance such as the $\ell_p$ distance from the sample to the decision boundary is not readily measurable.

SCNet: Training Inference Sample Consistency for Instance Segmentation

2 code implementations • 18 Dec 2020 • Thang Vu, Haeyong Kang, Chang D. Yoo

This paper proposes an architecture referred to as Sample Consistency Network (SCNet) to ensure that the IoU distribution of the samples at training time is close to that at inference time.

VLANet: Video-Language Alignment Network for Weakly-Supervised Video Moment Retrieval

1 code implementation • ECCV 2020 • Minuk Ma, Sunjae Yoon, Junyeong Kim, Young-Joon Lee, Sunghun Kang, Chang D. Yoo

This paper explores methods for performing VMR in a weakly-supervised manner (wVMR): training is performed without temporal moment labels but only with the text query that describes a segment of the video.

Modality Shifting Attention Network for Multi-modal Video Question Answering

no code implementations • CVPR 2020 • Junyeong Kim, Minuk Ma, Trung Pham, Kyung-Su Kim, Chang D. Yoo

To this end, MSAN is based on (1) the moment proposal network (MPN) that attempts to locate the most appropriate temporal moment from each of the modalities, and also on (2) the heterogeneous reasoning network (HRN) that predicts the answer using an attention mechanism on both modalities.

Cascade RPN: Delving into High-Quality Region Proposal Network with Adaptive Convolution

2 code implementations • NeurIPS 2019 • Thang Vu, Hyunjun Jang, Trung X. Pham, Chang D. Yoo

This paper considers an architecture referred to as Cascade Region Proposal Network (Cascade RPN) for improving the region-proposal quality and detection performance by systematically addressing the limitation of the conventional RPN that heuristically defines the anchors and aligns the features to the anchors.

Ranked #172 on Object Detection on COCO test-dev

Gaining Extra Supervision via Multi-task learning for Multi-Modal Video Question Answering

no code implementations • 28 May 2019 • Junyeong Kim, Minuk Ma, Kyung-Su Kim, Sungjin Kim, Chang D. Yoo

This paper proposes a method to gain extra supervision via multi-task learning for multi-modal video question answering.

Edge-labeling Graph Neural Network for Few-shot Learning

4 code implementations • CVPR 2019 • Jongmin Kim, Taesup Kim, Sungwoong Kim, Chang D. Yoo

In this paper, we propose a novel edge-labeling graph neural network (EGNN), which adapts a deep neural network on the edge-labeling graph, for few-shot learning.

Learning to Augment Influential Data

no code implementations • ICLR 2019 • Donghoon Lee, Chang D. Yoo

The differentiable augmentation model and reformulation of the influence function allow the parameters of the augmented model to be directly updated by backpropagation to minimize the validation loss.

Progressive Attention Memory Network for Movie Story Question Answering

no code implementations • CVPR 2019 • Junyeong Kim, Minuk Ma, Kyung-Su Kim, Sungjin Kim, Chang D. Yoo

To overcome these challenges, PAMN involves three main features: (1) progressive attention mechanism that utilizes cues from both question and answer to progressively prune out irrelevant temporal parts in memory, (2) dynamic modality fusion that adaptively determines the contribution of each modality for answering the current question, and (3) belief correction answering scheme that successively corrects the prediction score on each candidate answer.

Ranked #2 on Video Story QA on MovieQA

Pivot Correlational Neural Network for Multimodal Video Categorization

no code implementations • ECCV 2018 • Sunghun Kang, Junyeong Kim, Hyun-Soo Choi, Sungjin Kim, Chang D. Yoo

The architecture is trained to maximize the correlation between the hidden states as well as the predictions of the modal-agnostic pivot stream and modal-specific stream in the network.

Fast and Efficient Image Quality Enhancement via Desubpixel Convolutional Neural Networks

1 code implementation • ECCV 2018 • Thang Vu, Cao V. Nguyen, Trung X. Pham, Tung M. Luu, Chang D. Yoo

This paper considers a convolutional neural network for image quality enhancement referred to as the fast and efficient quality enhancement (FEQE) that can be trained for either image super-resolution or image enhancement to provide accurate yet visually pleasing images on mobile devices by addressing the following three main issues.

A Resizable Mini-batch Gradient Descent based on a Multi-Armed Bandit

no code implementations • ICLR 2019 • Seong Jin Cho, Sunghun Kang, Chang D. Yoo

Determining the appropriate batch size for mini-batch gradient descent is always time consuming as it often relies on grid search.

Meta-Learning via Feature-Label Memory Network

no code implementations • 19 Oct 2017 • Dawit Mureja, Hyunsin Park, Chang D. Yoo

The feature memory is used to store the features of input data samples and the label memory stores their labels.

Early Improving Recurrent Elastic Highway Network

no code implementations • 14 Aug 2017 • Hyunsin Park, Chang D. Yoo

Expanding on the idea of adaptive computation time (ACT), with the use of an elastic gate in the form of a rectified exponentially decreasing function that takes the previous hidden state and input as arguments, the proposed model is able to evaluate the appropriate recurrent depth for each input.

Face Alignment Using Cascade Gaussian Process Regression Trees

no code implementations • CVPR 2015 • Donghoon Lee, Hyunsin Park, Chang D. Yoo

Without increasing prediction time, the prediction of cGPRT can be performed in the same framework as the cascade regression trees (CRT) but with better generalization.

Phoneme Classification using Constrained Variational Gaussian Process Dynamical System

no code implementations • NeurIPS 2012 • Hyunsin Park, Sungrack Yun, Sanghyuk Park, Jongmin Kim, Chang D. Yoo

This paper describes a new acoustic model based on variational Gaussian process dynamical system (VGPDS) for phoneme classification.

Higher-Order Correlation Clustering for Image Segmentation

no code implementations • NeurIPS 2011 • Sungwoong Kim, Sebastian Nowozin, Pushmeet Kohli, Chang D. Yoo

For many of the state-of-the-art computer vision algorithms, image segmentation is an important preprocessing step.