Search Results for author: Ge-Peng Ji

Found 26 papers, 22 papers with code

JL-DCF: Joint Learning and Densely-Cooperative Fusion Framework for RGB-D Salient Object Detection

1 code implementation • CVPR 2020 • Keren Fu, Deng-Ping Fan, Ge-Peng Ji, Qijun Zhao

This paper proposes a novel joint learning and densely-cooperative fusion (JL-DCF) architecture for RGB-D salient object detection.

Ranked #6 on RGB-D Salient Object Detection on NLPR

Inf-Net: Automatic COVID-19 Lung Infection Segmentation from CT Images

3 code implementations • 22 Apr 2020 • Deng-Ping Fan, Tao Zhou, Ge-Peng Ji, Yi Zhou, Geng Chen, Huazhu Fu, Jianbing Shen, Ling Shao

Coronavirus Disease 2019 (COVID-19) spread globally in early 2020, causing the world to face an existential health crisis.

PraNet: Parallel Reverse Attention Network for Polyp Segmentation

4 code implementations • 13 Jun 2020 • Deng-Ping Fan, Ge-Peng Ji, Tao Zhou, Geng Chen, Huazhu Fu, Jianbing Shen, Ling Shao

To address these challenges, we propose a parallel reverse attention network (PraNet) for accurate polyp segmentation in colonoscopy images.

Ranked #7 on Video Polyp Segmentation on SUN-SEG-Easy (Unseen)

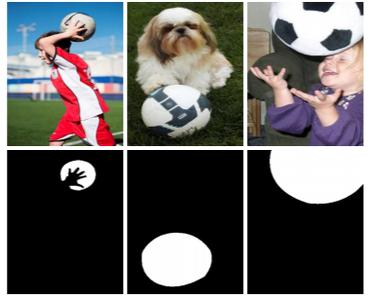

Re-thinking Co-Salient Object Detection

2 code implementations • 7 Jul 2020 • Deng-Ping Fan, Tengpeng Li, Zheng Lin, Ge-Peng Ji, Dingwen Zhang, Ming-Ming Cheng, Huazhu Fu, Jianbing Shen

CoSOD is an emerging and rapidly growing extension of salient object detection (SOD), which aims to detect the co-occurring salient objects in a group of images.

Ranked #7 on Co-Salient Object Detection on CoCA

Siamese Network for RGB-D Salient Object Detection and Beyond

2 code implementations • 26 Aug 2020 • Keren Fu, Deng-Ping Fan, Ge-Peng Ji, Qijun Zhao, Jianbing Shen, Ce Zhu

Inspired by the observation that RGB and depth modalities actually present certain commonality in distinguishing salient objects, a novel joint learning and densely cooperative fusion (JL-DCF) architecture is designed to learn from both RGB and depth inputs through a shared network backbone, known as the Siamese architecture.

Ranked #3 on RGB-D Salient Object Detection on STERE

Light Field Salient Object Detection: A Review and Benchmark

1 code implementation • 10 Oct 2020 • Keren Fu, Yao Jiang, Ge-Peng Ji, Tao Zhou, Qijun Zhao, Deng-Ping Fan

Secondly, we benchmark nine representative light field SOD models together with several cutting-edge RGB-D SOD models on four widely used light field datasets, from which insightful discussions and analyses, including a comparison between light field SOD and RGB-D SOD models, are achieved.

Concealed Object Detection

1 code implementation • 20 Feb 2021 • Deng-Ping Fan, Ge-Peng Ji, Ming-Ming Cheng, Ling Shao

We present the first systematic study on concealed object detection (COD), which aims to identify objects that are "perfectly" embedded in their background.

Ranked #5 on Camouflaged Object Segmentation on CHAMELEON

Camouflaged Object Segmentation • Dichotomous Image Segmentation • +2

Camouflaged Object Segmentation with Distraction Mining

1 code implementation • CVPR 2021 • Haiyang Mei, Ge-Peng Ji, Ziqi Wei, Xin Yang, Xiaopeng Wei, Deng-Ping Fan

In this paper, we strive to embrace challenges towards effective and efficient camouflaged object segmentation (COS). To this end, we develop a bio-inspired framework, termed Positioning and Focus Network (PFNet), which mimics the process of predation in nature.

Ranked #11 on Dichotomous Image Segmentation on DIS-TE3

Camouflaged Object Segmentation • Dichotomous Image Segmentation • +3

Progressively Normalized Self-Attention Network for Video Polyp Segmentation

3 code implementations • 18 May 2021 • Ge-Peng Ji, Yu-Cheng Chou, Deng-Ping Fan, Geng Chen, Huazhu Fu, Debesh Jha, Ling Shao

Existing video polyp segmentation (VPS) models typically employ convolutional neural networks (CNNs) to extract features.

Ranked #6 on Video Polyp Segmentation on SUN-SEG-Easy (Unseen)

Guidance and Teaching Network for Video Salient Object Detection

no code implementations • 21 May 2021 • Yingxia Jiao, Xiao Wang, Yu-Cheng Chou, Shouyuan Yang, Ge-Peng Ji, Rong Zhu, Ge Gao

Owing to the difficulties of mining spatial-temporal cues, the existing approaches for video salient object detection (VSOD) are limited in understanding complex and noisy scenarios, and often fail in inferring prominent objects.

Depth Quality-Inspired Feature Manipulation for Efficient RGB-D Salient Object Detection

1 code implementation • 5 Jul 2021 • Wenbo Zhang, Ge-Peng Ji, Zhuo Wang, Keren Fu, Qijun Zhao

To tackle this dilemma and also inspired by the fact that depth quality is a key factor influencing the accuracy, we propose a novel depth quality-inspired feature manipulation (DQFM) process, which is efficient itself and can serve as a gating mechanism for filtering depth features to greatly boost the accuracy.

Full-Duplex Strategy for Video Object Segmentation

1 code implementation • ICCV 2021 • Ge-Peng Ji, Deng-Ping Fan, Keren Fu, Zhe Wu, Jianbing Shen, Ling Shao

Previous video object segmentation approaches mainly focus on using simplex solutions between appearance and motion, limiting feature collaboration efficiency among and across these two cues.

Ranked #7 on Video Polyp Segmentation on SUN-SEG-Hard (Unseen)

Fast Camouflaged Object Detection via Edge-based Reversible Re-calibration Network

1 code implementation • 5 Nov 2021 • Ge-Peng Ji, Lei Zhu, Mingchen Zhuge, Keren Fu

Camouflaged Object Detection (COD) aims to detect objects with similar patterns (e.g., texture, intensity, colour, etc.) to their surroundings, and has recently attracted growing research interest.

Video Polyp Segmentation: A Deep Learning Perspective

4 code implementations • 27 Mar 2022 • Ge-Peng Ji, Guobao Xiao, Yu-Cheng Chou, Deng-Ping Fan, Kai Zhao, Geng Chen, Luc van Gool

We present the first comprehensive video polyp segmentation (VPS) study in the deep learning era.

Ranked #2 on Video Polyp Segmentation on SUN-SEG-Easy (Unseen)

Deep Gradient Learning for Efficient Camouflaged Object Detection

1 code implementation • 25 May 2022 • Ge-Peng Ji, Deng-Ping Fan, Yu-Cheng Chou, Dengxin Dai, Alexander Liniger, Luc van Gool

This paper introduces DGNet, a novel deep framework that exploits object gradient supervision for camouflaged object detection (COD).

Camouflaged Object Detection via Context-aware Cross-level Fusion

2 code implementations • 27 Jul 2022 • Geng Chen, Si-Jie Liu, Yu-Jia Sun, Ge-Peng Ji, Ya-Feng Wu, Tao Zhou

To address these challenges, we propose a novel Context-aware Cross-level Fusion Network (C2F-Net), which fuses context-aware cross-level features for accurately identifying camouflaged objects.

Depth Quality-Inspired Feature Manipulation for Efficient RGB-D and Video Salient Object Detection

1 code implementation • 8 Aug 2022 • Wenbo Zhang, Keren Fu, Zhuo Wang, Ge-Peng Ji, Qijun Zhao

Inspired by the fact that depth quality is a key factor influencing the accuracy, we propose an efficient depth quality-inspired feature manipulation (DQFM) process, which can dynamically filter depth features according to depth quality.

Masked Vision-Language Transformer in Fashion

1 code implementation • 27 Oct 2022 • Ge-Peng Ji, Mingchen Zhuge, Dehong Gao, Deng-Ping Fan, Christos Sakaridis, Luc van Gool

We present a masked vision-language transformer (MVLT) for fashion-specific multi-modal representation.

SAM Struggles in Concealed Scenes -- Empirical Study on "Segment Anything"

no code implementations • 12 Apr 2023 • Ge-Peng Ji, Deng-Ping Fan, Peng Xu, Ming-Ming Cheng, BoWen Zhou, Luc van Gool

Segmenting anything is a ground-breaking step toward artificial general intelligence, and the Segment Anything Model (SAM) greatly fosters the foundation models for computer vision.

Advances in Deep Concealed Scene Understanding

1 code implementation • 21 Apr 2023 • Deng-Ping Fan, Ge-Peng Ji, Peng Xu, Ming-Ming Cheng, Christos Sakaridis, Luc van Gool

Concealed scene understanding (CSU) is a hot computer vision topic aiming to perceive objects exhibiting camouflage.

Rethinking Polyp Segmentation from an Out-of-Distribution Perspective

1 code implementation • 13 Jun 2023 • Ge-Peng Ji, Jing Zhang, Dylan Campbell, Huan Xiong, Nick Barnes

Unlike existing fully-supervised approaches, we rethink colorectal polyp segmentation from an out-of-distribution perspective with a simple but effective self-supervised learning approach.

How Good is Google Bard's Visual Understanding? An Empirical Study on Open Challenges

1 code implementation • 27 Jul 2023 • Haotong Qin, Ge-Peng Ji, Salman Khan, Deng-Ping Fan, Fahad Shahbaz Khan, Luc van Gool

Google's Bard has emerged as a formidable competitor to OpenAI's ChatGPT in the field of conversational AI.

An objective validation of polyp and instrument segmentation methods in colonoscopy through Medico 2020 polyp segmentation and MedAI 2021 transparency challenges

1 code implementation • 30 Jul 2023 • Debesh Jha, Vanshali Sharma, Debapriya Banik, Debayan Bhattacharya, Kaushiki Roy, Steven A. Hicks, Nikhil Kumar Tomar, Vajira Thambawita, Adrian Krenzer, Ge-Peng Ji, Sahadev Poudel, George Batchkala, Saruar Alam, Awadelrahman M. A. Ahmed, Quoc-Huy Trinh, Zeshan Khan, Tien-Phat Nguyen, Shruti Shrestha, Sabari Nathan, Jeonghwan Gwak, Ritika K. Jha, Zheyuan Zhang, Alexander Schlaefer, Debotosh Bhattacharjee, M. K. Bhuyan, Pradip K. Das, Deng-Ping Fan, Sravanthi Parsa, Sharib Ali, Michael A. Riegler, Pål Halvorsen, Thomas de Lange, Ulas Bagci

Automatic analysis of colonoscopy images has been an active field of research motivated by the importance of early detection of precancerous polyps.

Large Model Based Referring Camouflaged Object Detection

no code implementations • 28 Nov 2023 • Shupeng Cheng, Ge-Peng Ji, Pengda Qin, Deng-Ping Fan, BoWen Zhou, Peng Xu

Our motivation is to make full use of the semantic intelligence and intrinsic knowledge of recent Multimodal Large Language Models (MLLMs) to decompose this complex task in a human-like way.

MAST: Video Polyp Segmentation with a Mixture-Attention Siamese Transformer

1 code implementation • 23 Jan 2024 • Geng Chen, Junqing Yang, Xiaozhou Pu, Ge-Peng Ji, Huan Xiong, Yongsheng Pan, Hengfei Cui, Yong Xia

To the best of our knowledge, our MAST is the first transformer model dedicated to video polyp segmentation.

Effectiveness Assessment of Recent Large Vision-Language Models

no code implementations • 7 Mar 2024 • Yao Jiang, Xinyu Yan, Ge-Peng Ji, Keren Fu, Meijun Sun, Huan Xiong, Deng-Ping Fan, Fahad Shahbaz Khan

The advent of large vision-language models (LVLMs) represents a noteworthy advancement towards the pursuit of artificial general intelligence.