Search Results for author: Radhika Dua

Found 8 papers, 3 papers with code

Reweighting Strategy based on Synthetic Data Identification for Sentence Similarity

1 code implementation • COLING 2022 • Taehee Kim, ChaeHun Park, Jimin Hong, Radhika Dua, Edward Choi, Jaegul Choo

To analyze this, we first train a classifier that identifies machine-written sentences, and observe that the linguistic features of the sentences identified as written by a machine are significantly different from those of human-written sentences.

Automatic Detection of Noisy Electrocardiogram Signals without Explicit Noise Labels

no code implementations • 8 Aug 2022 • Radhika Dua, Jiyoung Lee, Joon-Myoung Kwon, Edward Choi

Automatic deep learning-based examination of ECG signals can lead to inaccurate diagnosis, and manual analysis involves rejection of noisy ECG samples by clinicians, which might cost extra time.

Task Agnostic and Post-hoc Unseen Distribution Detection

no code implementations • 26 Jul 2022 • Radhika Dua, Seongjun Yang, Yixuan Li, Edward Choi

Despite the recent advances in out-of-distribution (OOD) detection, anomaly detection, and uncertainty estimation tasks, there does not exist a task-agnostic and post-hoc approach.

Towards the Practical Utility of Federated Learning in the Medical Domain

1 code implementation • 7 Jul 2022 • Seongjun Yang, Hyeonji Hwang, Daeyoung Kim, Radhika Dua, Jong-Yeup Kim, Eunho Yang, Edward Choi

We evaluate six FL algorithms designed for addressing data heterogeneity among clients, and a hybrid algorithm combining the strengths of two representative FL algorithms.

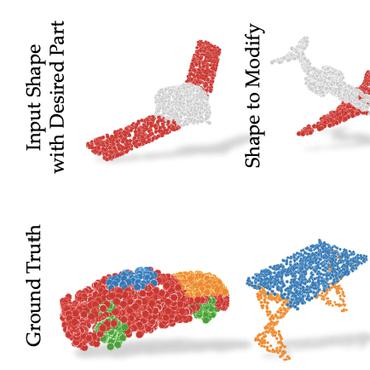

ConDor: Self-Supervised Canonicalization of 3D Pose for Partial Shapes

1 code implementation • CVPR 2022 • Rahul Sajnani, Adrien Poulenard, Jivitesh Jain, Radhika Dua, Leonidas J. Guibas, Srinath Sridhar

ConDor is a self-supervised method that learns to Canonicalize the 3D orientation and position for full and partial 3D point clouds.

Natural Attribute-based Shift Detection

no code implementations • 18 Oct 2021 • Jeonghoon Park, Jimin Hong, Radhika Dua, Daehoon Gwak, Yixuan Li, Jaegul Choo, Edward Choi

Despite the impressive performance of deep networks in vision, language, and healthcare, unpredictable behaviors on samples from a distribution different from the training distribution cause severe problems in deployment.

Beyond VQA: Generating Multi-word Answer and Rationale to Visual Questions

no code implementations • 24 Oct 2020 • Radhika Dua, Sai Srinivas Kancheti, Vineeth N Balasubramanian

To take this a step forward, we introduce a new task: ViQAR (Visual Question Answering and Reasoning), wherein a model must generate the complete answer and a rationale that seeks to justify the generated answer.

VayuAnukulani: Adaptive Memory Networks for Air Pollution Forecasting

no code implementations • 8 Apr 2019 • Divyam Madaan, Radhika Dua, Prerana Mukherjee, Brejesh Lall

Extensive experiments on data sources obtained in Delhi demonstrate that the proposed adaptive attention-based Bidirectional LSTM Network outperforms several baselines for classification and regression models.