Depth Map Super-Resolution

10 papers with code • 0 benchmarks • 2 datasets

Depth map super-resolution is the task of upsampling a low-resolution depth map to a higher resolution while recovering fine structure such as sharp depth edges.
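As a point of reference, the simplest non-learned way to upsample a depth map is nearest-neighbour replication. The sketch below is a minimal illustration, assuming a depth map stored as a list of rows and an integer scale factor; the function name `nearest_upsample` is hypothetical. Its output has blocky, staircase-shaped depth edges, which is one reason learned and guided methods are used instead.

```python
def nearest_upsample(depth, scale):
    """Replicate each low-resolution depth value into a scale x scale block."""
    h, w = len(depth), len(depth[0])
    return [[depth[y // scale][x // scale] for x in range(w * scale)]
            for y in range(h * scale)]

print(nearest_upsample([[1.0, 2.0], [3.0, 4.0]], 2))
# [[1.0, 1.0, 2.0, 2.0], [1.0, 1.0, 2.0, 2.0], [3.0, 3.0, 4.0, 4.0], [3.0, 3.0, 4.0, 4.0]]
```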

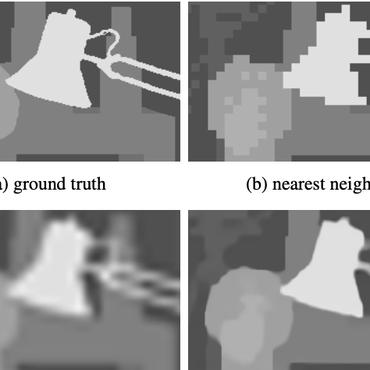

(Image credit: A Joint Intensity and Depth Co-Sparse Analysis Model for Depth Map Super-Resolution)

Most implemented papers

Deformable Kernel Networks for Joint Image Filtering

Previous methods based on convolutional neural networks (CNNs) combine nonlinear activations of spatially invariant kernels to estimate structural details and regress the filtering result.
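To make "spatially invariant kernels" concrete, the 1D sketch below contrasts a fixed kernel, whose weights are the same at every position, with a guidance-dependent kernel, whose weights are recomputed at each position from intensity differences in a guidance signal (a range-only, joint-bilateral-style weight). This is an illustrative assumption, not the deformable kernel network from the paper; the function names and the parameter `sigma_g` are hypothetical.

```python
import math

def fixed_kernel_filter(signal, kernel):
    """Spatially invariant filtering: the same kernel weights at every position.
    Weights are renormalised near the borders, where part of the kernel falls outside."""
    r = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = wsum = 0.0
        for k, wk in enumerate(kernel):
            j = i + k - r
            if 0 <= j < n:
                acc += wk * signal[j]
                wsum += wk
        out.append(acc / wsum)
    return out

def guided_kernel_filter(signal, guide, radius=1, sigma_g=0.1):
    """Spatially variant filtering: weights are recomputed at each position and
    shrink towards zero across intensity edges in the guidance signal."""
    n = len(signal)
    out = []
    for i in range(n):
        acc = wsum = 0.0
        for j in range(max(0, i - radius), min(n, i + radius + 1)):
            w = math.exp(-((guide[i] - guide[j]) ** 2) / (2 * sigma_g ** 2))
            acc += w * signal[j]
            wsum += w
        out.append(acc / wsum)
    return out

depth = [1.0, 1.0, 1.0, 1.0, 5.0, 5.0, 5.0, 5.0]  # step in depth
guide = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0]  # aligned intensity edge
print(fixed_kernel_filter(depth, [1.0, 1.0, 1.0])[3])  # about 2.33: edge smeared
print(guided_kernel_filter(depth, guide)[3])           # about 1.0: edge kept
```

On the step edge, the fixed kernel blends foreground and background depth, while the guidance-dependent weights almost vanish across the aligned intensity edge.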

Discrete Cosine Transform Network for Guided Depth Map Super-Resolution

Guided depth super-resolution (GDSR) is an essential topic in multi-modal image processing. It reconstructs high-resolution (HR) depth maps from low-resolution ones captured under suboptimal conditions, with the help of HR RGB images of the same scene.
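A classical, non-learned instance of guided depth super-resolution is joint bilateral upsampling (Kopf et al., 2007): each HR pixel averages nearby LR depth samples, weighted by spatial distance on the LR grid and by intensity similarity in the HR guidance image. The sketch below is a minimal pure-Python version under stated assumptions (grayscale guidance in [0, 1], an integer scale factor, Gaussian weights). It is not the DCT network from the paper above, and `joint_bilateral_upsample`, `radius`, `sigma_s`, and `sigma_r` are hypothetical names.

```python
import math

def joint_bilateral_upsample(depth_lr, guide_hr, scale, radius=1,
                             sigma_s=1.0, sigma_r=0.1):
    """Joint bilateral upsampling sketch (pure Python, lists of rows).

    depth_lr: h x w low-resolution depth map.
    guide_hr: H x W high-resolution grayscale guidance, assumed in [0, 1].
    scale:    integer upsampling factor, assumed H == h * scale, W == w * scale.
    """
    h, w = len(depth_lr), len(depth_lr[0])
    H, W = len(guide_hr), len(guide_hr[0])
    out = [[0.0] * W for _ in range(H)]
    for y in range(H):
        for x in range(W):
            ly, lx = y / scale, x / scale        # position on the LR grid
            cy = min(int(round(ly)), h - 1)      # nearest LR sample
            cx = min(int(round(lx)), w - 1)
            acc = wsum = 0.0
            for qy in range(max(0, cy - radius), min(h, cy + radius + 1)):
                for qx in range(max(0, cx - radius), min(w, cx + radius + 1)):
                    # Spatial term: distance measured on the LR grid.
                    ds2 = (ly - qy) ** 2 + (lx - qx) ** 2
                    # Range term: guidance at this pixel vs. at the HR pixel aligned with q.
                    gq = guide_hr[min(qy * scale, H - 1)][min(qx * scale, W - 1)]
                    dr2 = (guide_hr[y][x] - gq) ** 2
                    wgt = (math.exp(-ds2 / (2 * sigma_s ** 2))
                           * math.exp(-dr2 / (2 * sigma_r ** 2)))
                    acc += wgt * depth_lr[qy][qx]
                    wsum += wgt
            # Fall back to the nearest LR sample if every weight underflowed to 0.
            out[y][x] = acc / wsum if wsum > 0 else depth_lr[cy][cx]
    return out

depth_lr = [[1.0, 5.0],
            [1.0, 5.0]]
guide_hr = [[0.0, 0.0, 1.0, 1.0]] * 4  # intensity edge between columns 1 and 2
print(joint_bilateral_upsample(depth_lr, guide_hr, scale=2)[0])  # close to [1, 1, 5, 5]
```

The range term is what lets the depth edge follow the guidance edge; with a very large `sigma_r` the method degrades towards plain spatial interpolation.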

Inferring Super-Resolution Depth from a Moving Light-Source Enhanced RGB-D Sensor: A Variational Approach

A novel approach towards depth map super-resolution using multi-view uncalibrated photometric stereo is presented.

High-resolution Depth Maps Imaging via Attention-based Hierarchical Multi-modal Fusion

To effectively extract and combine relevant information from the LR depth map and the HR guidance image, we propose a multi-modal attention-based fusion (MMAF) strategy for hierarchical convolutional layers. It includes a feature enhance block that selects valuable features and a feature recalibration block that unifies the similarity metrics of modalities with different appearance characteristics.

Towards Fast and Accurate Real-World Depth Super-Resolution: Benchmark Dataset and Baseline

Depth maps obtained by commercial depth sensors are typically low-resolution, which makes them difficult to use in many computer vision tasks.

Unpaired Depth Super-Resolution in the Wild

We propose an unpaired learning method for depth super-resolution that uses a learnable degradation model, an enhancement component, and surface normal estimates as features to produce more accurate depth maps.
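Paired training data for depth super-resolution is often synthesized by applying a fixed degradation, such as bicubic or average downsampling, to HR depth maps; a learnable degradation model instead tries to capture how real sensor data is degraded. For contrast, the sketch below shows one simple fixed degradation, assumed here to be block averaging followed by optional Gaussian noise. It is not the paper's learnable model, and `degrade` and its parameters are hypothetical.

```python
import random

def degrade(depth_hr, scale, noise_std=0.0, seed=None):
    """Fixed synthetic degradation (an assumption, not a learned model):
    average each scale x scale block, then add Gaussian noise."""
    rng = random.Random(seed)
    # Trailing rows/columns that do not fill a whole block are dropped.
    h, w = len(depth_hr) // scale, len(depth_hr[0]) // scale
    out = []
    for i in range(h):
        row = []
        for j in range(w):
            block = [depth_hr[i * scale + a][j * scale + b]
                     for a in range(scale) for b in range(scale)]
            noise = rng.gauss(0.0, noise_std) if noise_std > 0 else 0.0
            row.append(sum(block) / len(block) + noise)
        out.append(row)
    return out

hr = [[1.0, 1.0, 5.0, 5.0],
      [1.0, 1.0, 5.0, 5.0]]
print(degrade(hr, 2))  # [[1.0, 5.0]]
```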

Deep Attentional Guided Image Filtering

We propose an attentional kernel learning module that generates two sets of filter kernels, one from the guidance image and one from the target image, and then adaptively combines them by modeling the pixel-wise dependency between the two images.
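One way to picture adaptively combining two kernel sets is a per-pixel convex combination. The 1D sketch below assumes hand-crafted similarity kernels in place of learned ones, and a given per-pixel weight `alpha` in [0, 1] in place of a learned attention map. It illustrates the idea only and is not the paper's attentional kernel learning module; all names are hypothetical.

```python
import math

def similarity_kernel(values, i, radius, sigma):
    """Weights over the window around i, larger where values[j] is close to values[i]."""
    n = len(values)
    return {j: math.exp(-((values[i] - values[j]) ** 2) / (2 * sigma ** 2))
            for j in range(max(0, i - radius), min(n, i + radius + 1))}

def dual_kernel_filter(target, guide, alpha, radius=1, sigma_g=0.1, sigma_t=0.5):
    """Filter `target` with a per-pixel blend of a guidance-derived kernel and a
    target-derived kernel; alpha[i] in [0, 1] stands in for a learned weight."""
    assert len(target) == len(guide) == len(alpha)
    out = []
    for i in range(len(target)):
        k_guide = similarity_kernel(guide, i, radius, sigma_g)
        k_target = similarity_kernel(target, i, radius, sigma_t)
        # Pixel-wise convex combination of the two kernels, then normalisation.
        k = {j: alpha[i] * k_guide[j] + (1.0 - alpha[i]) * k_target[j] for j in k_guide}
        total = sum(k.values())
        out.append(sum(wj * target[j] for j, wj in k.items()) / total)
    return out
```

With `alpha` equal to 1 everywhere this reduces to purely guidance-driven filtering; with 0 it relies on the target alone, and intermediate values trade the two off pixel by pixel.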

Learning Continuous Depth Representation via Geometric Spatial Aggregator

Depth map super-resolution (DSR) is a fundamental task in 3D computer vision.
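A continuous depth representation can, in principle, be queried at arbitrary (possibly fractional) coordinates, which allows non-integer scale factors. As a non-learned baseline for this kind of query, the sketch below bilinearly samples a depth map at continuous coordinates and resamples it to any output size using a half-pixel-centre mapping. It is an assumption-level illustration, not the Geometric Spatial Aggregator; `query_depth` and `upsample_to` are hypothetical names.

```python
import math

def query_depth(depth, y, x):
    """Bilinearly sample a depth map at continuous coordinates (integer
    coordinates are pixel centres); out-of-range queries are clamped."""
    h, w = len(depth), len(depth[0])
    y = min(max(y, 0.0), h - 1.0)
    x = min(max(x, 0.0), w - 1.0)
    y0, x0 = int(math.floor(y)), int(math.floor(x))
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    fy, fx = y - y0, x - x0
    top = depth[y0][x0] * (1 - fx) + depth[y0][x1] * fx
    bot = depth[y1][x0] * (1 - fx) + depth[y1][x1] * fx
    return top * (1 - fy) + bot * fy

def upsample_to(depth, out_h, out_w):
    """Resample to any output size (e.g. a 2.5x scale) via half-pixel mapping."""
    h, w = len(depth), len(depth[0])
    sy, sx = out_h / h, out_w / w
    return [[query_depth(depth, (Y + 0.5) / sy - 0.5, (X + 0.5) / sx - 0.5)
             for X in range(out_w)] for Y in range(out_h)]

print(upsample_to([[0.0, 10.0]], 1, 5))  # a 1 x 2 map resampled at 2.5x along the width
```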

Guided Depth Map Super-resolution: A Survey

Guided depth map super-resolution (GDSR), which aims to reconstruct a high-resolution (HR) depth map from a low-resolution (LR) observation with the help of a paired HR color image, is a longstanding and fundamental problem that has attracted considerable attention from the computer vision and image processing communities.

SGNet: Structure Guided Network via Gradient-Frequency Awareness for Depth Map Super-Resolution

Recent image-guided DSR approaches mainly focus on the spatial domain to rebuild depth structure.
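Gradient information is one cue beyond raw spatial intensities that highlights where depth structure changes. The sketch below computes a finite-difference gradient magnitude of a depth map, assuming central differences in the interior and one-sided differences at the borders. It is only a simple gradient cue, not SGNet's gradient or frequency modules, and `gradient_magnitude` is a hypothetical name.

```python
import math

def gradient_magnitude(depth):
    """Finite-difference gradient magnitude of a 2D depth map (list of rows):
    central differences inside, one-sided differences at the borders."""
    h, w = len(depth), len(depth[0])

    def d_dx(y, x):
        if w == 1:
            return 0.0
        x0, x1 = max(x - 1, 0), min(x + 1, w - 1)
        return (depth[y][x1] - depth[y][x0]) / (x1 - x0)

    def d_dy(y, x):
        if h == 1:
            return 0.0
        y0, y1 = max(y - 1, 0), min(y + 1, h - 1)
        return (depth[y1][x] - depth[y0][x]) / (y1 - y0)

    return [[math.hypot(d_dx(y, x), d_dy(y, x)) for x in range(w)]
            for y in range(h)]

print(gradient_magnitude([[1.0, 1.0, 5.0, 5.0]] * 2))
# [[0.0, 2.0, 2.0, 0.0], [0.0, 2.0, 2.0, 0.0]]
```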

Datasets

Middlebury 2005

RGB-D-D