Facial Action Unit Detection

17 papers with code • 2 benchmarks • 4 datasets

Facial action unit detection is the task of detecting facial action units (AUs), such as lip tightening and cheek raising, from a video of a face.

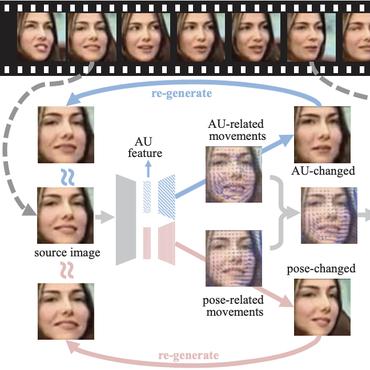

(Image credit: Self-supervised Representation Learning from Videos for Facial Action Unit Detection)

Libraries

Use these libraries to find Facial Action Unit Detection models and implementations

Latest papers with no code

AUD-TGN: Advancing Action Unit Detection with Temporal Convolution and GPT-2 in Wild Audiovisual Contexts

Leveraging the synergy of audio and visual data is essential for understanding human emotions and behaviors, especially in in-the-wild settings.

Leveraging Synthetic Data for Generalizable and Fair Facial Action Unit Detection

Then, we use MSDA to transfer the AU detection knowledge from a real dataset and the synthetic dataset to a target dataset.

Contrastive Learning of Person-independent Representations for Facial Action Unit Detection

We formulate the self-supervised AU representation learning signals in two ways: (1) the AU representation should be frame-wise discriminative within a short video clip; (2) facial frames sampled from different identities that show analogous facial AUs should have consistent AU representations.

Distribution Matching for Multi-Task Learning of Classification Tasks: a Large-Scale Study on Faces & Beyond

Multi-Task Learning (MTL) is a framework in which multiple related tasks are learned jointly and benefit from a shared representation space or parameter transfer.

Boosting Facial Action Unit Detection Through Jointly Learning Facial Landmark Detection and Domain Separation and Reconstruction

Recently, how to introduce large amounts of unlabeled in-the-wild facial images into supervised Facial Action Unit (AU) detection frameworks has become a challenging problem.

Occlusion Aware Student Emotion Recognition based on Facial Action Unit Detection

Given that approximately half of science, technology, engineering, and mathematics (STEM) undergraduate students in U.S. colleges and universities leave by the end of the first year [15], it is crucial to improve the quality of classroom environments.

Fighting over-fitting with quantization for learning deep neural networks on noisy labels

The rising performance of deep neural networks is often empirically attributed to an increase in the available computational power, which allows complex models to be trained upon large amounts of annotated data.

Multi-modal Facial Action Unit Detection with Large Pre-trained Models for the 5th Competition on Affective Behavior Analysis in-the-wild

Facial action unit detection has emerged as an important task within facial expression analysis, aimed at detecting specific pre-defined, objective facial expressions, such as lip tightening and cheek raising.

Local Region Perception and Relationship Learning Combined with Feature Fusion for Facial Action Unit Detection

Human affective behavior analysis plays a vital role in human-computer interaction (HCI) systems.

Self-supervised Facial Action Unit Detection with Region and Relation Learning

Facial action unit (AU) detection is a challenging task due to the scarcity of manual annotations.

DISFA

BP4D

CANDOR Corpus

Thermal Face Database
