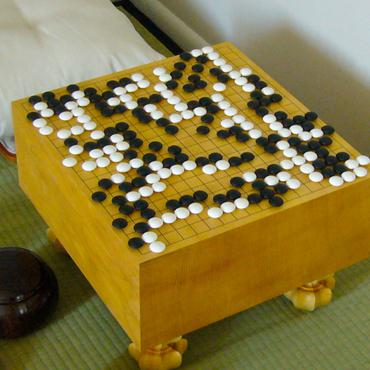

Game of Go

19 papers with code • 1 benchmark • 1 dataset

Go is an abstract strategy board game for two players, in which the aim is to surround more territory than the opponent. The task is to train an agent that plays the game and outperforms other players.

Libraries

Use these libraries to find Game of Go models and implementations

Latest papers with no code

"Task Success" is not Enough: Investigating the Use of Video-Language Models as Behavior Critics for Catching Undesirable Agent Behaviors

Large-scale generative models are shown to be useful for sampling meaningful candidate solutions, yet they often overlook task constraints and user preferences.

Explaining How a Neural Network Play the Go Game and Let People Learn

The AI model has surpassed human players in the game of Go, and it is widely believed that the AI model has encoded new knowledge about the Go game beyond human players.

Vision Transformers for Computer Go

Motivated by the success of transformers in various fields, such as language understanding and image analysis, this investigation explores their application in the context of the game of Go.

AlphaZero Gomoku

In the past few years, AlphaZero's exceptional capability in mastering intricate board games has garnered considerable interest.

The ProfessionAl Go annotation datasEt (PAGE)

To the best of our knowledge, PAGE is the first dataset with extensive annotation in the game of Go.

The cost of passing -- using deep learning AIs to expand our understanding of the ancient game of Go

AI engines utilizing deep learning neural networks provide excellent tools for analyzing traditional board games.

Score vs. Winrate in Score-Based Games: which Reward for Reinforcement Learning?

In the last years, the DeepMind algorithm AlphaZero has become the state of the art to efficiently tackle perfect information two-player zero-sum games with a win/lose outcome.

Spatial State-Action Features for General Games

In many board games and other abstract games, patterns have been used as features that can guide automated game-playing agents.

Probabilistic DAG Search

Exciting contemporary machine learning problems have recently been phrased in the classic formalism of tree search -- most famously, the game of Go.

Batch Monte Carlo Tree Search

The transposition table contains the results of the inferences while the search tree contains the statistics of Monte Carlo Tree Search.
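The split described in this excerpt can be illustrated with a minimal Python sketch. It is not the paper's implementation: `InferenceCache`, `NodeStats`, `fake_network`, and the string position key are all hypothetical names chosen for illustration. The sketch only shows network outputs being cached in one table while visit counts and value sums are kept in a separate search tree.

```python
from dataclasses import dataclass, field


@dataclass
class NodeStats:
    """Search-tree entry: holds MCTS statistics only, no network outputs."""
    visits: int = 0
    value_sum: float = 0.0
    children: dict = field(default_factory=dict)  # move -> child position key

    def mean_value(self) -> float:
        return self.value_sum / self.visits if self.visits else 0.0


class InferenceCache:
    """Transposition table: caches inference results keyed by position."""

    def __init__(self, evaluate):
        # `evaluate` is an assumed callable: position key -> (policy, value)
        self._evaluate = evaluate
        self._table = {}
        self.misses = 0

    def lookup(self, state_key):
        # Run the (expensive) inference only the first time a position is seen.
        if state_key not in self._table:
            self.misses += 1
            self._table[state_key] = self._evaluate(state_key)
        return self._table[state_key]


def fake_network(state_key):
    # Stand-in for a neural network; returns a fixed policy and value.
    return {"pass": 1.0}, 0.5


cache = InferenceCache(fake_network)
tree = {}  # position key -> NodeStats


def visit(state_key):
    """Record one visit, reusing the cached inference result."""
    _policy, value = cache.lookup(state_key)
    stats = tree.setdefault(state_key, NodeStats())
    stats.visits += 1
    stats.value_sum += value
    return stats


for _ in range(3):
    visit("empty-board")
```

Under these assumptions, visiting the same position three times triggers a single inference while the tree entry accumulates three visits. Keeping the two structures apart is what lets positions reached by different move orders share one evaluation.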

PAGE
