Medical Image Registration

77 papers with code • 4 benchmarks • 8 datasets

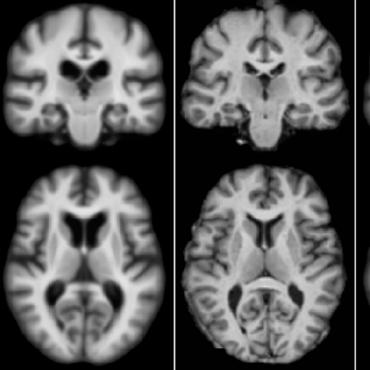

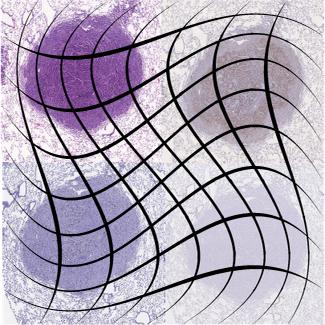

Image registration, also known as image fusion or image matching, is the process of aligning two or more images based on image appearance. Medical Image Registration seeks an optimal spatial transformation that best aligns the underlying anatomical structures. It is used in many clinical applications, such as image guidance, motion tracking, segmentation, dose accumulation, and image reconstruction. Medical Image Registration is a broad topic that can be grouped from various perspectives. From the input image point of view, registration methods can be divided into unimodal, multimodal, inter-patient, and intra-patient (e.g. same- or different-day) registration. From the deformation model point of view, registration methods can be divided into rigid, affine, and deformable methods. From the region of interest (ROI) perspective, registration methods can be grouped according to anatomical sites, such as brain or lung registration. From the image pair dimension perspective, registration methods can be divided into 3D to 3D, 3D to 2D, and 2D to 2D/3D.

Source: Deep Learning in Medical Image Registration: A Review
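To make the idea of "finding an optimal spatial transformation" concrete, here is a minimal sketch of the simplest possible case: a unimodal 2D registration restricted to integer translations, solved by exhaustive search over a sum-of-squared-differences (SSD) cost. The function names (`shift_image`, `ssd`, `register_translation`), the toy images, and the choice of SSD are illustrative assumptions, not taken from the review cited above. Practical methods use richer transformation models (rigid, affine, deformable) and proper optimizers.

```python
from itertools import product


def shift_image(image, dy, dx, fill=0.0):
    """Translate a 2D image (list of rows) by integer offsets (dy, dx)."""
    h, w = len(image), len(image[0])
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy, sx = y - dy, x - dx  # source pixel that lands on (y, x)
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = image[sy][sx]
    return out


def ssd(a, b):
    """Sum of squared intensity differences between two same-sized images."""
    return sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))


def register_translation(fixed, moving, max_shift=2):
    """Return the integer shift (dy, dx) of `moving` that minimizes SSD to `fixed`."""
    candidates = product(range(-max_shift, max_shift + 1), repeat=2)
    return min(candidates, key=lambda s: ssd(fixed, shift_image(moving, s[0], s[1])))


# Toy data (hypothetical): a small two-pixel "structure" on an empty background.
fixed = [[0.0] * 6 for _ in range(6)]
fixed[2][3] = 1.0
fixed[3][3] = 2.0

# Simulate a misaligned acquisition by shifting the fixed image.
moving = shift_image(fixed, 1, -1)

best = register_translation(fixed, moving)
aligned = shift_image(moving, *best)
print("estimated shift:", best, "residual SSD:", ssd(fixed, aligned))
```

The recovered shift is the inverse of the simulated misalignment, so applying it to the moving image brings the two structures back into overlap.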

Libraries

Use these libraries to find Medical Image Registration models and implementations.

Latest papers

ModeTv2: GPU-accelerated Motion Decomposition Transformer for Pairwise Optimization in Medical Image Registration

Deformable image registration plays a crucial role in medical imaging, aiding in disease diagnosis and image-guided interventions.

uniGradICON: A Foundation Model for Medical Image Registration

We therefore propose uniGradICON, a first step toward a foundation model for registration providing 1) great performance across multiple datasets which is not feasible for current learning-based registration methods, 2) zero-shot capabilities for new registration tasks suitable for different acquisitions, anatomical regions, and modalities compared to the training dataset, and 3) a strong initialization for finetuning on out-of-distribution registration tasks.

HyperPredict: Estimating Hyperparameter Effects for Instance-Specific Regularization in Deformable Image Registration

Our approach, which we call HyperPredict, implements a Multi-Layer Perceptron that learns the effect of selecting particular hyperparameters for registering an image pair by predicting the resulting segmentation overlap and measure of deformation smoothness.
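The two kinds of quantities mentioned in this snippet, segmentation overlap and deformation smoothness, can be illustrated with a small sketch. The Dice coefficient is a common overlap measure. The gradient-energy function below is only a stand-in for "a measure of deformation smoothness" and is an assumption here, not necessarily the measure used in the HyperPredict paper. All names and the toy data are hypothetical.

```python
def dice(seg_a, seg_b):
    """Dice overlap of two binary masks given as flat sequences of 0/1."""
    intersection = sum(a * b for a, b in zip(seg_a, seg_b))
    total = sum(seg_a) + sum(seg_b)
    # Two empty masks are treated as perfectly overlapping.
    return 2.0 * intersection / total if total else 1.0


def gradient_energy(field):
    """Mean squared forward difference of a 2D displacement field.

    field[y][x] is a (uy, ux) tuple; lower values mean a smoother field.
    """
    h, w = len(field), len(field[0])
    total, count = 0.0, 0
    for y in range(h):
        for x in range(w):
            # Compare each vector with its lower and right neighbours.
            for ny, nx in ((y + 1, x), (y, x + 1)):
                if ny < h and nx < w:
                    total += sum((p - q) ** 2
                                 for p, q in zip(field[ny][nx], field[y][x]))
                    count += 1
    return total / count if count else 0.0


# Toy inputs (hypothetical): two small masks and two displacement fields.
overlap = dice([1, 1, 0, 0], [1, 0, 0, 0])
constant_field = [[(0.0, 1.0)] * 2 for _ in range(2)]
varying_field = [[(0.0, 0.0), (0.0, 1.0)],
                 [(0.0, 0.0), (0.0, 1.0)]]
print(overlap, gradient_energy(constant_field), gradient_energy(varying_field))
```

A regularization hyperparameter typically trades these two off: weaker regularization tends to raise overlap while making the field less smooth, which is the kind of effect a predictor of both quantities would need to capture.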

Quantifying the Resolution of a Template after Image Registration

In many image processing applications (e.g. computational anatomy) a groupwise registration is performed on a sample of images and a template image is simultaneously generated.

Pyramid Attention Network for Medical Image Registration

The advent of deep-learning-based registration networks has addressed the time-consuming challenge in traditional iterative methods. However, the potential of current registration networks for comprehensively capturing spatial relationships has not been fully explored, leading to inadequate performance in large-deformation image registration. Pure convolutional neural networks (CNNs) neglect feature enhancement, while current Transformer-based networks are susceptible to information redundancy. To alleviate these issues, we propose a pyramid attention network (PAN) for deformable medical image registration. Specifically, the proposed PAN incorporates a dual-stream pyramid encoder with channel-wise attention to boost the feature representation. Moreover, a multi-head local attention Transformer is introduced as the decoder to analyze motion patterns and generate deformation fields. Extensive experiments on two public brain magnetic resonance imaging (MRI) datasets and one abdominal MRI dataset demonstrate that our method achieves favorable registration performance, while outperforming several CNN-based and Transformer-based registration networks. Our code is publicly available at https://github.com/JuliusWang-7/PAN.

Decoder-Only Image Registration

For this, we propose a novel network architecture, termed LessNet in this paper, which contains only a learnable decoder and omits a learnable encoder entirely.

Local Feature Matching Using Deep Learning: A Survey

The objective of this endeavor is to furnish a comprehensive overview of local feature matching methods.

MambaMorph: a Mamba-based Framework for Medical MR-CT Deformable Registration

Capturing voxel-wise spatial correspondence across distinct modalities is crucial for medical image analysis.

SAME++: A Self-supervised Anatomical eMbeddings Enhanced medical image registration framework using stable sampling and regularized transformation

In this work, we introduce a fast and accurate method for unsupervised 3D medical image registration building on top of a Self-supervised Anatomical eMbedding (SAM) algorithm, which is capable of computing dense anatomical correspondences between two images at the voxel level.

Residual Aligner-based Network (RAN): Motion-separable structure for coarse-to-fine discontinuous deformable registration

Deformable image registration, the estimation of the spatial transformation between different images, is an important task in medical imaging.
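In deformable registration the spatial transformation is usually represented as a dense displacement field, one vector per pixel or voxel. The sketch below shows how such a field could be applied to a 2D image by backward warping with bilinear interpolation. It is a generic illustration using hypothetical names (`bilinear`, `warp`) and toy data, not the method of the paper above, and it clamps samples at the image border as a simplifying assumption.

```python
import math


def bilinear(image, y, x):
    """Sample a 2D image at real-valued (y, x), clamping to the border."""
    h, w = len(image), len(image[0])
    y = min(max(y, 0.0), h - 1.0)
    x = min(max(x, 0.0), w - 1.0)
    y0, x0 = int(math.floor(y)), int(math.floor(x))
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    fy, fx = y - y0, x - x0
    top = (1 - fx) * image[y0][x0] + fx * image[y0][x1]
    bottom = (1 - fx) * image[y1][x0] + fx * image[y1][x1]
    return (1 - fy) * top + fy * bottom


def warp(moving, field):
    """Backward warp: warped[y][x] = moving sampled at (y + uy, x + ux)."""
    return [[bilinear(moving, y + uy, x + ux) for x, (uy, ux) in enumerate(row)]
            for y, row in enumerate(field)]


# Toy data (hypothetical): a 3x3 intensity ramp and two displacement fields.
moving = [[0.0, 1.0, 2.0],
          [3.0, 4.0, 5.0],
          [6.0, 7.0, 8.0]]
identity = [[(0.0, 0.0)] * 3 for _ in range(3)]
half_pixel_right = [[(0.0, 0.5)] * 3 for _ in range(3)]

unchanged = warp(moving, identity)
warped = warp(moving, half_pixel_right)
print(warped[1])
```

A registration method, whether iterative or learned, estimates the field; the warp itself is the same fixed resampling step in either case.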

Datasets

PPMI

Learn2Reg

OASIS

IXI

SR-Reg

Full-Spectral Autofluorescence Lifetime Microscopic Images

BreastDICOM4