Multimodal Unsupervised Image-To-Image Translation

14 papers with code • 6 benchmarks • 4 datasets

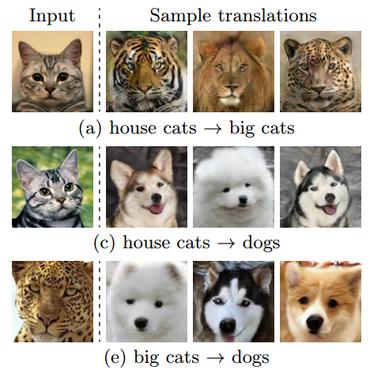

Multimodal unsupervised image-to-image translation is the task of generating multiple, diverse translations in a target domain from a single image in a source domain, without paired training examples.

(Image credit: MUNIT: Multimodal UNsupervised Image-to-image Translation)

Libraries

Use these libraries to find Multimodal Unsupervised Image-To-Image Translation models and implementations

Most implemented papers

Breaking the Cycle - Colleagues Are All You Need

(2) Since it does not need to support the cycle constraint, no irrelevant traces of the input are left on the generated image.

Image-to-image Translation via Hierarchical Style Disentanglement

Recently, image-to-image translation has made significant progress in achieving both multi-label (i.e., translation conditioned on different labels) and multi-style (i.e., generation with diverse styles) tasks.

A Style-aware Discriminator for Controllable Image Translation

Current image-to-image translations do not control the output domain beyond the classes used during training, nor do they interpolate between different domains well, leading to implausible results.

Wavelet-based Unsupervised Label-to-Image Translation

Semantic Image Synthesis (SIS) is a subclass of image-to-image translation where a semantic layout is used to generate a photorealistic image.

CelebA-HQ

AFHQ

CATS

FFHQ-Aging
