Object Detection

3697 papers with code • 84 benchmarks • 256 datasets

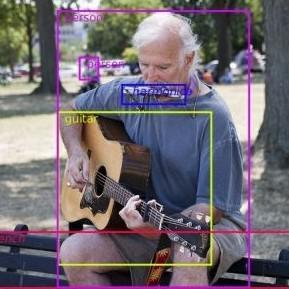

Object Detection is a computer vision task in which the goal is to detect and locate objects of interest in an image or video. The task involves identifying the position and boundaries of objects in an image, and classifying the objects into different categories. It forms a crucial part of vision recognition, alongside image classification and retrieval.

The state-of-the-art methods can be categorized into two main types: one-stage methods and two stage-methods:

-

One-stage methods prioritize inference speed, and example models include YOLO, SSD and RetinaNet.

-

Two-stage methods prioritize detection accuracy, and example models include Faster R-CNN, Mask R-CNN and Cascade R-CNN.

The most popular benchmark is the MSCOCO dataset. Models are typically evaluated according to a Mean Average Precision metric.

( Image credit: Detectron )

Libraries

Use these libraries to find Object Detection models and implementationsDatasets

Subtasks

-

3D Object Detection

3D Object Detection

-

Real-Time Object Detection

Real-Time Object Detection

-

RGB Salient Object Detection

RGB Salient Object Detection

-

Few-Shot Object Detection

Few-Shot Object Detection

-

Few-Shot Object Detection

Few-Shot Object Detection

-

Video Object Detection

Video Object Detection

-

RGB-D Salient Object Detection

RGB-D Salient Object Detection

-

Open Vocabulary Object Detection

Open Vocabulary Object Detection

-

Object Detection In Aerial Images

Object Detection In Aerial Images

-

Weakly Supervised Object Detection

Weakly Supervised Object Detection

-

Small Object Detection

Small Object Detection

-

Robust Object Detection

Robust Object Detection

-

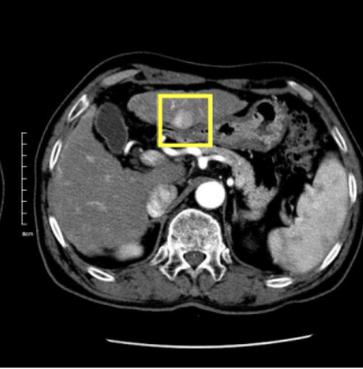

Medical Object Detection

Medical Object Detection

-

Zero-Shot Object Detection

Zero-Shot Object Detection

-

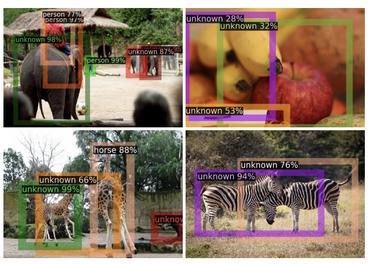

Open World Object Detection

Open World Object Detection

-

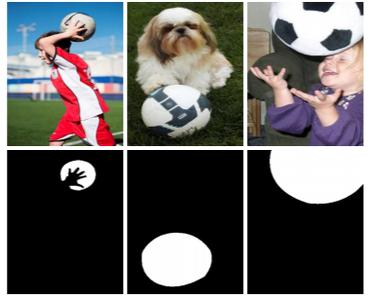

Co-Salient Object Detection

Co-Salient Object Detection

-

Dense Object Detection

Dense Object Detection

-

Object Proposal Generation

Object Proposal Generation

-

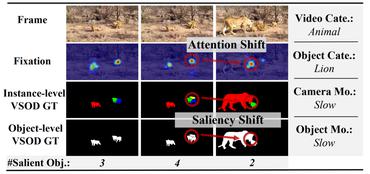

Video Salient Object Detection

Video Salient Object Detection

-

Camouflaged Object Segmentation

Camouflaged Object Segmentation

-

License Plate Detection

License Plate Detection

-

Head Detection

Head Detection

-

Multiview Detection

Multiview Detection

-

3D Object Detection From Monocular Images

3D Object Detection From Monocular Images

-

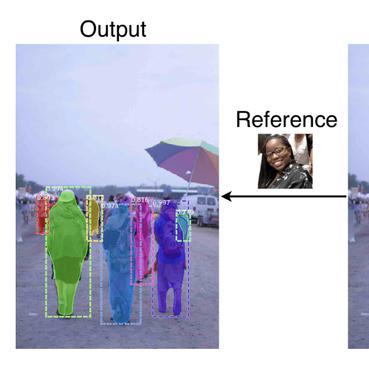

One-Shot Object Detection

One-Shot Object Detection

-

Moving Object Detection

Moving Object Detection

-

Surgical tool detection

Surgical tool detection

-

Described Object Detection

Described Object Detection

-

Body Detection

Body Detection

-

Pupil Detection

Pupil Detection

-

Object Detection In Indoor Scenes

Object Detection In Indoor Scenes

-

Class-agnostic Object Detection

Class-agnostic Object Detection

-

Semantic Part Detection

Semantic Part Detection

-

Object Skeleton Detection

Object Skeleton Detection

-

Fish Detection

Fish Detection

-

Multiple Affordance Detection

Multiple Affordance Detection

-

Weakly Supervised 3D Detection

Weakly Supervised 3D Detection

Latest papers

Multi-resolution Rescored ByteTrack for Video Object Detection on Ultra-low-power Embedded Systems

This paper introduces Multi-Resolution Rescored Byte-Track (MR2-ByteTrack), a novel video object detection framework for ultra-low-power embedded processors.

Learning Feature Inversion for Multi-class Anomaly Detection under General-purpose COCO-AD Benchmark

Moreover, current metrics such as AU-ROC have nearly reached saturation on simple datasets, which prevents a comprehensive evaluation of different methods.

Low-Light Image Enhancement Framework for Improved Object Detection in Fisheye Lens Datasets

This study addresses the evolving challenges in urban traffic monitoring detection systems based on fisheye lens cameras by proposing a framework that improves the efficacy and accuracy of these systems.

Training-free Boost for Open-Vocabulary Object Detection with Confidence Aggregation

Specifically, in the region-proposal stage, proposals that contain novel instances showcase lower objectness scores, since they are treated as background proposals during the training phase.

SFSORT: Scene Features-based Simple Online Real-Time Tracker

This paper introduces SFSORT, the world's fastest multi-object tracking system based on experiments conducted on MOT Challenge datasets.

ConsistencyDet: A Robust Object Detector with a Denoising Paradigm of Consistency Model

In the present study, we introduce a novel framework designed to articulate object detection as a denoising diffusion process, which operates on the perturbed bounding boxes of annotated entities.

Scaling Multi-Camera 3D Object Detection through Weak-to-Strong Eliciting

Finally, for MC3D-Det joint training, the elaborate dataset merge strategy is designed to solve the problem of inconsistent camera numbers and camera parameters.

Retrieval-Augmented Open-Vocabulary Object Detection

Specifically, RALF consists of two modules: Retrieval Augmented Losses (RAL) and Retrieval-Augmented visual Features (RAF).

Better Monocular 3D Detectors with LiDAR from the Past

Accurate 3D object detection is crucial to autonomous driving.

Detecting Every Object from Events

Object detection is critical in autonomous driving, and it is more practical yet challenging to localize objects of unknown categories: an endeavour known as Class-Agnostic Object Detection (CAOD).

MS COCO

MS COCO

KITTI

KITTI

nuScenes

nuScenes

Visual Genome

Visual Genome

LVIS

LVIS

SUN RGB-D

SUN RGB-D

Waymo Open Dataset

Waymo Open Dataset

BDD100K

BDD100K

MVTecAD

MVTecAD

Manga109

Manga109