Semantic Segmentation

5173 papers with code • 125 benchmarks • 311 datasets

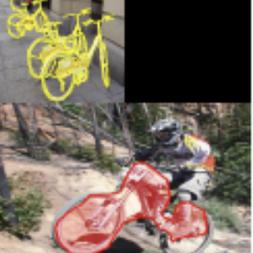

Semantic Segmentation is a computer vision task in which the goal is to assign every pixel in an image to a class (for example, road, person, or sky). The output is a dense, pixel-wise segmentation map with the same spatial size as the input image, in which each pixel carries a class label. Example benchmarks for this task include Cityscapes, PASCAL VOC, and ADE20K. Models are usually evaluated with mean Intersection-over-Union (mean IoU) and pixel accuracy. Mean IoU takes, for each class, the overlap between predicted and ground-truth pixels divided by their union, and then averages over classes. Pixel accuracy is the fraction of pixels labeled correctly.

(Image credit: CSAILVision)
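The following is a minimal, dependency-free sketch of how a dense label map and the two metrics can be computed, assuming the model outputs per-pixel class scores. The helper names `argmax_map`, `confusion_matrix`, `pixel_accuracy`, and `mean_iou` are hypothetical. Using `ignore_index=255` for unlabeled pixels is an assumed convention borrowed from common tooling and is not a benchmark definition.

```python
from typing import List

LabelMap = List[List[int]]


def argmax_map(logits: List[List[List[float]]]) -> LabelMap:
    """Turn H x W x C per-pixel class scores into an H x W label map."""
    return [[max(range(len(scores)), key=scores.__getitem__) for scores in row]
            for row in logits]


def confusion_matrix(pred: LabelMap, target: LabelMap, num_classes: int,
                     ignore_index: int = 255) -> List[List[int]]:
    """cm[t][p] counts pixels whose true class is t and predicted class is p.

    Target pixels equal to ignore_index are skipped (assumed convention).
    """
    cm = [[0] * num_classes for _ in range(num_classes)]
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if t != ignore_index:
                cm[t][p] += 1
    return cm


def pixel_accuracy(cm: List[List[int]]) -> float:
    """Fraction of non-ignored pixels whose predicted class matches the target."""
    correct = sum(cm[c][c] for c in range(len(cm)))
    total = sum(sum(row) for row in cm)
    return correct / total if total else 0.0


def mean_iou(cm: List[List[int]]) -> float:
    """Average over classes of TP / (TP + FP + FN).

    Classes absent from both prediction and target are left out of the mean.
    """
    n = len(cm)
    ious = []
    for c in range(n):
        tp = cm[c][c]
        fp = sum(cm[r][c] for r in range(n)) - tp  # predicted c, truly another class
        fn = sum(cm[c]) - tp                       # truly c, predicted as another class
        union = tp + fp + fn
        if union:
            ious.append(tp / union)
    return sum(ious) / len(ious) if ious else 0.0


if __name__ == "__main__":
    # Toy 2 x 3 image with 3 classes.
    logits = [[[2.0, 0.1, 0.0], [0.3, 1.5, 0.2], [0.0, 2.2, 0.1]],
              [[0.1, 0.9, 0.0], [0.0, 0.2, 3.0], [1.0, 0.0, 0.5]]]
    target = [[0, 0, 1],
              [1, 2, 2]]
    pred = argmax_map(logits)            # [[0, 1, 1], [1, 2, 0]]
    cm = confusion_matrix(pred, target, num_classes=3)
    print(round(pixel_accuracy(cm), 4))  # 0.6667
    print(round(mean_iou(cm), 4))        # 0.5
```

Benchmark evaluation code typically accumulates the confusion matrix over the whole dataset before computing per-class IoU, rather than averaging per-image scores. The accumulating design above supports that usage.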

Subtasks

- Tumor Segmentation
- Panoptic Segmentation
- 3D Semantic Segmentation
- Weakly-Supervised Semantic Segmentation
- Scene Segmentation
- Semi-Supervised Semantic Segmentation
- Real-Time Semantic Segmentation
- 3D Part Segmentation
- Unsupervised Semantic Segmentation
- Road Segmentation
- One-Shot Segmentation
- Bird's-Eye View Semantic Segmentation
- Crack Segmentation
- UNET Segmentation
- Universal Segmentation
- Class-Incremental Semantic Segmentation
- Polyp Segmentation
- Vision-Language Segmentation
- 4D Spatio Temporal Semantic Segmentation
- Histopathological Segmentation
- Attentive segmentation networks
- Text-Line Extraction
- Aerial Video Semantic Segmentation
- Amodal Panoptic Segmentation
- Robust BEV Map Segmentation

Most implemented papers

A ConvNet for the 2020s

The "Roaring 20s" of visual recognition began with the introduction of Vision Transformers (ViTs), which quickly superseded ConvNets as the state-of-the-art image classification model.

Deep High-Resolution Representation Learning for Visual Recognition

High-resolution representations are essential for position-sensitive vision problems, such as human pose estimation, semantic segmentation, and object detection.

Fully Convolutional Networks for Semantic Segmentation

Convolutional networks are powerful visual models that yield hierarchies of features.

High-Resolution Representations for Labeling Pixels and Regions

The proposed approach achieves superior results to existing single-model networks on COCO object detection.

Deformable Convolutional Networks

Convolutional neural networks (CNNs) are inherently limited in modeling geometric transformations due to the fixed geometric structures in their building modules.

Attention U-Net: Learning Where to Look for the Pancreas

We propose a novel attention gate (AG) model for medical imaging that automatically learns to focus on target structures of varying shapes and sizes.
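The attention gate in Attention U-Net is usually described as additive attention. Skip-connection features x and a coarser gating signal g are each projected linearly (1×1 convolutions in the paper). The projections are summed, passed through a ReLU, and projected to a single channel. A sigmoid then turns that channel into a per-pixel coefficient α that rescales x. The sketch below operates on flattened per-pixel feature vectors and assumes g has already been resampled to x's resolution. The function and parameter names are hypothetical, and learned weights are replaced by hand-picked toy values.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]  # one row per output channel


def matvec(w: Matrix, v: Vector) -> Vector:
    """Per-pixel linear map; equivalent to a 1x1 convolution without bias."""
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def attention_gate(x: List[Vector], g: List[Vector], w_x: Matrix, w_g: Matrix,
                   b_g: Vector, psi: Vector, b_psi: float) -> Tuple[List[Vector], List[float]]:
    """Additive attention gate over flattened pixels.

    x: skip-connection features, one vector per pixel.
    g: gating features for the same pixels (assumed already on x's grid).
    Returns the gated features alpha * x and the per-pixel coefficients alpha.
    """
    gated, alphas = [], []
    for x_i, g_i in zip(x, g):
        # Project both inputs into a shared intermediate space, add, then ReLU.
        hidden = [max(0.0, a + b + c)
                  for a, b, c in zip(matvec(w_x, x_i), matvec(w_g, g_i), b_g)]
        # Collapse to one attention logit and squash it into (0, 1).
        alpha = sigmoid(sum(p * h for p, h in zip(psi, hidden)) + b_psi)
        alphas.append(alpha)
        gated.append([alpha * v for v in x_i])  # rescale every channel of x_i
    return gated, alphas


# Toy example: two pixels, 2-channel skip features, 1-channel gating signal.
gated, alphas = attention_gate(
    x=[[1.0, 0.0], [0.0, 1.0]],
    g=[[1.0], [-1.0]],
    w_x=[[1.0, 0.0]], w_g=[[1.0]], b_g=[0.0],
    psi=[1.0], b_psi=0.0,
)
```

With these toy weights, the first pixel receives a positive contribution from both projections and keeps most of its features (α = σ(2) ≈ 0.88). The second pixel's hidden activation is clipped to zero by the ReLU, so α = σ(0) = 0.5 and its features are halved.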

YOLACT++: Better Real-time Instance Segmentation

YOLACT++ generates a set of prototype masks and predicts per-instance mask coefficients, then produces instance masks by linearly combining the prototypes with the mask coefficients.
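In YOLACT and YOLACT++, the network predicts k prototype masks for the whole image and a k-dimensional coefficient vector for each detected instance. Each instance mask is the sigmoid of the per-pixel linear combination of the prototypes with that instance's coefficients, M = σ(P Cᵀ). The pure-Python sketch below assumes the prototypes are given as an H × W × k nested list. It leaves out the learned heads that produce P and C as well as the step that crops each mask to its predicted box. `assemble_masks` and `binarize` are hypothetical names.

```python
import math
from typing import List

Prototypes = List[List[List[float]]]  # H x W x k
Mask = List[List[float]]              # H x W


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def assemble_masks(prototypes: Prototypes, coeffs: List[List[float]]) -> List[Mask]:
    """One soft mask per instance: sigmoid of sum_j P[y][x][j] * c[j]."""
    masks = []
    for c in coeffs:  # one coefficient vector of length k per instance
        masks.append([[sigmoid(sum(p * w for p, w in zip(pixel, c))) for pixel in row]
                      for row in prototypes])
    return masks


def binarize(mask: Mask, threshold: float = 0.5) -> List[List[int]]:
    """Threshold a soft mask into a 0/1 mask."""
    return [[1 if v > threshold else 0 for v in row] for row in mask]


# Toy example: a 1 x 2 image with k = 2 prototypes and two instances
# whose coefficients select opposite prototypes.
prototypes = [[[2.0, 0.0], [0.0, 2.0]]]
coeffs = [[1.0, -1.0], [-1.0, 1.0]]
masks = [binarize(m) for m in assemble_masks(prototypes, coeffs)]
# masks == [[[1, 0]], [[0, 1]]]
```

Because the prototypes are shared across all instances, each additional instance costs only a k-dimensional coefficient vector plus one weighted sum per pixel.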

Microsoft COCO: Common Objects in Context

We present a new dataset with the goal of advancing the state-of-the-art in object recognition by placing the question of object recognition in the context of the broader question of scene understanding.

ResNeSt: Split-Attention Networks

It is well known that feature-map attention and multi-path representation are important for visual recognition.

UNet++: A Nested U-Net Architecture for Medical Image Segmentation

Implementation of different kinds of Unet models for image segmentation: Unet, RCNN-Unet, Attention Unet, RCNN-Attention Unet, Nested Unet.

Datasets

- MS COCO
- Cityscapes
- KITTI
- ShapeNet
- ScanNet
- ADE20K
- NYUv2
- DAVIS
- SYNTHIA
- EuroSAT