Semantic Segmentation

5164 papers with code • 125 benchmarks • 313 datasets

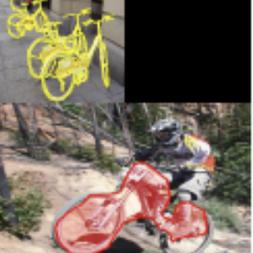

Semantic Segmentation is a computer vision task whose goal is to assign every pixel in an image to a semantic class (for example road, person or sky), producing a dense, pixel-wise segmentation map. Common benchmarks for this task include Cityscapes, PASCAL VOC and ADE20K. Models are usually evaluated with mean Intersection-over-Union (mIoU) and pixel accuracy.

(Image credit: CSAILVision)
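The per-pixel formulation and the two metrics can be sketched in a few lines. This is a minimal, dependency-free Python illustration under stated assumptions, not the evaluation code of any particular benchmark. It assumes a model outputs one score per class at every pixel, takes the per-pixel argmax as the label map, and derives both metrics from a confusion matrix. For class c, IoU is TP / (TP + FP + FN). Mean IoU averages this over classes, and pixel accuracy is the fraction of labeled pixels predicted correctly. The helper names (`argmax_labels`, `confusion_matrix`, `pixel_accuracy`, `mean_iou`) are hypothetical. So are two choices that benchmarks handle differently: `ignore_index=255` for unlabeled pixels, and skipping classes that are absent from both prediction and ground truth.

```python
from typing import List, Sequence

LabelMap = List[List[int]]
Matrix = List[List[int]]


def argmax_labels(logits: Sequence[Sequence[Sequence[float]]]) -> LabelMap:
    """Convert per-pixel class scores of shape [H][W][C] into an [H][W] label map."""
    # Each pixel takes the index of its highest-scoring class (ties go to the lowest index).
    return [[max(range(len(scores)), key=scores.__getitem__) for scores in row]
            for row in logits]


def confusion_matrix(pred: LabelMap, target: LabelMap, num_classes: int,
                     ignore_index: int = 255) -> Matrix:
    """cm[t][p] counts pixels whose ground truth is t and whose prediction is p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if t == ignore_index:
                continue  # assumption: unlabeled pixels count toward neither metric
            cm[t][p] += 1
    return cm


def pixel_accuracy(cm: Matrix) -> float:
    """Fraction of labeled pixels whose predicted class matches the ground truth."""
    correct = sum(cm[c][c] for c in range(len(cm)))
    total = sum(sum(row) for row in cm)
    return correct / total if total else 0.0


def mean_iou(cm: Matrix) -> float:
    """Average over classes of TP / (TP + FP + FN)."""
    n = len(cm)
    ious = []
    for c in range(n):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                        # class-c pixels predicted as another class
        fp = sum(cm[t][c] for t in range(n)) - tp   # other pixels predicted as class c
        union = tp + fp + fn
        if union > 0:  # assumption: skip classes absent from both prediction and ground truth
            ious.append(tp / union)
    return sum(ious) / len(ious) if ious else 0.0


if __name__ == "__main__":
    # Two pixels, three classes: the label map is the per-pixel argmax of the scores.
    print(argmax_labels([[[0.1, 2.0, -1.0], [3.0, 0.0, 0.5]]]))  # [[1, 0]]

    # A 3x3 image with classes {0, 1, 2}; 255 marks an unlabeled pixel.
    target = [[0, 0, 1],
              [0, 1, 1],
              [2, 2, 255]]
    pred = [[0, 1, 1],
            [0, 1, 1],
            [2, 0, 2]]
    cm = confusion_matrix(pred, target, num_classes=3)
    print(pixel_accuracy(cm))      # 0.75
    print(round(mean_iou(cm), 4))  # 0.5833
```

Benchmark mIoU is commonly computed by accumulating one confusion matrix over the whole evaluation set and only then taking per-class IoU, rather than by averaging per-image scores. Summing the matrices returned by `confusion_matrix` for each image before calling `mean_iou` follows that convention.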

Libraries

Use these libraries to find Semantic Segmentation models and implementations.

Subtasks

- Tumor Segmentation
- Panoptic Segmentation
- 3D Semantic Segmentation
- Weakly-Supervised Semantic Segmentation
- Scene Segmentation
- Semi-Supervised Semantic Segmentation
- Real-Time Semantic Segmentation
- 3D Part Segmentation
- Unsupervised Semantic Segmentation
- Road Segmentation
- One-Shot Segmentation
- Bird's-Eye View Semantic Segmentation
- Crack Segmentation
- UNET Segmentation
- Universal Segmentation
- Class-Incremental Semantic Segmentation
- Polyp Segmentation
- Vision-Language Segmentation
- 4D Spatio Temporal Semantic Segmentation
- Histopathological Segmentation
- Attentive segmentation networks
- Text-Line Extraction
- Aerial Video Semantic Segmentation
- Amodal Panoptic Segmentation
- Robust BEV Map Segmentation

Latest papers

ViM-UNet: Vision Mamba for Biomedical Segmentation

Here, we introduce ViM-UNet, a novel segmentation architecture based on Vision Mamba (ViM), and compare it to UNet and UNETR on two challenging microscopy instance segmentation tasks.

OpenTrench3D: A Photogrammetric 3D Point Cloud Dataset for Semantic Segmentation of Underground Utilities

We present OpenTrench3D, a novel and comprehensive 3D Semantic Segmentation point cloud dataset, designed to advance research and development in underground utility surveying and mapping.

Multi-view Aggregation Network for Dichotomous Image Segmentation

Dichotomous Image Segmentation (DIS) has recently emerged as a task focused on high-precision object segmentation from high-resolution natural images.

Rethinking the Spatial Inconsistency in Classifier-Free Diffusion Guidance

Classifier-Free Guidance (CFG) has been widely used in text-to-image diffusion models, where the CFG scale is introduced to control the strength of text guidance on the whole image space.

LHU-Net: A Light Hybrid U-Net for Cost-Efficient, High-Performance Volumetric Medical Image Segmentation

As a result of the rise of Transformer architectures in medical image analysis, specifically in the domain of medical image segmentation, a multitude of hybrid models have been created that merge the advantages of Convolutional Neural Networks (CNNs) and Transformers.

FPL+: Filtered Pseudo Label-based Unsupervised Cross-Modality Adaptation for 3D Medical Image Segmentation

Adapting a medical image segmentation model to a new domain is important for improving its cross-domain transferability. Because annotation is expensive, Unsupervised Domain Adaptation (UDA), which needs only unlabeled images from the new domain for the adaptation, is an appealing approach.

Frequency Decomposition-Driven Unsupervised Domain Adaptation for Remote Sensing Image Semantic Segmentation

Cross-domain semantic segmentation of remote sensing (RS) imagery based on unsupervised domain adaptation (UDA) techniques has significantly advanced deep-learning applications in the geosciences.

Sigma: Siamese Mamba Network for Multi-Modal Semantic Segmentation

In this work, we introduce Sigma, a Siamese Mamba network for multi-modal semantic segmentation, utilizing the Selective Structured State Space Model, Mamba.

PARIS3D: Reasoning-based 3D Part Segmentation Using Large Multimodal Model

We introduce a novel segmentation task known as reasoning part segmentation for 3D objects, aiming to output a segmentation mask based on complex and implicit textual queries about specific parts of a 3D object.

HAPNet: Toward Superior RGB-Thermal Scene Parsing via Hybrid, Asymmetric, and Progressive Heterogeneous Feature Fusion

In this study, we take one step toward this new research area by exploring a feasible strategy to fully exploit vision foundation model (VFM) features for RGB-thermal scene parsing.

Datasets

- MS COCO
- Cityscapes
- KITTI
- ShapeNet
- ScanNet
- ADE20K
- NYUv2
- DAVIS
- SYNTHIA
- EuroSAT