Unsupervised Object Segmentation

21 papers with code • 9 benchmarks • 11 datasets

Datasets

Most implemented papers

Multi-Object Representation Learning with Iterative Variational Inference

Human perception is structured around objects which form the basis for our higher-level cognition and impressive systematic generalization abilities.

MONet: Unsupervised Scene Decomposition and Representation

The ability to decompose scenes in terms of abstract building blocks is crucial for general intelligence.

GENESIS: Generative Scene Inference and Sampling with Object-Centric Latent Representations

Generative latent-variable models are emerging as promising tools in robotics and reinforcement learning.

GENESIS-V2: Inferring Unordered Object Representations without Iterative Refinement

Object representations are often inferred using RNNs, which do not scale well to large images, or using iterative refinement, which avoids imposing an unnatural ordering on objects in an image but requires the a priori initialisation of a fixed number of object representations.

Unsupervised Image Decomposition with Phase-Correlation Networks

The ability to decompose scenes into their object components is a desired property for autonomous agents, allowing them to reason and act in their surroundings.

Object Discovery with a Copy-Pasting GAN

We tackle the problem of object discovery, where objects are segmented for a given input image, and the system is trained without using any direct supervision whatsoever.

Unsupervised Object Segmentation by Redrawing

Object segmentation is a crucial problem that is usually solved by using supervised learning approaches over very large datasets composed of both images and corresponding object masks.

Emergence of Object Segmentation in Perturbed Generative Models

To force the generator to learn a representation where the foreground layer corresponds to an object, we perturb the output of the generative model by introducing a random shift of both the foreground image and mask relative to the background.
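As a rough illustration of the perturbation described above, the sketch below shifts a foreground layer and its mask by the same random offset and composites them over a fixed background. It is not the paper's implementation: the function names, the single-channel nested-list image format, the zero fill at the borders, and the `max_shift` parameter are all assumptions made for this example.

```python
import random


def shift_2d(grid, dy, dx, fill=0.0):
    """Translate a 2D grid by (dy, dx); cells shifted in from outside get `fill`."""
    h, w = len(grid), len(grid[0])
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w:
                out[ny][nx] = grid[y][x]
    return out


def composite_with_random_shift(foreground, mask, background, max_shift, rng):
    """Shift foreground and mask together, then alpha-composite over the background.

    All inputs are hypothetical single-channel images of equal size, with mask
    values in [0, 1]. Returns the composite and the sampled (dy, dx) offset.
    """
    dy = rng.randint(-max_shift, max_shift)  # inclusive on both ends
    dx = rng.randint(-max_shift, max_shift)
    fg = shift_2d(foreground, dy, dx)
    m = shift_2d(mask, dy, dx)
    h, w = len(background), len(background[0])
    image = [
        [m[y][x] * fg[y][x] + (1.0 - m[y][x]) * background[y][x] for x in range(w)]
        for y in range(h)
    ]
    return image, (dy, dx)


if __name__ == "__main__":
    rng = random.Random(0)
    fg = [[1.0] * 4 for _ in range(4)]   # bright foreground layer
    bg = [[0.0] * 4 for _ in range(4)]   # dark background layer
    mask = [[0.0] * 4 for _ in range(4)]
    mask[1][1] = 1.0                     # a one-pixel "object"
    image, offset = composite_with_random_shift(fg, mask, bg, max_shift=1, rng=rng)
    print(offset)
    for row in image:
        print(row)
```

Because the foreground and mask move together while the background stays put, a composite only remains plausible when the mask covers a region that can be relocated independently, which is the intuition the snippet above describes.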

Object Segmentation Without Labels with Large-Scale Generative Models

The recent rise of unsupervised and self-supervised learning has dramatically reduced the dependency on labeled data, providing effective image representations for transfer to downstream vision tasks.

The Emergence of Objectness: Learning Zero-Shot Segmentation from Videos

Our model starts with two separate pathways: an appearance pathway that outputs feature-based region segmentation for a single image, and a motion pathway that outputs motion features for a pair of images.

DUTS

DAVIS 2016

FBMS

SegTrack-v2

ECSSD

Multi-dSprites

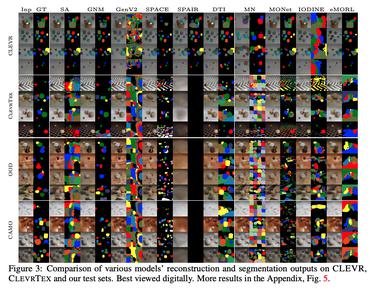

ClevrTex

FBMS-59
