Zero-Shot Object Detection

26 papers with code • 7 benchmarks • 6 datasets

Zero-shot object detection (ZSD) is the object detection task in which no visual training data is available for some of the target object classes.
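The definition can be made concrete with a data-split sketch. It assumes a hypothetical annotation format (a dict mapping image IDs to lists of `(class_name, box)` pairs) and one common protocol: any training image that contains an unseen-class object is dropped entirely, so no unseen pixels leak into training. Real benchmarks define their own seen/unseen splits.

```python
from typing import Dict, List, Set, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)
Objects = List[Tuple[str, Box]]          # hypothetical per-image annotation format


def make_zsd_training_set(images: Dict[str, Objects], unseen: Set[str]) -> Dict[str, Objects]:
    """Keep only images with no unseen-class objects, so the detector
    never receives visual examples of unseen classes during training."""
    train = {}
    for image_id, objects in images.items():
        if any(cls in unseen for cls, _ in objects):
            continue  # removing just the box would still leave unseen pixels in the image
        train[image_id] = objects
    return train


images = {
    "img1": [("dog", (0, 0, 10, 10))],
    "img2": [("zebra", (5, 5, 20, 20)), ("dog", (0, 0, 4, 4))],
}
train_set = make_zsd_training_set(images, unseen={"zebra"})
print(sorted(train_set))  # ['img1']
```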

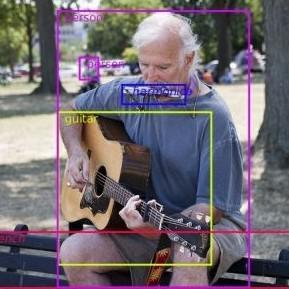

( Image credit: Zero-Shot Object Detection: Learning to Simultaneously Recognize and Localize Novel Concepts )

Most implemented papers

Zero-Shot Object Detection: Learning to Simultaneously Recognize and Localize Novel Concepts

We hypothesize that this setting (zero-shot recognition of a single dominant unseen object category in a test image) is ill-suited for real-world applications where unseen objects appear only as part of a complex scene, warranting both the 'recognition' and 'localization' of an unseen category.
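Requiring both recognition and localization means a detection of an unseen object only counts when the label is right and the box overlaps the ground truth well enough. A minimal sketch, assuming boxes given as `(x1, y1, x2, y2)` and the conventional IoU threshold of 0.5 (a PASCAL VOC-style choice assumed here, not stated in the source):

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def is_correct_detection(pred_label, pred_box, gt_label, gt_box, iou_thresh=0.5):
    recognized = pred_label == gt_label              # recognition: right (unseen) class
    localized = iou(pred_box, gt_box) >= iou_thresh  # localization: box overlaps enough
    return recognized and localized


print(is_correct_detection("zebra", (0, 0, 10, 10), "zebra", (1, 0, 10, 10)))  # True (IoU 0.9)
print(is_correct_detection("horse", (0, 0, 10, 10), "zebra", (1, 0, 10, 10)))  # False (wrong class)
print(is_correct_detection("zebra", (0, 0, 10, 10), "zebra", (5, 0, 15, 10)))  # False (IoU 1/3)
```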

GTNet: Generative Transfer Network for Zero-Shot Object Detection

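In GTNet's IoU-aware GAN (IoUGAN) feature synthesizer, the Foreground and Background Feature Generating Units (FFU and BFU) add the IoU variance to the output of the Class Feature Generating Unit (CFU), yielding class-specific foreground and background features, respectively.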
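The general idea behind feature-synthesis approaches such as GTNet, generating features for unseen classes from their semantic vectors and training a new classifier on them, can be illustrated with a heavily simplified sketch. This is not IoUGAN: the "generator" is a hypothetical fixed linear projection plus Gaussian noise (a stand-in for CFU's intra-class variance), the IoU-dependent FFU/BFU step is not modeled, and the classification layer is replaced by nearest-centroid matching.

```python
import random


def generate_features(semantic_vec, weights, n, noise_std=0.1, seed=0):
    """Stand-in for a trained conditional generator: project the class semantic
    vector with hypothetical weights, then add Gaussian noise for intra-class variance."""
    rng = random.Random(seed)
    base = [sum(w * s for w, s in zip(row, semantic_vec)) for row in weights]
    return [[b + rng.gauss(0.0, noise_std) for b in base] for _ in range(n)]


def fit_centroids(features_by_class):
    """Simplified 'new classification layer': the mean synthetic feature per class."""
    return {
        cls: [sum(dim_vals) / len(feats) for dim_vals in zip(*feats)]
        for cls, feats in features_by_class.items()
    }


def classify(feature, centroids):
    """Assign the class whose centroid is nearest in squared Euclidean distance."""
    return min(centroids, key=lambda c: sum((x - y) ** 2 for x, y in zip(feature, centroids[c])))


weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]                 # hypothetical 2-D -> 3-D map
unseen_semantics = {"zebra": [1.0, 0.0], "okapi": [0.0, 1.0]}  # toy semantic vectors
synthetic = {
    cls: generate_features(vec, weights, n=50, seed=i)
    for i, (cls, vec) in enumerate(unseen_semantics.items())
}
centroids = fit_centroids(synthetic)
print(classify([1.0, 0.0, 1.0], centroids))  # zebra
```

The point of the design is that the unseen-class classifier is trained without any real unseen images; only semantic vectors and the generator are needed.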

Background Learnable Cascade for Zero-Shot Object Detection

The major contributions of BLC are as follows: (i) we propose a multi-stage cascade structure named Cascade Semantic R-CNN to progressively refine the alignment between visual and semantic information in ZSD; (ii) we develop a semantic information flow structure and add it directly between the stages of Cascade Semantic R-CNN to further improve semantic feature learning; (iii) we propose the background learnable region proposal network (BLRPN) to learn an appropriate word vector for the background class and use this learned vector in Cascade Semantic R-CNN; this design is what makes BLC "Background Learnable" and reduces the confusion between background and unseen classes.
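BLC's third contribution learns a word vector for the background class while the class word vectors stay fixed. The sketch shows only that idea in isolation, assuming region features are already projected into the word-vector space and that classification is a softmax over dot products; it is not BLRPN itself, and `train_background_vector`, the learning rate, and the toy vectors are hypothetical.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def train_background_vector(class_vecs, regions, dim, lr=0.5, epochs=100):
    """Learn only the background word vector; the class word vectors stay fixed.

    `regions` holds (feature, label) pairs with features already projected into
    word-vector space; label None marks a background region. Softmax index 0
    is the background class.
    """
    w_bg = [0.0] * dim
    names = list(class_vecs)
    for _ in range(epochs):
        for feat, label in regions:
            logits = [dot(w_bg, feat)] + [dot(class_vecs[n], feat) for n in names]
            p_bg = softmax(logits)[0]
            target_bg = 1.0 if label is None else 0.0
            # Gradient of cross-entropy w.r.t. w_bg is (p_bg - target_bg) * feat.
            w_bg = [w - lr * (p_bg - target_bg) * f for w, f in zip(w_bg, feat)]
    return w_bg


def predict(feat, class_vecs, w_bg):
    names = ["background"] + list(class_vecs)
    probs = softmax([dot(w_bg, feat)] + [dot(class_vecs[n], feat) for n in class_vecs])
    return names[max(range(len(probs)), key=probs.__getitem__)]


class_vecs = {"dog": [1.0, 0.0, 0.0], "cat": [0.0, 1.0, 0.0]}  # toy fixed word vectors
regions = [([1.0, 0.0, 0.0], "dog"), ([0.0, 1.0, 0.0], "cat"), ([0.0, 0.0, 1.0], None)]
w_bg = train_background_vector(class_vecs, regions, dim=3)
print(predict([0.0, 0.0, 1.0], class_vecs, w_bg))  # background
print(predict([1.0, 0.0, 0.0], class_vecs, w_bg))  # dog
```

Compared with a fixed background vector, a learned one can move away from the directions occupied by class word vectors, which is the kind of background/class separation the contribution targets.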

Zero-shot Object Detection Through Vision-Language Embedding Alignment

Recent approaches have shown that training deep neural networks directly on large-scale image-text pair collections enables zero-shot transfer on various recognition tasks.
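Zero-shot transfer from image-text models typically works by comparing a visual embedding with text embeddings of class-name prompts. The sketch applies that to a single detected region, assuming the embeddings already come from a CLIP-like model (toy vectors stand in here) and a temperature of 0.01 (an assumption, equivalent to a logit scale of 100):

```python
import math


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def zero_shot_region_scores(region_emb, text_embs, temperature=0.01):
    """CLIP-style scoring of one region: cosine similarity to each class-prompt
    text embedding, divided by a temperature, then softmax over classes."""
    r = normalize(region_emb)
    names = list(text_embs)
    logits = [sum(a * b for a, b in zip(r, normalize(text_embs[n]))) / temperature for n in names]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return {n: e / total for n, e in zip(names, exps)}


# Toy vectors standing in for text embeddings of prompts such as "a photo of a zebra".
text_embs = {"zebra": [0.9, 0.1, 0.0], "horse": [0.1, 0.9, 0.2]}
scores = zero_shot_region_scores([1.0, 0.2, 0.0], text_embs)
print(max(scores, key=scores.get))  # zebra
```

Because classes are just text embeddings, an unseen category can be added by embedding its name, without retraining the visual side.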

Many Heads but One Brain: Fusion Brain -- a Competition and a Single Multimodal Multitask Architecture

Supporting the current trend in the AI community, we present the AI Journey 2021 Challenge called Fusion Brain, the first competition aimed at building a universal architecture that can process different modalities (in this case, images, texts, and code) and solve multiple vision and language tasks.

Robust Region Feature Synthesizer for Zero-Shot Object Detection

Zero-shot object detection aims at incorporating class semantic vectors to realize the detection of (both seen and) unseen classes given an unconstrained test image.
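When both seen and unseen classes must be detected (the generalized setting), results are commonly summarized by the harmonic mean of the seen and unseen scores, which stays low if either one is low. A one-function sketch:

```python
def harmonic_mean(seen_score: float, unseen_score: float) -> float:
    """Harmonic mean of seen- and unseen-class scores (e.g. mAP or recall)."""
    if seen_score + unseen_score == 0:
        return 0.0
    return 2 * seen_score * unseen_score / (seen_score + unseen_score)


print(harmonic_mean(40.0, 10.0))  # 16.0
```

The arithmetic mean of the same pair would be 25.0, so the harmonic mean keeps strong seen-class numbers from hiding weak unseen-class performance.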

From Node to Graph: Joint Reasoning on Visual-Semantic Relational Graph for Zero-Shot Detection

Zero-Shot Detection (ZSD), which aims at localizing and recognizing unseen objects in a complicated scene, usually leverages the visual and semantic information of individual objects alone.
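Reasoning over a graph of regions, rather than over individual objects alone, lets each region's representation absorb context from related regions. The sketch shows one generic mean-aggregation message-passing step; it is not the paper's architecture, and the adjacency matrix and mixing weight `alpha` are hypothetical.

```python
def message_passing_step(node_feats, adjacency, alpha=0.5):
    """One round of mean-aggregation message passing: each region node mixes its
    own feature with the average feature of its neighbours.
    adjacency[i][j] is truthy when regions i and j are connected."""
    updated = []
    for i, feat in enumerate(node_feats):
        neighbours = [node_feats[j] for j, connected in enumerate(adjacency[i]) if connected and j != i]
        if not neighbours:
            updated.append(list(feat))  # isolated node keeps its own feature
            continue
        mean = [sum(col) / len(neighbours) for col in zip(*neighbours)]
        updated.append([(1 - alpha) * f + alpha * m for f, m in zip(feat, mean)])
    return updated


feats = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]  # toy region features
adj = [[0, 1, 0], [1, 0, 0], [0, 0, 0]]       # regions 0 and 1 are related; region 2 is isolated
result = message_passing_step(feats, adj)
print(result)  # [[0.5, 0.5], [0.5, 0.5], [0.0, 0.0]]
```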

Bridging the Gap between Object and Image-level Representations for Open-Vocabulary Detection

Two popular forms of weak supervision used in open-vocabulary detection (OVD) are pretrained CLIP models and image-level supervision.
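The two weak-supervision signals can be sketched as two losses. Both functions are generic stand-ins under stated assumptions, not the paper's exact formulation: an L1 loss pulls detector region embeddings toward CLIP embeddings of the same regions, and image-level tags supervise the detector by max-pooling region logits per class (a multiple-instance-style choice assumed here).

```python
import math


def l1_distillation_loss(detector_embs, clip_embs):
    """Mean absolute difference between detector region embeddings and the
    CLIP image-encoder embeddings of the same regions (pretrained-CLIP signal)."""
    total, count = 0.0, 0
    for d, c in zip(detector_embs, clip_embs):
        total += sum(abs(a - b) for a, b in zip(d, c))
        count += len(d)
    return total / count


def image_level_loss(region_logits, image_labels):
    """Image-level signal: max-pool each class's region logits into one image
    score, then apply binary cross-entropy against the image's class tags."""
    loss = 0.0
    for cls, present in image_labels.items():
        best = max(logits[cls] for logits in region_logits)
        p = 1.0 / (1.0 + math.exp(-best))  # sigmoid
        loss -= math.log(p) if present else math.log(1.0 - p)
    return loss / len(image_labels)


distill = l1_distillation_loss([[1.0, 2.0]], [[0.0, 2.0]])
img_loss = image_level_loss(
    [{"cat": 0.0, "dog": -3.0}, {"cat": 2.0, "dog": -1.0}],  # per-region class logits
    {"cat": True, "dog": False},                               # image tags only, no boxes
)
print(distill)  # 0.5
```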

Resolving Semantic Confusions for Improved Zero-Shot Detection

Zero-shot detection (ZSD) is a challenging task in which we aim to recognize and localize objects simultaneously, even when the model has not been trained on visual samples of some target ("unseen") classes.
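Semantic confusion arises when an unseen class's semantic vector lies close to a seen class's vector, so the detector tends to predict the familiar class. One simple diagnostic, assumed here for illustration rather than taken from the paper, ranks seen classes by cosine similarity to each unseen class; the toy word vectors are hypothetical.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def most_confusable_seen_class(unseen_vecs, seen_vecs):
    """For each unseen class, return the seen class with the most similar
    semantic vector and that similarity; high values flag likely confusion."""
    result = {}
    for u, uv in unseen_vecs.items():
        best = max(seen_vecs, key=lambda s: cosine(uv, seen_vecs[s]))
        result[u] = (best, cosine(uv, seen_vecs[best]))
    return result


seen = {"horse": [0.9, 0.1, 0.0], "car": [0.0, 0.1, 0.9]}
unseen = {"zebra": [0.8, 0.3, 0.0]}
result = most_confusable_seen_class(unseen, seen)
print(result["zebra"][0])  # horse
```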

ZBS: Zero-shot Background Subtraction via Instance-level Background Modeling and Foreground Selection

However, previous unsupervised deep-learning background subtraction (BGS) algorithms perform poorly in complex scenarios such as shadows or night lights, and they cannot detect objects outside the predefined categories.
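Instance-level background modeling can be pictured as keeping a list of stationary object instances and treating a new detection as foreground only when it does not match one of them. A minimal sketch under assumptions not stated in the source: detections come from a zero-shot or open-vocabulary detector as `(class, box)` pairs, and a same-class IoU of at least 0.7 (a hypothetical threshold) means the object has not moved.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def select_foreground(detections, background_instances, iou_thresh=0.7):
    """Keep a detection unless it overlaps a stored background instance of the
    same class by at least `iou_thresh` (i.e. the object has not moved)."""
    foreground = []
    for cls, box in detections:
        is_background = any(
            cls == bg_cls and iou(box, bg_box) >= iou_thresh
            for bg_cls, bg_box in background_instances
        )
        if not is_background:
            foreground.append((cls, box))
    return foreground


background = [("car", (0, 0, 10, 10))]  # a parked car recorded by the background model
detections = [("car", (0, 0, 10, 10)), ("person", (20, 20, 30, 40)), ("car", (50, 0, 60, 10))]
foreground = select_foreground(detections, background)
print([cls for cls, _ in foreground])  # ['person', 'car']
```

Because class names are arbitrary strings from the detector, categories outside any predefined list pass through the same logic.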

MS COCO

LVIS

PASCAL VOC 2007

MSCOCO

RF100