Search Results for author: Thomas H. Li

Found 30 papers, 21 papers with code

StreamFlow: Streamlined Multi-Frame Optical Flow Estimation for Video Sequences

1 code implementation • 28 Nov 2023 • Shangkun Sun, Jiaming Liu, Thomas H. Li, Huaxia Li, Guoqing Liu, Wei Gao

To address this issue, multi-frame optical flow methods leverage adjacent frames to mitigate the local ambiguity.

Mug-STAN: Adapting Image-Language Pretrained Models for General Video Understanding

1 code implementation • 25 Nov 2023 • Ruyang Liu, Jingjia Huang, Wei Gao, Thomas H. Li, Ge Li

Large-scale image-language pretrained models, e.g., CLIP, have demonstrated remarkable proficiency in acquiring general multi-modal knowledge through web-scale image-text data.

Efficient Test-Time Adaptation for Super-Resolution with Second-Order Degradation and Reconstruction

1 code implementation • NeurIPS 2023 • Zeshuai Deng, Zhuokun Chen, Shuaicheng Niu, Thomas H. Li, Bohan Zhuang, Mingkui Tan

Then, we adapt the SR model by implementing feature-level reconstruction learning from the initial test image to its second-order degraded counterparts, which helps the SR model generate plausible HR images.

One For All: Video Conversation is Feasible Without Video Instruction Tuning

1 code implementation • 27 Sep 2023 • Ruyang Liu, Chen Li, Yixiao Ge, Ying Shan, Thomas H. Li, Ge Li

Without bells and whistles, BT-Adapter achieves state-of-the-art zero-shot results on various video tasks using thousands fewer GPU hours.

Ranked #5 on Zero-Shot Video Retrieval on LSMDC

Video-based Generative Performance Benchmarking (Consistency) • Video-based Generative Performance Benchmarking (Contextual Understanding) • +6

$A^2$Nav: Action-Aware Zero-Shot Robot Navigation by Exploiting Vision-and-Language Ability of Foundation Models

no code implementations • 15 Aug 2023 • Peihao Chen, Xinyu Sun, Hongyan Zhi, Runhao Zeng, Thomas H. Li, Gaowen Liu, Mingkui Tan, Chuang Gan

We study the task of zero-shot vision-and-language navigation (ZS-VLN), a practical yet challenging problem in which an agent learns to navigate following a path described by language instructions without requiring any path-instruction annotation data.

Learning Vision-and-Language Navigation from YouTube Videos

1 code implementation • ICCV 2023 • Kunyang Lin, Peihao Chen, Diwei Huang, Thomas H. Li, Mingkui Tan, Chuang Gan

In this paper, we propose to learn an agent from these videos by creating a large-scale dataset which comprises reasonable path-instruction pairs from house tour videos and pre-training the agent on it.

Hard Sample Matters a Lot in Zero-Shot Quantization

1 code implementation • CVPR 2023 • Huantong Li, Xiangmiao Wu, Fanbing Lv, Daihai Liao, Thomas H. Li, Yonggang Zhang, Bo Han, Mingkui Tan

Nonetheless, we find that the synthetic samples constructed in existing ZSQ methods can be easily fitted by models.

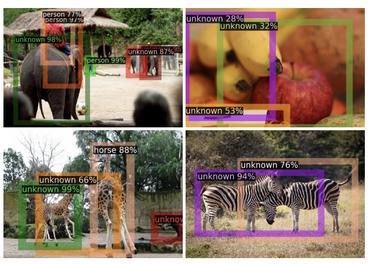

Detecting the open-world objects with the help of the Brain

1 code implementation • 21 Mar 2023 • Shuailei Ma, Yuefeng Wang, Ying Wei, Peihao Chen, Zhixiang Ye, Jiaqi Fan, Enming Zhang, Thomas H. Li

We propose leveraging the VL as the "Brain" of the open-world detector by simply generating unknown labels.

Causality Compensated Attention for Contextual Biased Visual Recognition

1 code implementation • ICLR 2023 • Ruyang Liu, Jingjia Huang, Ge Li, Thomas H. Li

Visual attention does not always capture the essential object representation desired for robust predictions.

Ranked #1 on Multi-Label Image Classification on MSCOCO

Multi-Label Classification • Multi-Label Image Classification • +1

Revisiting Temporal Modeling for CLIP-based Image-to-Video Knowledge Transferring

1 code implementation • CVPR 2023 • Ruyang Liu, Jingjia Huang, Ge Li, Jiashi Feng, Xinglong Wu, Thomas H. Li

In this paper, based on the CLIP model, we revisit temporal modeling in the context of image-to-video knowledge transferring, which is the key point for extending image-text pretrained models to the video domain.

Ranked #7 on Video Retrieval on MSR-VTT-1kA (using extra training data)

CAT: LoCalization and IdentificAtion Cascade Detection Transformer for Open-World Object Detection

no code implementations • CVPR 2023 • Shuailei Ma, Yuefeng Wang, Jiaqi Fan, Ying Wei, Thomas H. Li, Hongli Liu, Fanbing Lv

Open-world object detection (OWOD), as a more general and challenging goal, requires the model trained from data on known objects to detect both known and unknown objects and incrementally learn to identify these unknown objects.

Improving Graph Representation for Point Cloud Segmentation via Attentive Filtering

no code implementations • CVPR 2023 • Nan Zhang, Zhiyi Pan, Thomas H. Li, Wei Gao, Ge Li

Recently, self-attention networks have achieved impressive performance in point cloud segmentation due to their superiority in modeling long-range dependencies.

Learning Active Camera for Multi-Object Navigation

no code implementations • 14 Oct 2022 • Peihao Chen, Dongyu Ji, Kunyang Lin, Weiwen Hu, Wenbing Huang, Thomas H. Li, Mingkui Tan, Chuang Gan

How to make robots perceive the environment as efficiently as humans is a fundamental problem in robotics.

Weakly-Supervised Multi-Granularity Map Learning for Vision-and-Language Navigation

1 code implementation • 14 Oct 2022 • Peihao Chen, Dongyu Ji, Kunyang Lin, Runhao Zeng, Thomas H. Li, Mingkui Tan, Chuang Gan

To achieve accurate and efficient navigation, it is critical to build a map that accurately represents both spatial location and the semantic information of the environment objects.

Masked Motion Encoding for Self-Supervised Video Representation Learning

2 code implementations • CVPR 2023 • Xinyu Sun, Peihao Chen, LiangWei Chen, Changhao Li, Thomas H. Li, Mingkui Tan, Chuang Gan

The latest attempts seek to learn a representation model by predicting the appearance contents in the masked regions.

Ranked #2 on Self-Supervised Action Recognition on HMDB51

Frequency-Aware Self-Supervised Monocular Depth Estimation

1 code implementation • 11 Oct 2022 • Xingyu Chen, Thomas H. Li, Ruonan Zhang, Ge Li

We present two versatile methods to generally enhance self-supervised monocular depth estimation (MDE) models.

Self-Supervised Monocular Depth Estimation: Solving the Edge-Fattening Problem

1 code implementation • 2 Oct 2022 • Xingyu Chen, Ruonan Zhang, Ji Jiang, Yan Wang, Ge Li, Thomas H. Li

In this paper, we redesign the patch-based triplet loss in MDE to alleviate the ubiquitous edge-fattening issue.

Ranked #1 on Unsupervised Monocular Depth Estimation on Kitti Raw

Deep Geometry Post-Processing for Decompressed Point Clouds

1 code implementation • 29 Apr 2022 • Xiaoqing Fan, Ge Li, Dingquan Li, Yurui Ren, Wei Gao, Thomas H. Li

Point cloud compression plays a crucial role in reducing the huge cost of data storage and transmission.

Neural Texture Extraction and Distribution for Controllable Person Image Synthesis

1 code implementation • CVPR 2022 • Yurui Ren, Xiaoqing Fan, Ge Li, Shan Liu, Thomas H. Li

Our model is trained to predict human images in arbitrary poses, which encourages it to extract disentangled and expressive neural textures representing the appearance of different semantic entities.

PIRenderer: Controllable Portrait Image Generation via Semantic Neural Rendering

1 code implementation • ICCV 2021 • Yurui Ren, Ge Li, Yuanqi Chen, Thomas H. Li, Shan Liu

The proposed model can generate photo-realistic portrait images with accurate movements according to intuitive modifications.

Combining Attention with Flow for Person Image Synthesis

no code implementations • 4 Aug 2021 • Yurui Ren, Yubo Wu, Thomas H. Li, Shan Liu, Ge Li

Pose-guided person image synthesis aims to synthesize person images by transforming reference images into target poses.

Structure-Transformed Texture-Enhanced Network for Person Image Synthesis

no code implementations • ICCV 2021 • Munan Xu, Yuanqi Chen, Shan Liu, Thomas H. Li, Ge Li

The pose-guided virtual try-on task aims to modify the fashion item based on the pose transfer task.

Deep Spatial Transformation for Pose-Guided Person Image Generation and Animation

1 code implementation • 27 Aug 2020 • Yurui Ren, Ge Li, Shan Liu, Thomas H. Li

We show that our framework can spatially transform the inputs in an efficient manner.

Deep Image Spatial Transformation for Person Image Generation

2 code implementations • CVPR 2020 • Yurui Ren, Xiaoming Yu, Junming Chen, Thomas H. Li, Ge Li

Finally, we warp the source features using a content-aware sampling method with the obtained local attention coefficients.

StructureFlow: Image Inpainting via Structure-aware Appearance Flow

1 code implementation • ICCV 2019 • Yurui Ren, Xiaoming Yu, Ruonan Zhang, Thomas H. Li, Shan Liu, Ge Li

Image inpainting techniques have recently shown significant improvements by using deep neural networks.

Deep AutoEncoder-based Lossy Geometry Compression for Point Clouds

no code implementations • 18 Apr 2019 • Wei Yan, Yiting Shao, Shan Liu, Thomas H. Li, Zhu Li, Ge Li

A point cloud is a fundamental 3D representation that is widely used in real-world applications such as autonomous driving.

Graph Convolutional Label Noise Cleaner: Train a Plug-and-play Action Classifier for Anomaly Detection

1 code implementation • CVPR 2019 • Jia-Xing Zhong, Nannan Li, Weijie Kong, Shan Liu, Thomas H. Li, Ge Li

Remarkably, we obtain the frame-level AUC score of 82.12% on UCF-Crime.

Anomaly Detection In Surveillance Videos • Multiple Instance Learning • +3

Step-by-step Erasion, One-by-one Collection: A Weakly Supervised Temporal Action Detector

no code implementations • 9 Jul 2018 • Jia-Xing Zhong, Nannan Li, Weijie Kong, Tao Zhang, Thomas H. Li, Ge Li

Weakly supervised temporal action detection is a Herculean task in understanding untrimmed videos, since no supervisory signal except the video-level category label is available on training data.

Exploiting the Value of the Center-dark Channel Prior for Salient Object Detection

no code implementations • 14 May 2018 • Chunbiao Zhu, Wen-Hao Zhang, Thomas H. Li, Ge Li

In this paper, we propose a novel salient object detection algorithm for RGB-D images using center-dark channel priors.

PDNet: Prior-model Guided Depth-enhanced Network for Salient Object Detection

2 code implementations • 23 Mar 2018 • Chunbiao Zhu, Xing Cai, Kan Huang, Thomas H. Li, Ge Li

One is the lack of a tremendous amount of annotated data to train a network.